Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Loading...

Welcome to the N2W Backup & Recovery User Guide. Here you will find all the documentation that you need to make the most of N2W.

N2W Backup & Recovery (CPM), known as N2W, is an enterprise-class backup, recovery, and disaster recovery solution for Amazon Web Services (AWS). Designed from the ground up to support AWS, N2W uses cloud-native technologies (e.g., EBS snapshots) to provide unmatched backup and, more importantly, restore capabilities in AWS.

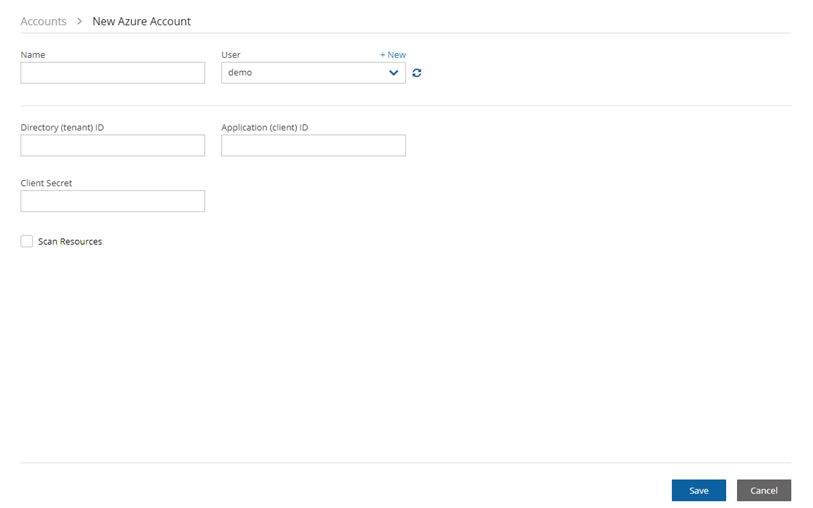

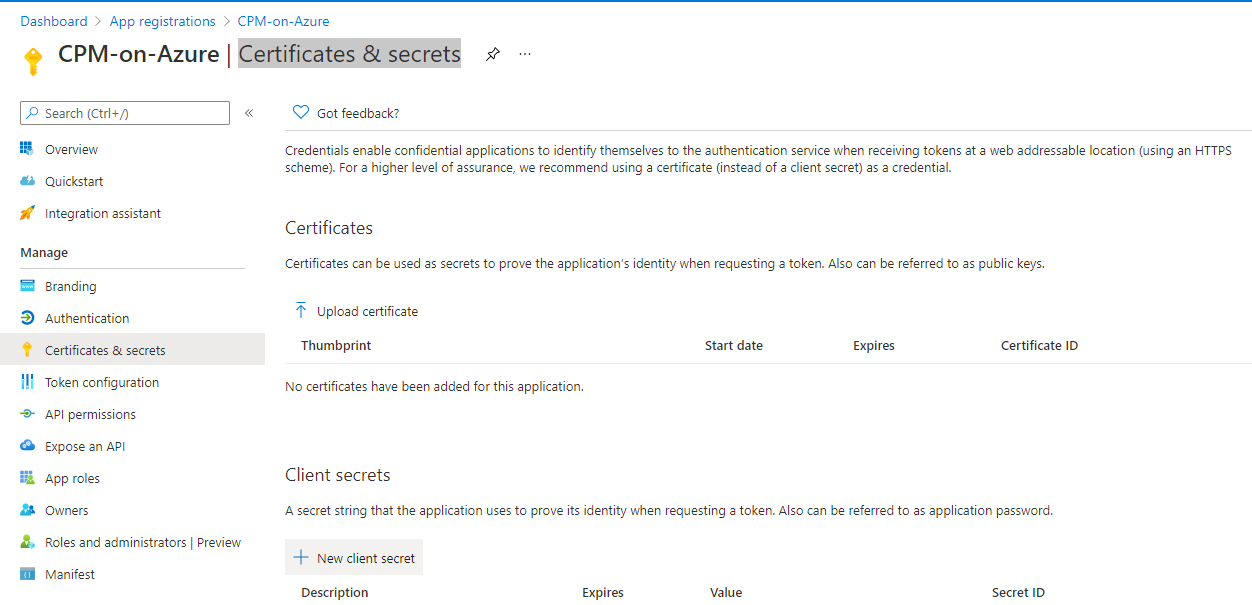

N2W also supports backup and recovery for Microsoft Azure Virtual Machines, SQL Servers, and Disks (section 26).

N2W also supports using Wasabi Cloud Object Storage for Amazon S3 backups (section 27).

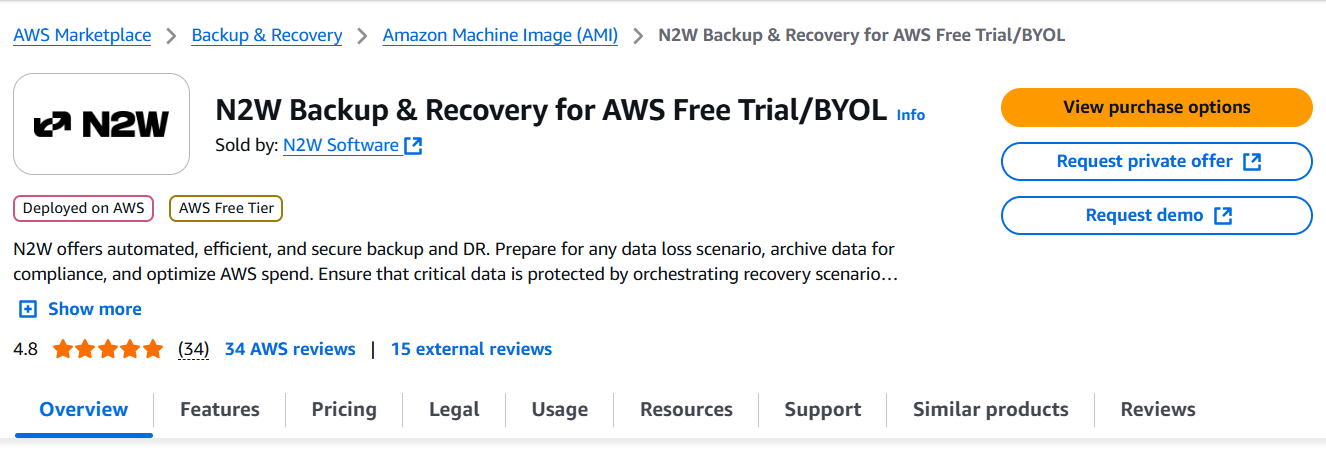

N2W is sold as a service. When you register to use the service, you get permission to launch a virtual Amazon Machine Image (AMI) of an EC2 instance. Once you launch the instance, and after a short configuration process, you can start backing up your data using N2W.

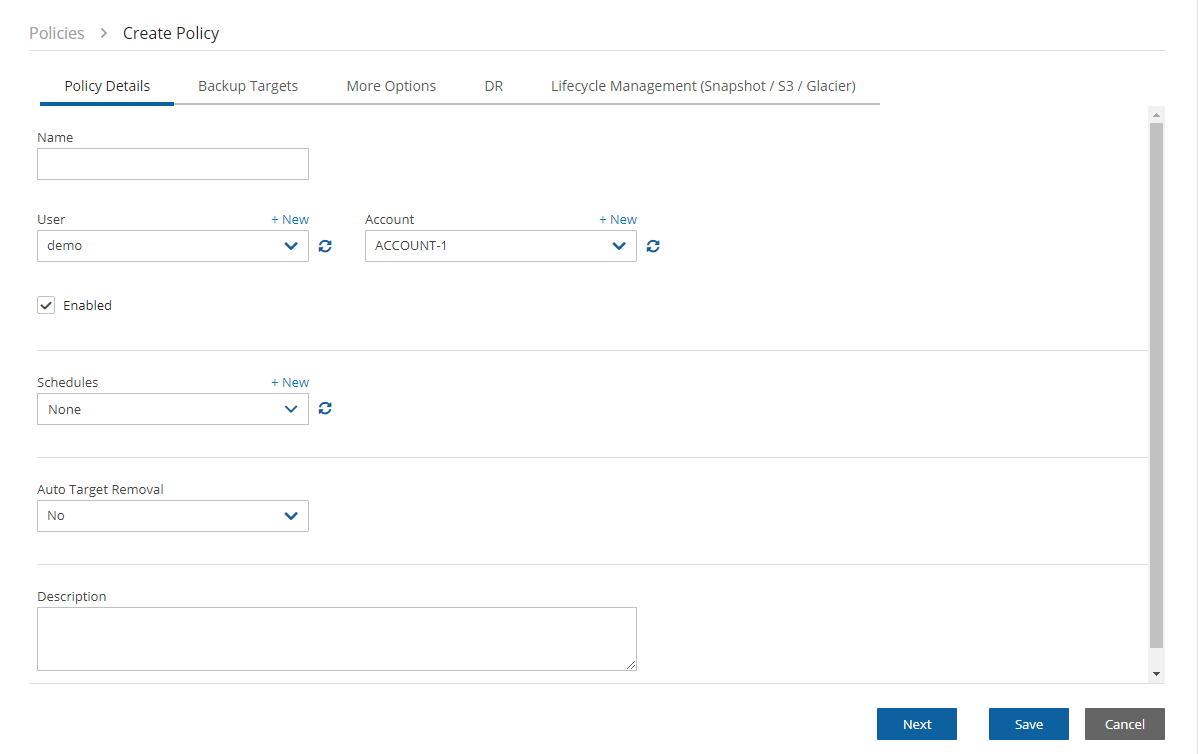

Using N2W, you can create backup policies, schedules, and import non-N2W backups to Amazon Simple Storage Service (S3). Backup policies define what you want to back up (i.e., Backup Targets) as well as other parameters, such as:

Frequency of backups

Number of backup generations to maintain, duration of retention periods, and lock application

Whether to copy the backup data to other AWS regions, etc.

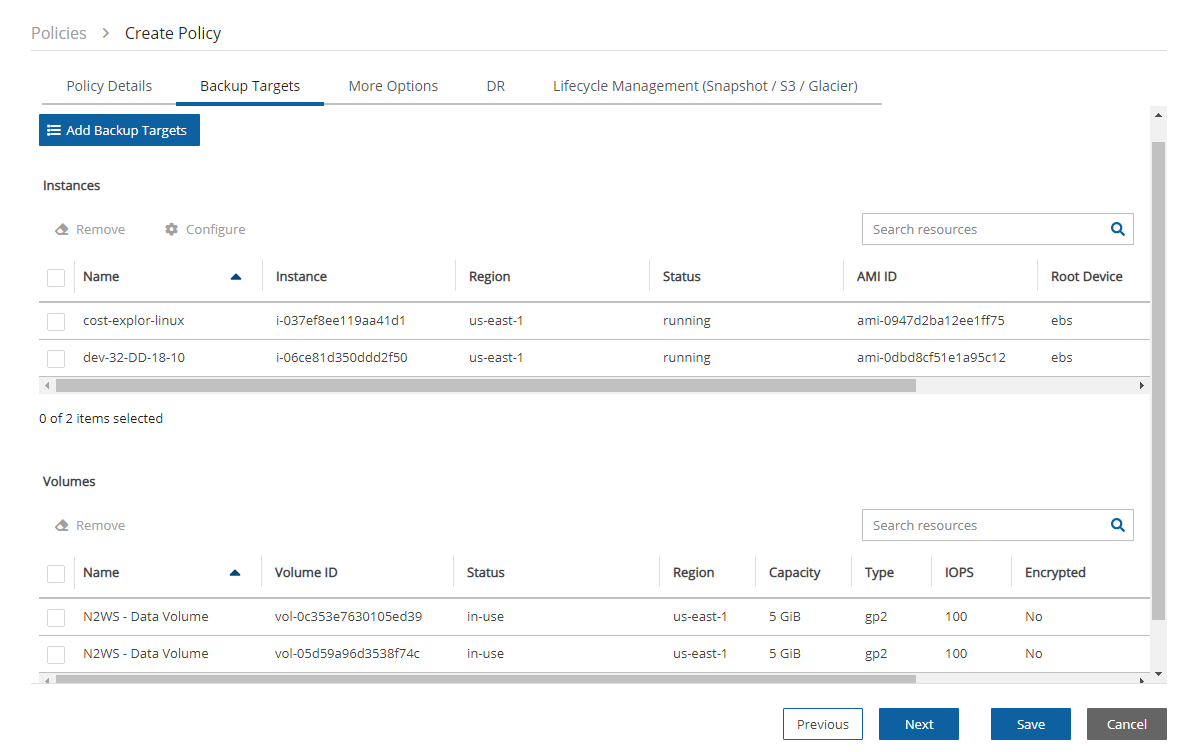

Backup targets can be of several different types, for example:

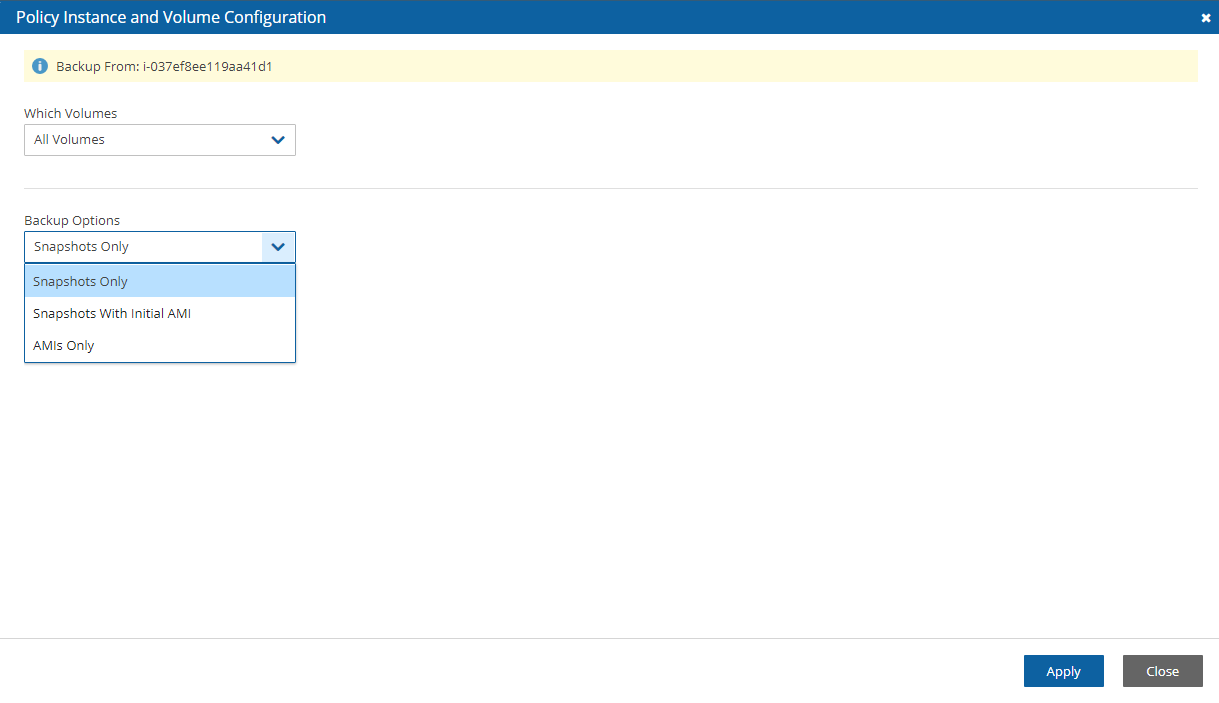

EC2 instances (including some or all instance’s EBS volumes)

Independent EBS volumes (regardless of whether they are attached and to which instance)

Amazon Relational Database Service (RDS) databases - regular and custom

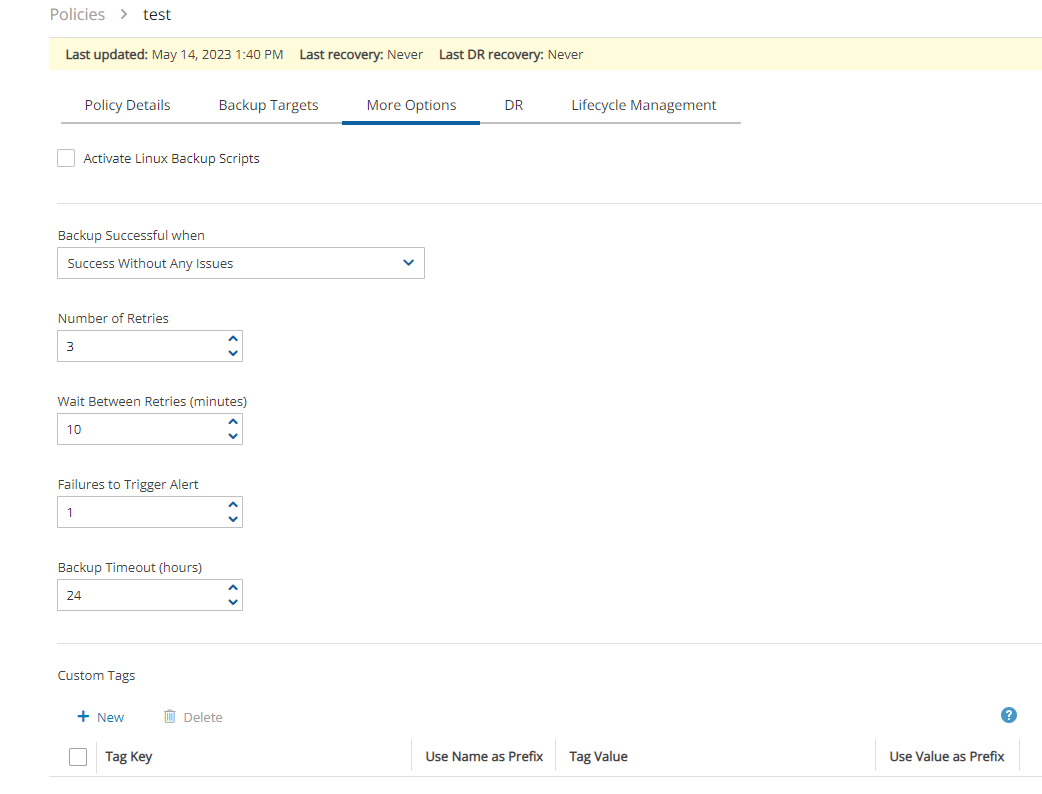

In addition to backup targets, you also define backup parameters, such as:

In Windows achieving application consistency using Microsoft Volume Shadow Copy Service (VSS)

Running backup scripts

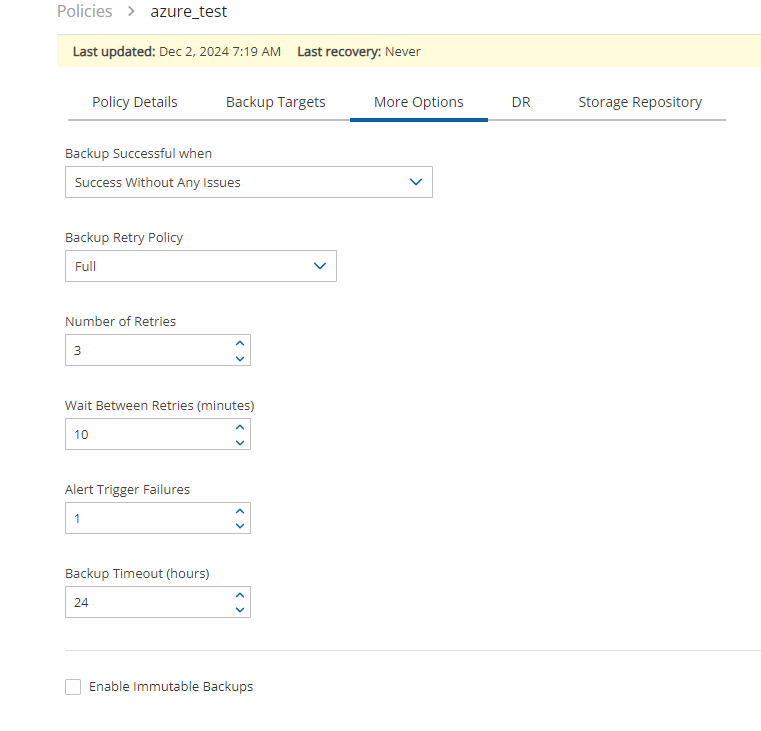

Number of retries in case of a failure

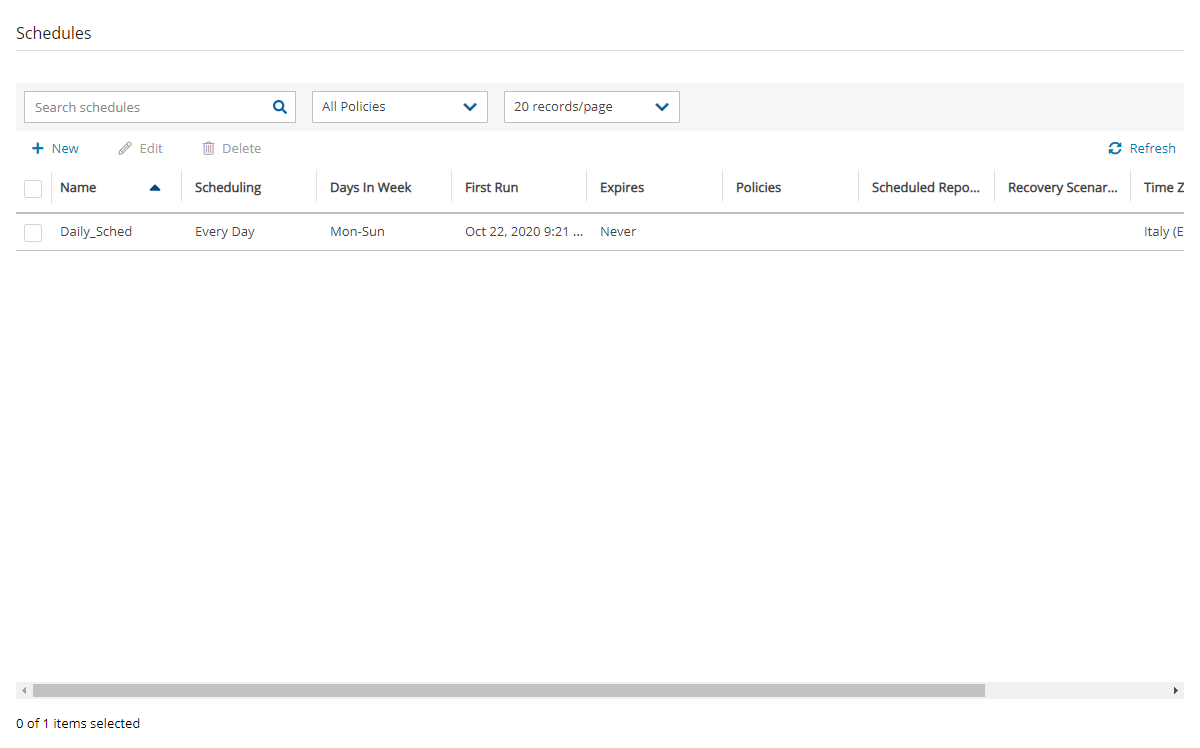

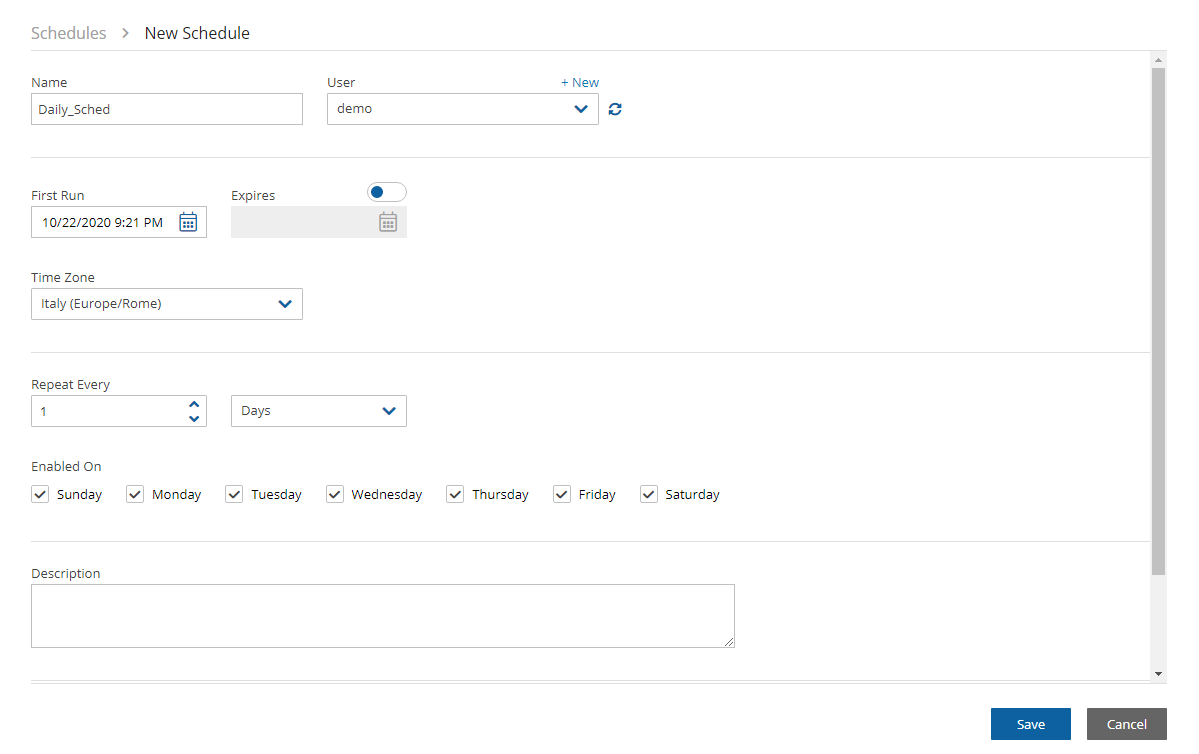

Schedules are used to define how you want to time the backups. You can define the following:

A start and end time for the schedule, including time zone of data

Backup frequency, e.g. every 15 minutes, every 4 hours, every day, etc.

Days of the week to run the policy

A policy can have one or more schedules associated with it. A schedule can be associated with one or more policies. As soon as you have an active policy defined with a schedule, backups will start automatically.

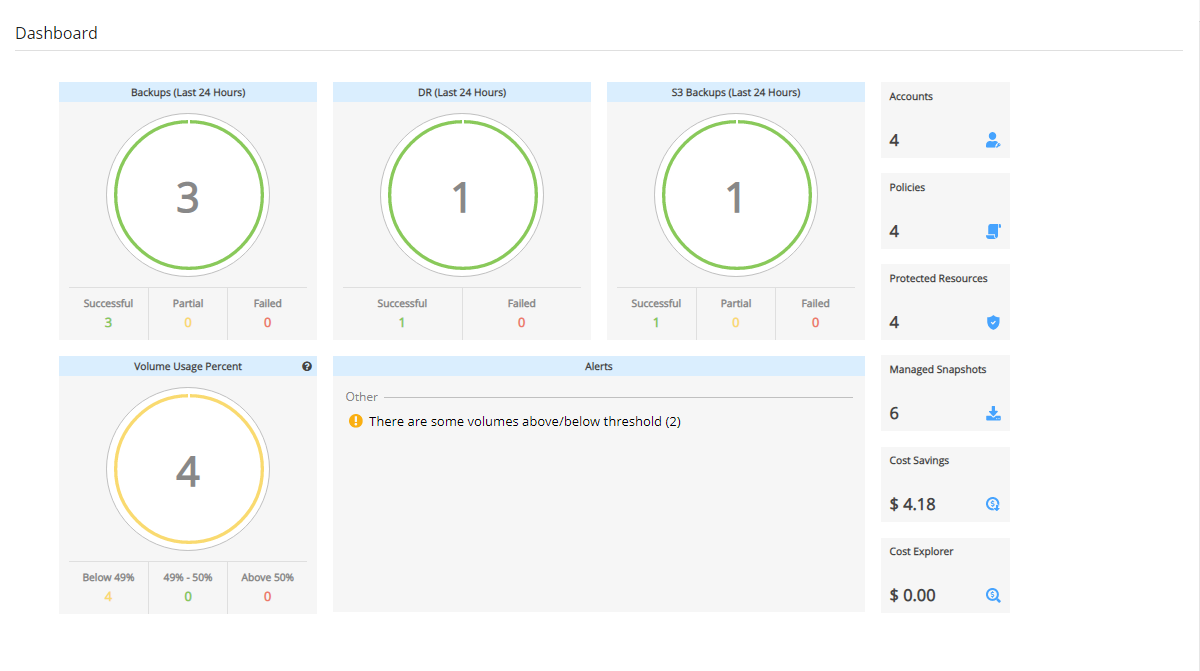

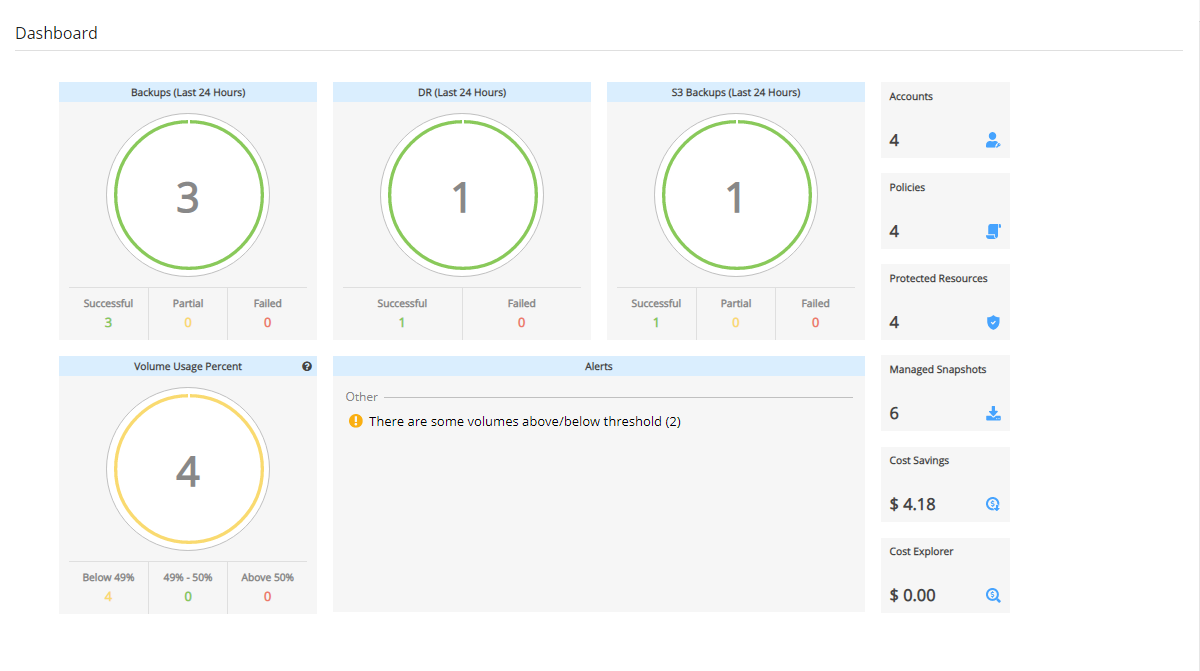

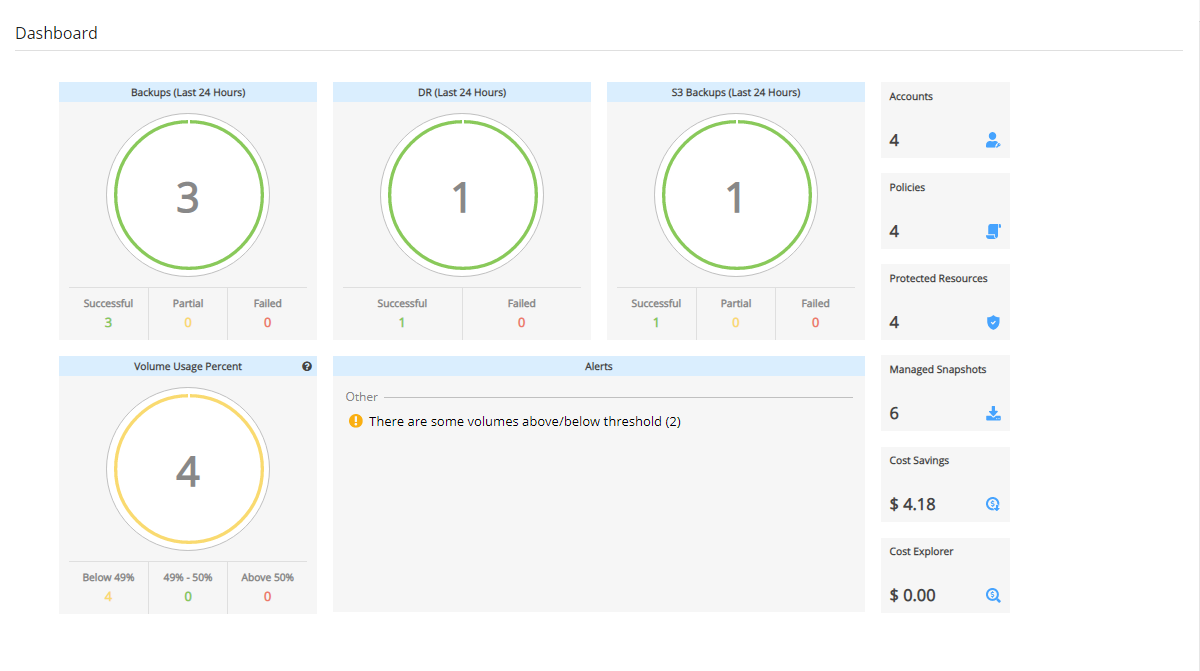

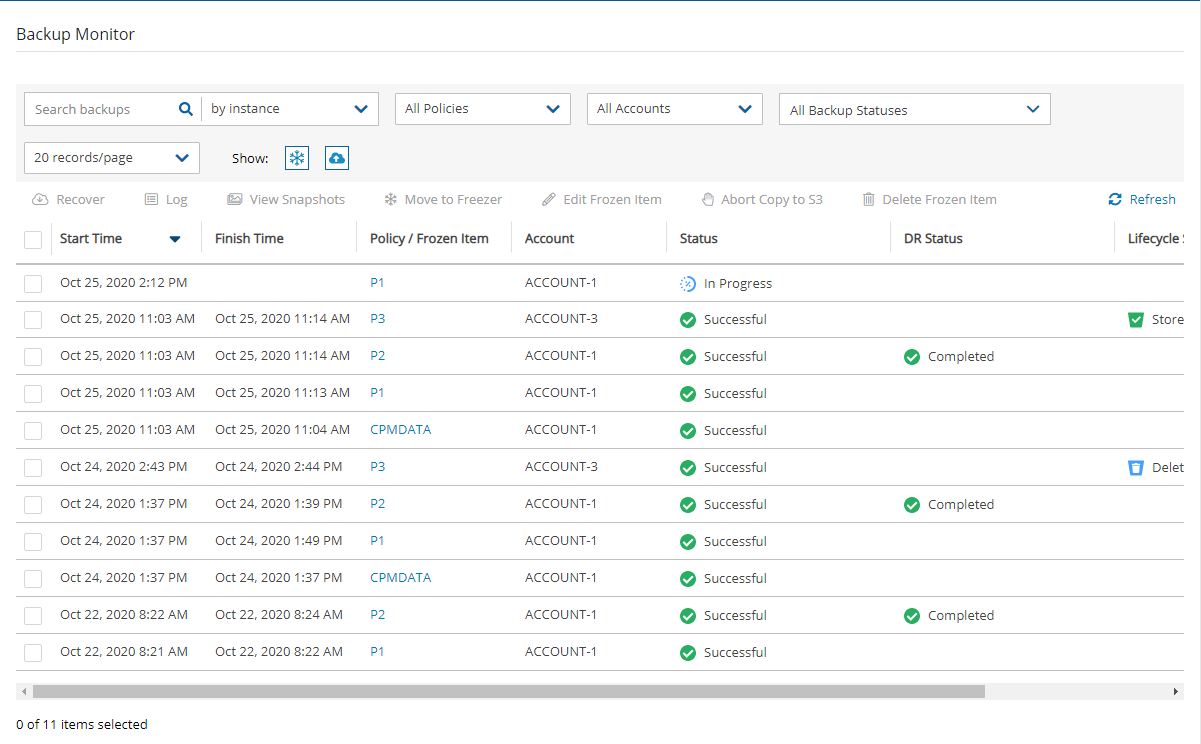

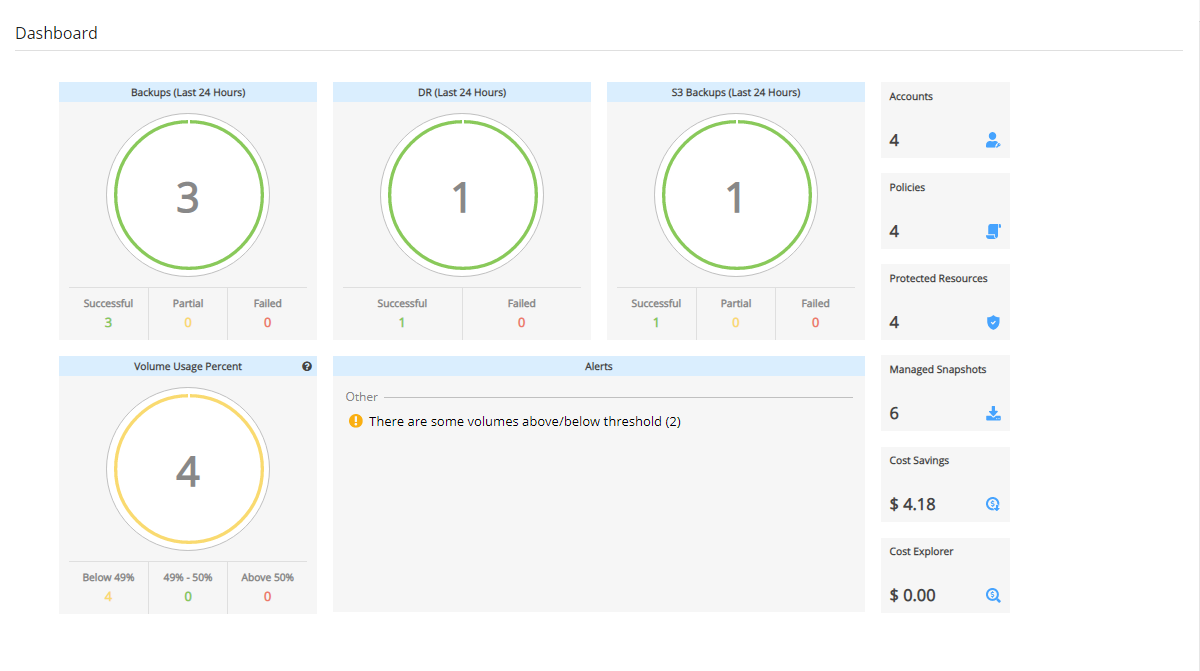

N2W provides monitoring at multiple levels. The Dashboard displays key performance indicators for backups, disaster recoveries, volume usage, backups to S3, and other metrics. Operation-specific monitors allow you to view details. And support for additional monitoring using Datadog and Splunk is available.

Following is a summary of the supported services for AWS and Azure backup targets:

AWS Main Backup Targets

*Cross-account DR to the original region incurs additional costs.

**Snapshots of EBS/RDS encrypted with default key cannot be copied cross account.

AWS FSx Backup Targets with Exceptions, Services, and Options

*Cross Account - FSx and Vaults must be encrypted with custom encryption key.

Azure Backup Targets

N2W is available in several different editions that support different usage tiers of the solution, e.g. number of protected instances, number of AWS, Azure, and Wasabi accounts supported, etc. The price for using the N2W software is a fixed monthly price which varies between the different N2W editions.

To see the different features for each edition, along with pricing and details, go to the . Once you subscribe to one of the N2W editions, you can launch an N2W Server instance and begin protecting your AWS environment. Only one N2W Server per subscription will actually perform a backup. If you run additional instances, they will only perform recovery operations (section ).

If you are already subscribed and using one N2W edition and want to move to another that better fits your needs, you need to perform the following steps:

Before proceeding, it is highly recommended that you create a snapshot of your CPM data volume. You can delete that snapshot once your new N2W Server is up and running. The data volume is typically named N2W – Data Volume.

Terminate your existing N2W instance. N2W recommends that you do so while no backup is running.

Unsubscribe from your current N2W edition. It is important since you will continue to be billed for that edition if you don’t cancel your subscription. You will only be able to unsubscribe if you don’t have any running instances of your old edition. You manage your subscriptions on the AWS Marketplace site on the page.

Subscribe to the new N2W Edition and launch an instance. You need to launch the instance in the same Availability Zone (AZ) as the old one. If you want to launch your new N2W Server in a different zone or region, you will need to create a snapshot of the data volume and either create the volume in another zone or copy the snapshot to another region and create the volume there.

Once configuration completes, continue to work with your existing configuration with the new N2W edition.

If you moved to a lower N2W edition, you may find yourself in a situation where you exceed the resources your new edition allows. For example, you used N2W Advanced Edition and you moved to N2W Standard Edition, which allows fewer instances. N2W will detect such a situation as a compliance issue, will cease to perform backups, display a message, and issue an alert detailing the problem.

To fix the problem:

Move back to an N2W edition that fits your current configuration, or

Remove the excessive resources, e.g., remove users, AWS accounts, or instances from policies.

Once the resources are back in line with the current edition, N2W will automatically resume normal operations.

The N2W Server is a Linux-based virtual appliance. It uses AWS APIs to access your AWS account. It allows managing snapshots of EBS volumes, RDS instances and clusters, Redshift clusters, DocumentDB, and DynamoDB tables. Except in cases where the user chooses to install our Thin Backup Agent for Windows Servers or use the AWS Simple System Manager (SSM) Remote Agent, N2W does not directly access your instances. Access is performed by the agent, or by a script that the user provides, which performs application quiescence.

N2W consists of the following parts, all of which reside on the N2W virtual server:

A database that holds your backup related metadata.

A Web/Management server that manages metadata.

A backup server that performs the backup operations. These components reside in the N2W server.

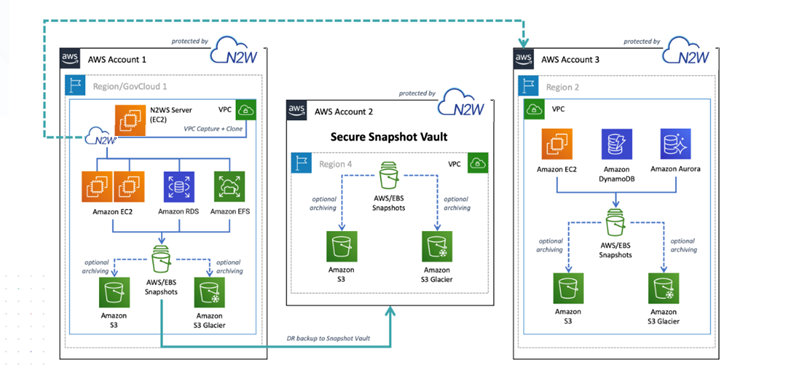

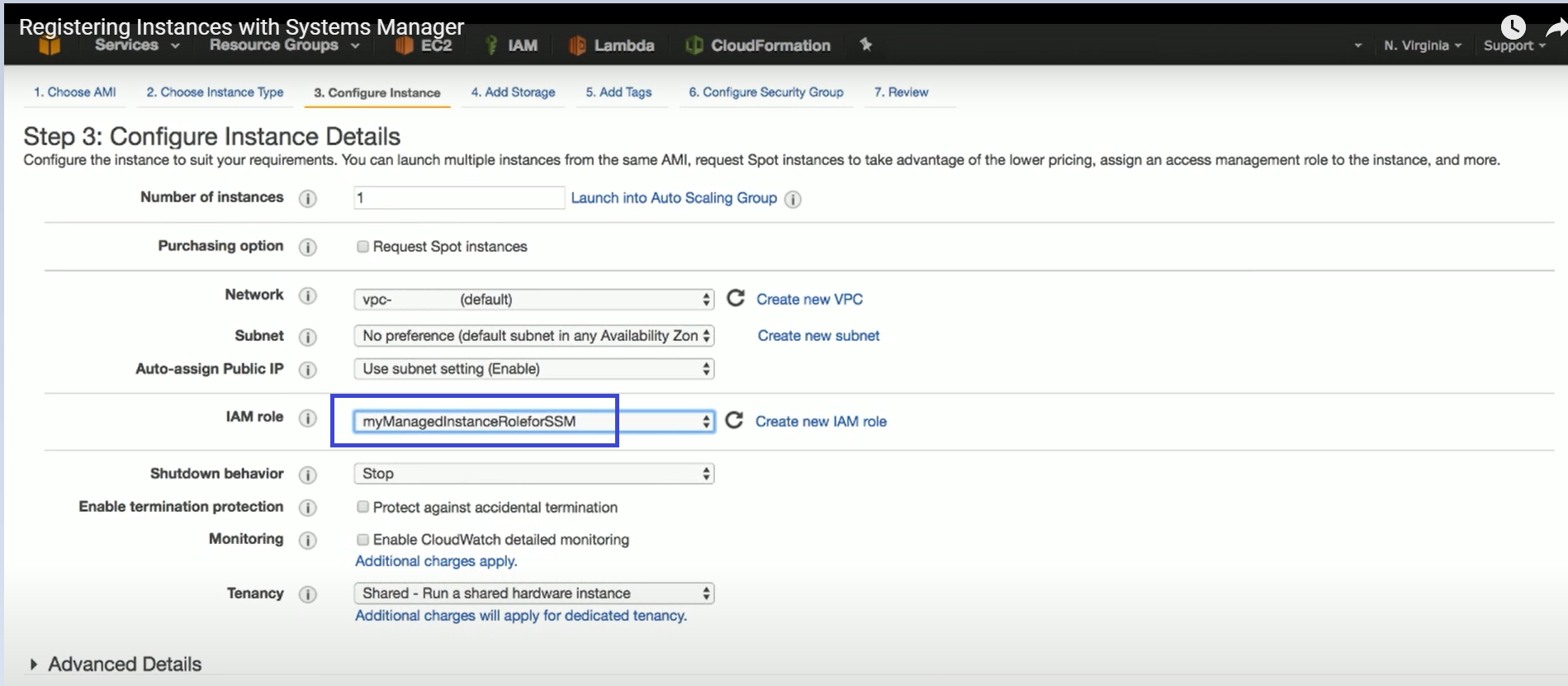

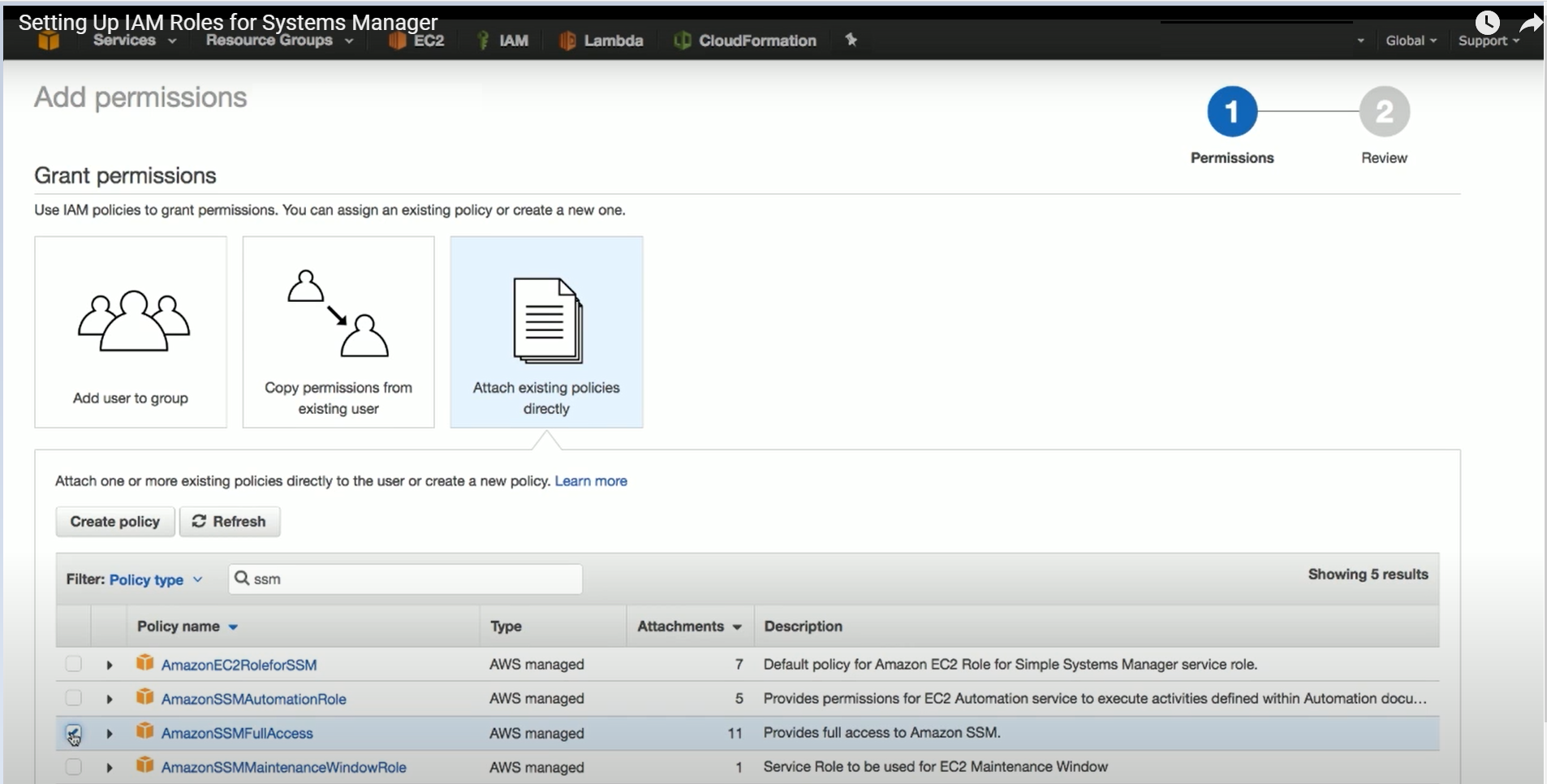

The N2W architecture is shown below. N2W Server is an EC2 instance inside the cloud, but it also connects to the AWS infrastructure to manage the backup of other instances. N2W does not need to communicate or interfere in any way with the operation of other instances. The only case where the N2W server communicates directly with and has software installed on an instance is when backing up Windows Servers for customers who want to use Microsoft VSS for application quiescing.

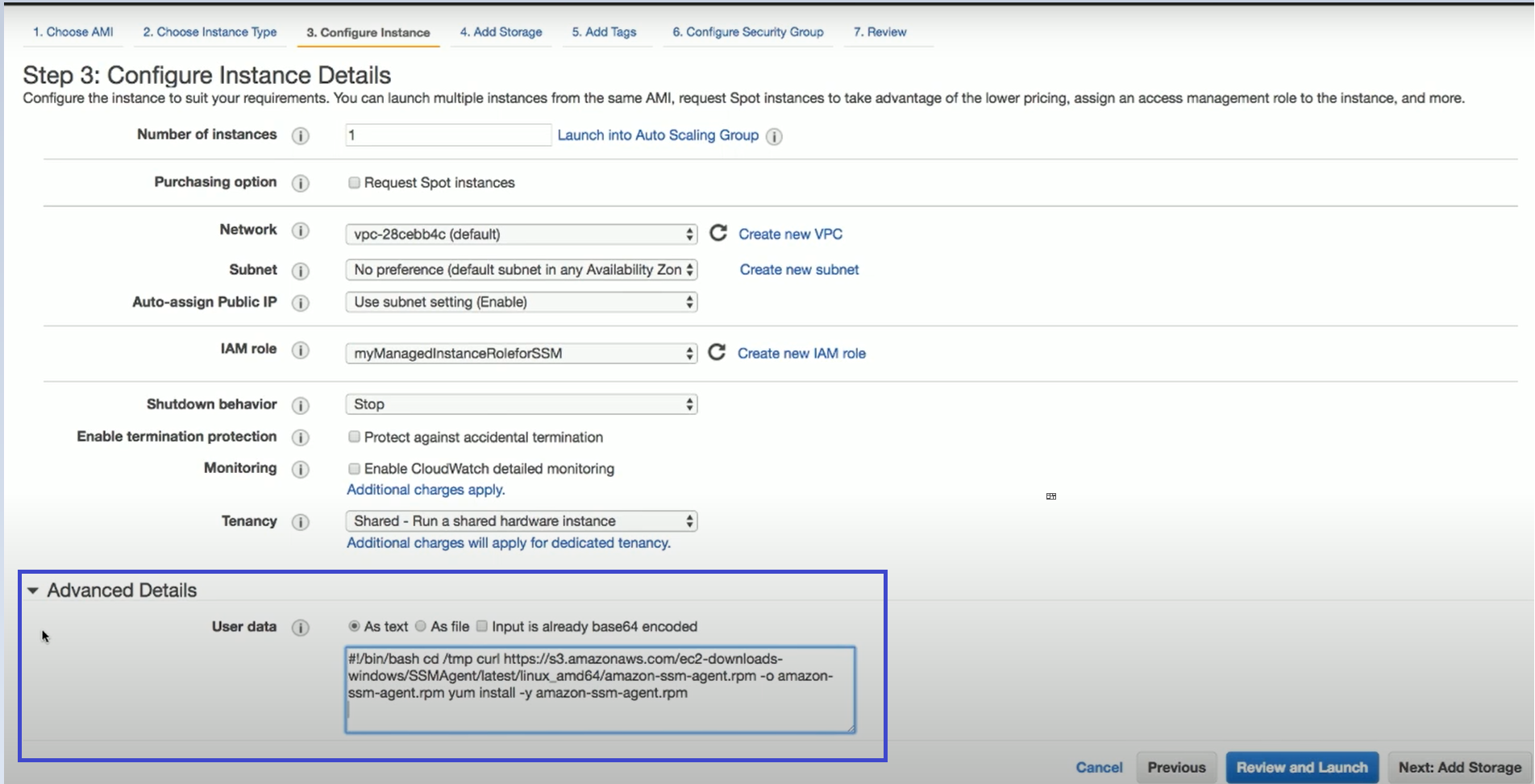

If you wish to have VSS or script support for application quiescence, you need to install the AWS SSM Agent or the N2W Thin Backup Agent. The agent gets its configuration from the N2W server, using the HTTPS protocol.

The SSM Agent doesn't require any inbound ports to be opened. All communication from the agent is outbound from HTTPS to the SSM and EC2 Message endpoints in the region where your instances are registered.

The N2W instance is an EBS-based instance with two EBS volumes. One is the root device, and the other is the CPM data volume. All persistent data and configuration information reside on the data volume. From N2W’s perspective, the root device is dispensable. You can always terminate your N2W instance and launch a new one, then using a short configuration process continue working with your existing data volume.

Although you have access to the N2W Server instance via SSH, N2W Software expects the N2W Server instance will be used as a virtual appliance. N2W Software expects you not to change the OS and not to start running additional products or services on the instance. If you do so and it affects N2W, N2W Software will not be able to provide you with support. Our first requirement will be for you to launch a clean N2W server.

Remember that all your changes in the OS will be wiped out as soon as you upgrade to a new release of N2W, which will come in the form of a new image (AMI). If you need to install software to use with backup scripts (e.g., Oracle client) or you need to install a Linux OS security update, you can. N2W Software recommends that you consult before doing so.

N2W server runs on an EBS-based instance. This means that you can stop and start it whenever you like. But if you create an image (AMI) of it and launch a new one with the system and data volume, you will find that the new server will not be fully functional. It will load and will allow you to perform recovery, but it will not continue performing backup as this is not the supported way to back up N2W servers. What you need to do, is to back up only the data volume, launch a fresh N2W server, and connect it to a recovered data volume. See section .

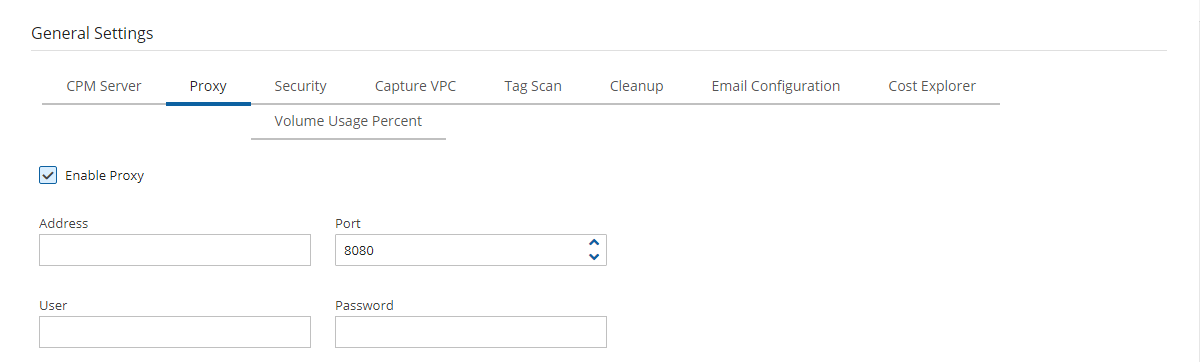

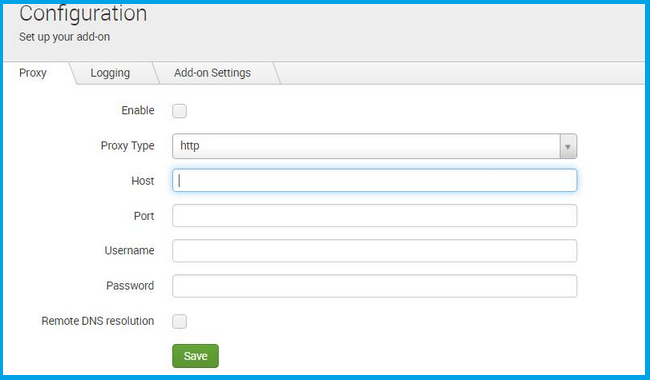

N2W needs connectivity to AWS endpoints to be able to use AWS APIs. This requires Internet connectivity. If you need N2W to connect to the Internet via an HTTP Proxy, that is fully supported. During configuration, you will be able to enable proxy use and enter all the required details and credentials: proxy address, port, user, and password. User and password are optional and can be left empty if the proxy server does not require authentication. Once you configure proxy settings at the configuration stage, they will also be set for use in the main application.

The proxy setting can be modified at any time in the Proxy tab of N2W Server Settings > General Settings. Select or clear Enable Proxy. If enabled, enter the requested proxy information.

If you are trying to launch multiple N2W servers of the same edition in the same account, you will find that from the second one on, no backup will be performed. Each such server will assume it is a temporary server for recovery purposes and will allow only recovery. Typically, one N2W server should be enough to back up your entire EC2 environment. If you need more resources, you should upgrade to a higher edition of N2W. If you do need to use more than one N2W server in your account, contact .

As part of the cloud ecosystem, N2W relies on web technology. The management interface through which you manage backup and recovery operations is web-based. The APIs which N2W uses to communicate with AWS are web-based. All communication with the N2W server is performed using the HTTPS protocol, which means it is all encrypted. This is important since sensitive data will be JavaScript communicated to/from the N2W server, for example, AWS credentials, N2W credentials, object IDs of your AWS objects (instances, volumes, databases, images, snapshot IDs, etc.).

Most interactions with the N2W server are performed via a web browser.

Since N2W uses modern web technologies, you will need your browser to be enabled for JavaScript.

N2W supports Microsoft Chromium Edge, Mozilla Firefox, and Google Chrome.

Other browsers are not supported.

If you want to view a getting-started tutorial, or to try the fully-functional N2W free for 30 days, go to . Follow the instructions in the ‘Getting Started with N2W Backup & Recovery for AWS’ video.

It is not necessary to reinstall N2W after purchasing a license.

It is now possible to have a free trial of N2W with the usage limitations customized for your specific AWS infrastructure. Contact to start your customized free trial. The N2W Software sales team may provide a reference code for your customized installation.

N2W provides customers the ability to back up and recover on-premise workloads running on AWS Outposts as well as workloads on AWS. N2W can run the core backup application on the AWS cloud and protect workloads running either on regions outside of AWS Outposts or protect applications that need to be backed up on AWS Outposts.

N2W supports the following AWS services running on Outposts:

EC2/EBS/RDS/SES/S3/VPC

The services can be deployed in all AWS regions.

N2W is available on AWS Marketplace with different editions ready to support any size environment:

You can launch N2W as an AMI directly from the AWS Marketplace or use a pre-configured CloudFormation (CF) template. Configuration takes a few minutes. Find our install videos here:

For further information regarding the AWS Outposts service, go to

The prerequisite for support is complete installation of N2W Backup & Recovery. Use cases are:

Backup - N2W can either back up applications, such as a media server, that run on AWS Outposts by storing the backup data on Outposts, as well as protect applications running outside of AWS Outposts by storing backup data in the same AWS region.

Disaster Recovery (DR) - In the case of Disaster Recovery, N2W protects resources running on AWS Outposts and copies data to another AWS Region or AWS account.

Be sure to review this entire section before starting an N2W installation (section or an upgrade (section ).

You might want to review the configuration options available in silent mode installation. See section for AWS, section for AWS with Secrets Manager, and section for Azure.

The primary differences between an installation and an upgrade are:·

RDS Aurora clusters, including Aurora Serverless

Redshift clusters

DocumentDB

DynamoDB tables

Elastic File System (EFS)

FSx File Systems - Lustre, NetApp ONTAP, Windows with managed Active Directory, OpenZFS

S3 Sync to copy objects between S3 buckets

EKS Clusters and Namespaces

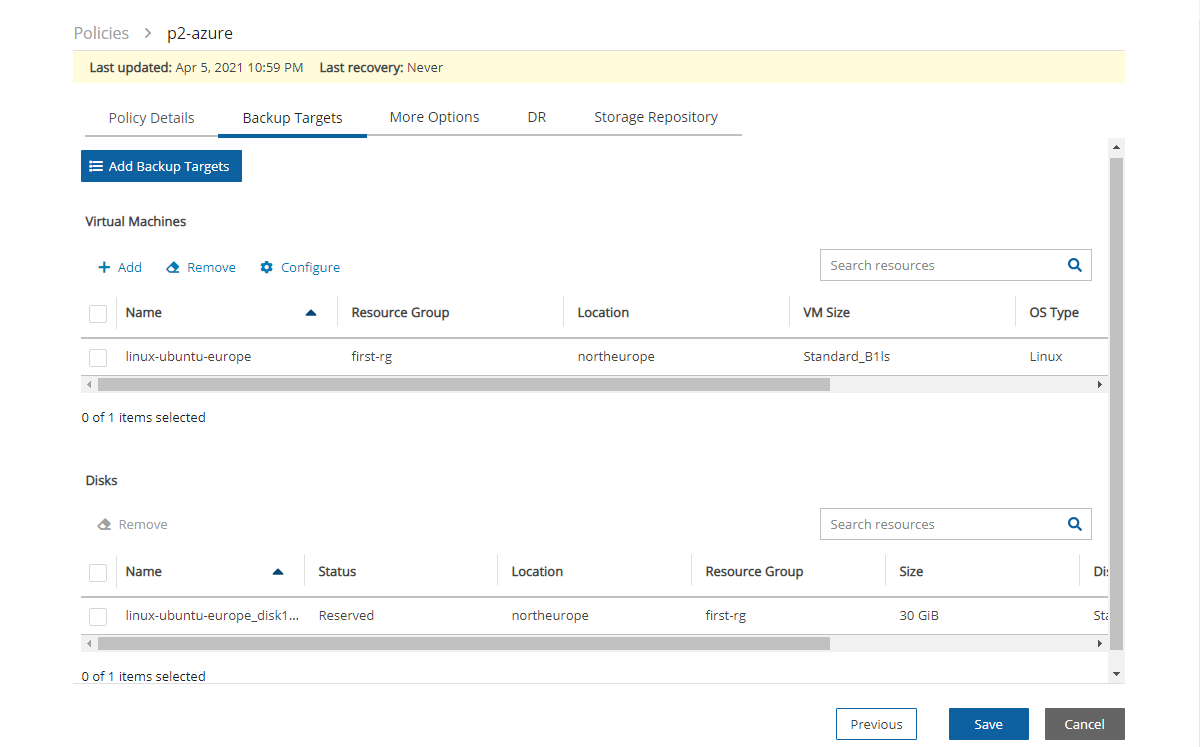

For Azure policies, Virtual Machines (VM), SQL Servers, and Disks

EBS

Y

Y **

Y

Y

EFS

Y

Y

N

N

FSX

See below

See below

See below

N

Redshift Cluster

N

Y *

XAccount to original region is always Full

Y

Y

RDS

Y

Y

Y

Y

S3 Bucket

Y

Y

N

N

Y

N

N

OpenZFS

Y

Y AWS

Y AWS - Optional XRegion

Windows File Server

Y

Y FSx

Y AWS - Optional XRegion

Y

DR – XRegion ONLY

Y

VM

Y

Y

N

During configuration, choose Use Existing Data Volume and select the existing data volume.

DR failback reverses the workflow.

EC2

Y

Y

Y

Service/Option

Backup

Cross Region DR

Cross Account DR*

Lustre

Y

Y FSx

Y Persistent HDD -

AWS - Optional XRegion

Service

Backup

DR - Cross Region

Copy to Repository

Disk

Y

Y

N

Y

NetApp ONTAP

SQL Server

In an upgrade, you use an existing CPM data volume, rather than creating a new volume.

In an upgrade, for each version you are upgrading from, there are specific steps to follow before performing the actual upgrade.

The N2W management console is accessed via a web browser over HTTPS.

When a new N2W Server is launched, the server will automatically generate a new self-signed SSL certificate. This certificate will be used for the web application in the configuration step.-

If no other SSL certificate is uploaded to the N2W Server, the same certificate will be used also for the main N2W application.

Every N2W Server will get its own certificate.

Since the certificate is not signed by an external Certificate Authority, you will need to approve an exception in your browser to start using N2W.

For complete details about securing default certificates on N2W server, see Appendix G – Securing Default Certificates on N2W Server.

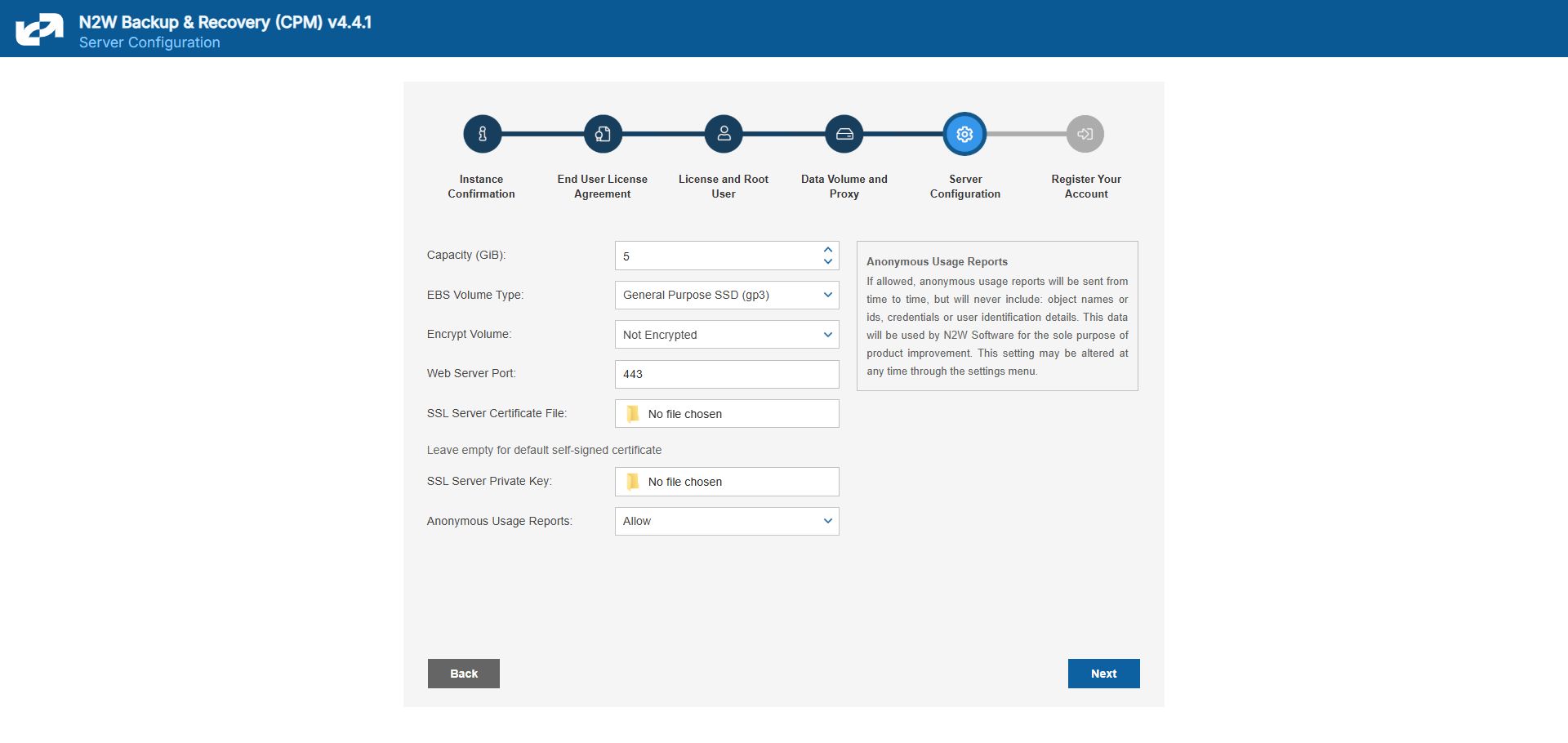

When configuring the N2W server, define the following settings:

AWS Credentials for the N2W root user.

Time zone for the server.

Whether to create a new CPM data volume or attach an existing one from a previous N2W server.

Whether to create an additional N2W server from an existing data volume during Force Recovery Mode.

Proxy settings. Configure proxy settings in case the N2W server needs to connect to the Internet via a proxy. These settings will also apply to the main application.

The port the web server will listen on. The default is 443. See section .

Whether to upload an SSL certificate and a private key for the N2W server to use. If you provide a certificate, you will also need to provide a key, which must not be protected by a passphrase.

Register the AWS account with N2W Software. This is mandatory only for free trials but is recommended for all users. It will allow N2W to provide quicker and enhanced support. Registration information is not shared.

For the configuration process to work, as well as for normal N2W operations, N2W needs to have outbound connectivity to the Internet, for the HTTPS protocol. Assuming the N2W server was launched in a VPC, it needs to have:

A public IP, or

An Elastic IP attached to it, or

Connectivity via a NAT setup, Internet Gateway, or HTTP proxy.

If an access issue occurs, verify that the:

Instance has Internet connectivity.

DNS is configured properly.

Security groups allow outbound connections for port 443 (HTTPS) or other (if you chose to use a different port).

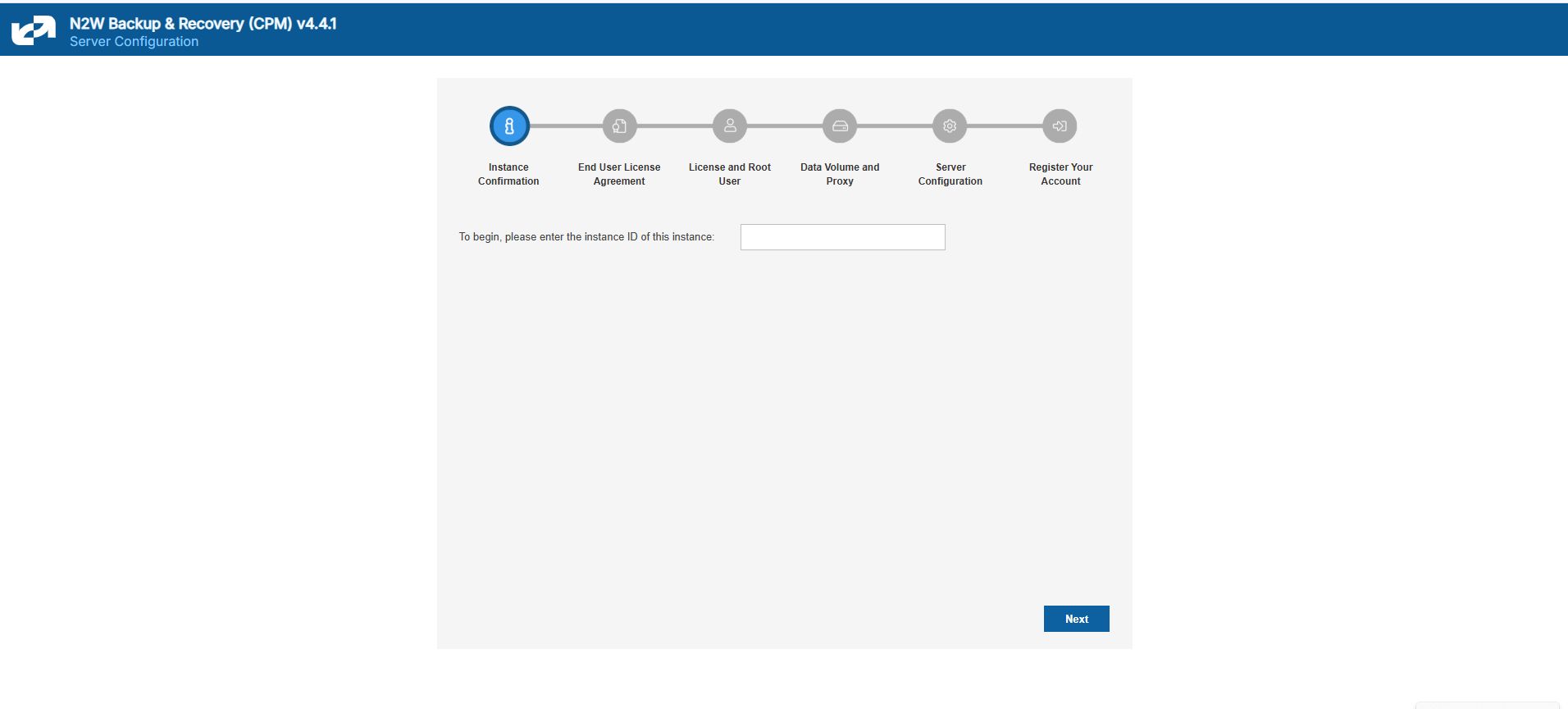

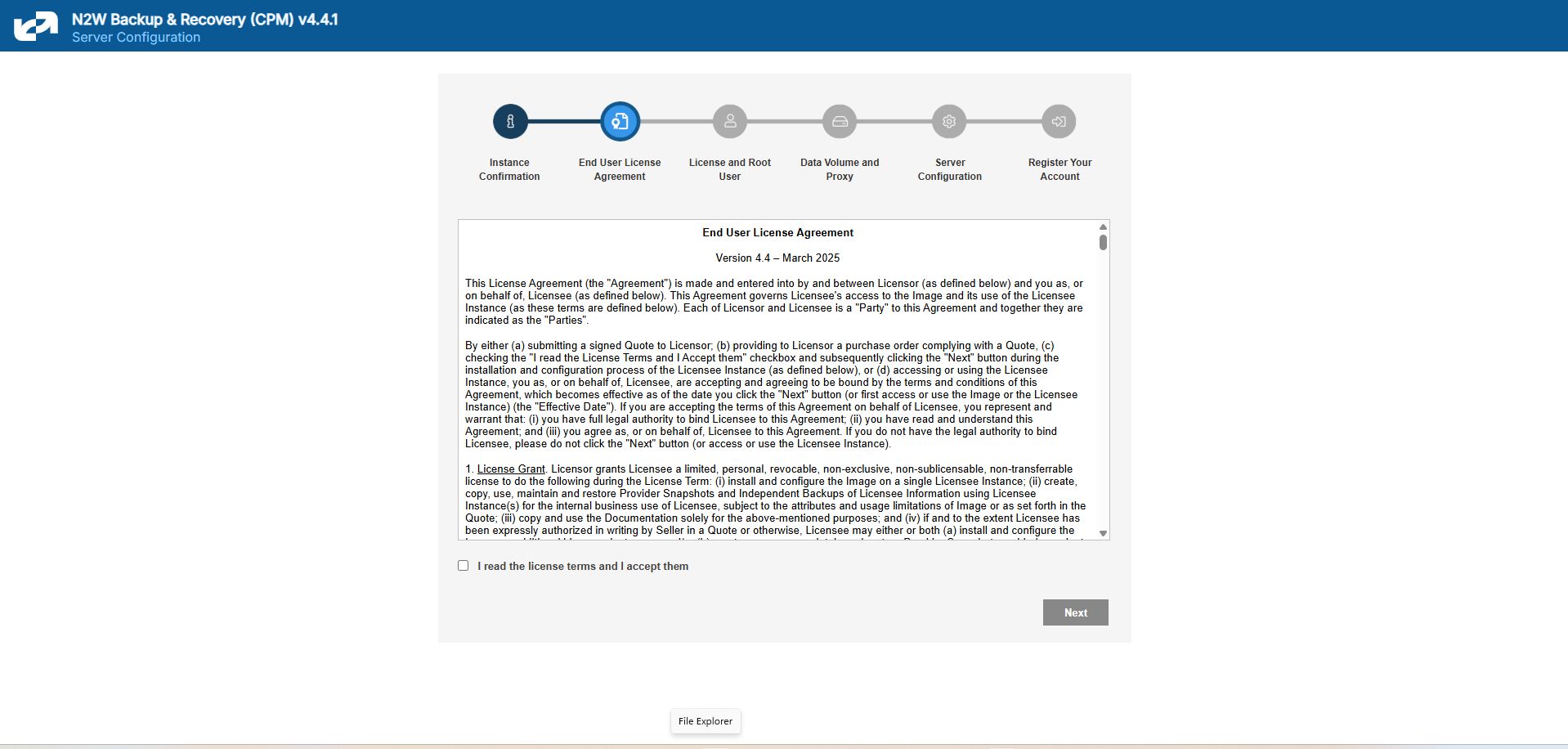

Following are the configuration steps:

Approve the end-user license agreement.

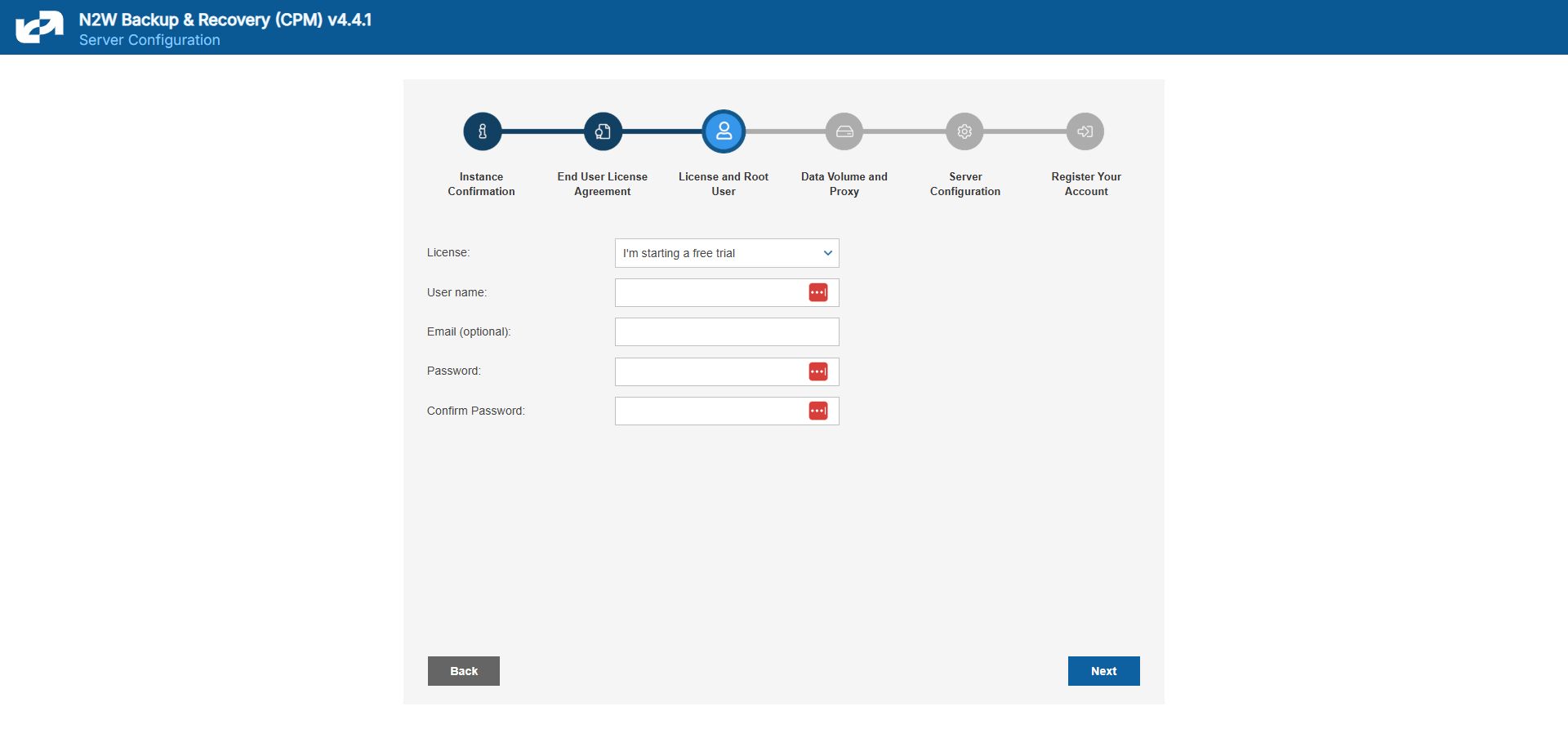

Define the root username, email, and password.

The admin/root username CAN'T be changed after setting it.

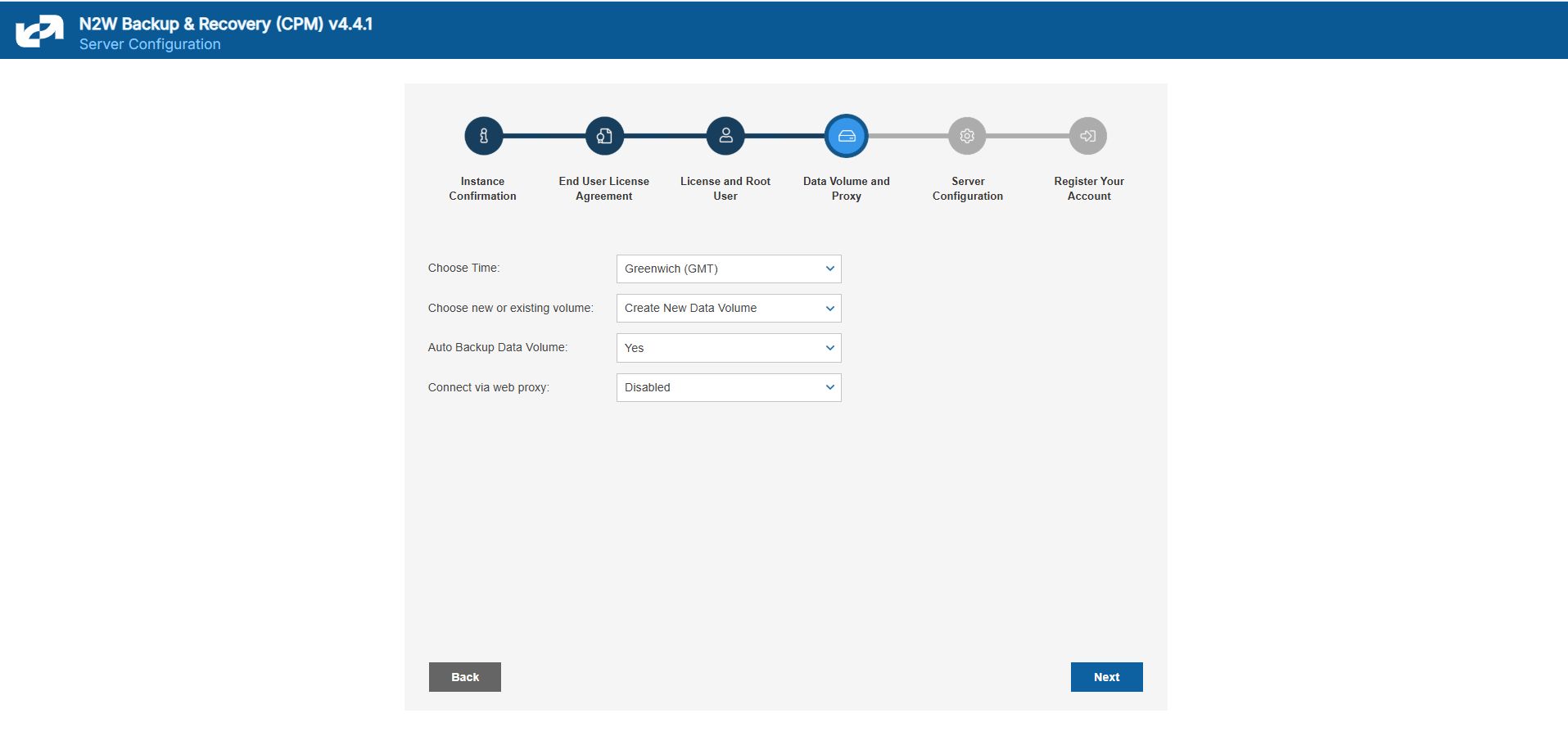

3. Define the time zone of the N2W Server and usage of data volume.

4. Fill in the rest of the information needed to complete the configuration process.

N2W Server Sizing Guidelines

Following are the recommended instance type sizes to consider when deploying your N2W server:

Up to 200 instances - T3.medium

Up to 500 instances - M5.large, C5.large, R5.large, C6i.large, R6i.large, M6i.large, C7i.large, M7i.large

Up to 1000 instances - M5.xlarge, C5.xlarge,R5.xlarge,C6i.xlarge, R6i.xlarge, M6i.xlarge, C7i.xlarge, M7i.xlarge

Up to 2000 instances - M5.2xlarge, C5.2xlarge, R5.2xlarge, C6i.2xlarge, R6i.2xlarge, M6i.2xlarge, C7i.2xlarge, M7i.2xlarge

Up to 4000 instances - M5.4xlarge, C5.4xlarge, R5.4xlarge, C6i.4xlarge, R6i.4xlarge, M6i.4xlarge, C7i.4xlarge, M7i.4xlarge

Over 4000 instances – Move additional instances to a new CPM Server

When using the S3 Backup feature, upgrade to the next available instance size listed above but not smaller than 'large'.

To initially be identified as the owner of this instance, you are required to type or paste the N2W server instance ID. This is just a security precaution.

In the next step of the configuration process, you will also be required to approve the end-user license agreement.

The End User License Agreement field is presented. Select I’m starting a free trial for a free trial. Otherwise, select the appropriate license option in the list, such as Bring Your Own License (BYOL) Edition. Alternatively, if your organization purchased a license directly from N2W Software, additional instructions are shown.

The AWS root user (IAM User) is no longer allowed to control the operation of the N2W server. A user with the Authentication credentials for N2WS Instance IAM Role is the only user allowed to install N2W, log on to the system server, and operate it. As shown below, you need to define the root username, email, and password. This is the third step in the configuration process. The email may be used when defining Amazon Simple Notification Service (SNS) based alerts. Once created, choose to automatically add this email to the SNS topic recipients.

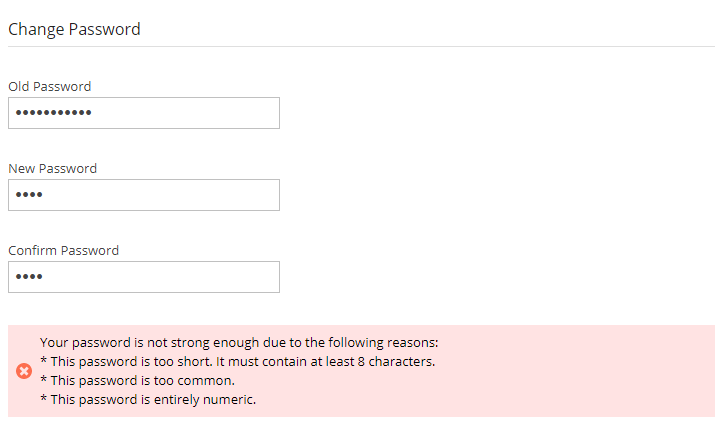

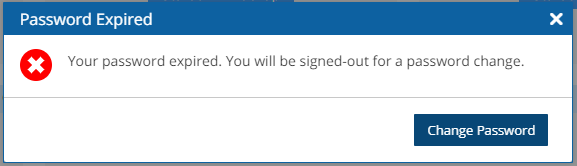

Passwords: N2W recommends that you use passwords that are difficult to guess and that are changed from time to time. For the password rules that N2W enforces, see section 16.2.3.

In the fourth step of the configuration process, you can:

Set the time zone of the N2W Server.

If using a paid license, choose whether to create a new data volume or to use an existing one. Your AWS credentials will be used for the data volume setup process.

Create an additional N2W server in recovery mode only, by choosing an existing data volume and set Force Recovery Mode.

Configure proxy settings for the N2W server. See section .

As you will see in section 4.1.3, all scheduling of backup is performed according to the local time of the N2W Server. You will see all time fields displayed by local time; however, all time fields are stored in the N2W database in UTC. This means that if you wish to change the time zone later, all scheduling will still work as before.

Here, you choose to use a new or existing data volume. Actual configuration of the volume, including encryption, will be accomplished at the next step. See section 2.1.4.1.

AWS credentials are required to create a new Elastic Block Storage (EBS) data volume if needed and to attach the volume to the N2W Server instance.

If you are using AWS Identity and Access Management (IAM) credentials that have limited permissions, these credentials need to have permissions to view EBS volumes in your account, to create new EBS volumes, and to attach volumes to instances. See section 16.3. These credentials are kept for file-level recovery later and are used only for these purposes.

If you assigned an IAM Role to the N2W Server instance, and this role includes the needed permissions, select Use Instance’s IAM Role, and then you will not be required to enter credentials

If the N2W server needs an HTTP proxy to connect to the Internet, define the proxy address, port, user, and password. The proxy settings will be kept as the default for the main application. In the N2W UI, proxy settings are made in the Proxy tab of Server Settings > General Settings.

Make sure to enable SSH connections (port 22) through your proxy.

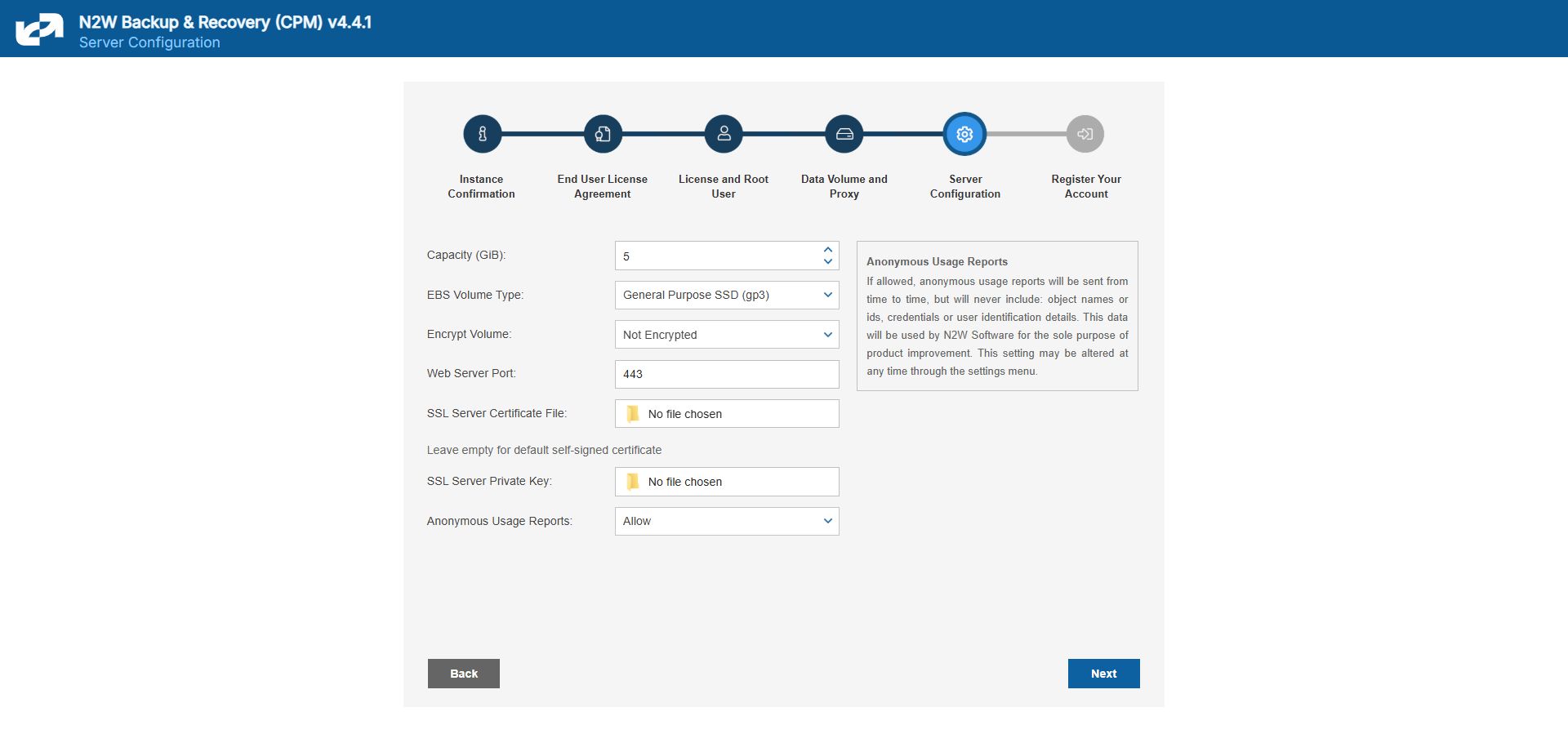

In the fifth step, you will fill in the rest of the information needed for the configuration of the data volume for the N2W Server.

When creating a new data volume, the only thing you need to define is the capacity of the created volume at this step. The default size of the data volume is 10 GiB.

The volume is going to contain the database of N2W’s data, plus any backup scripts or special configuration you choose to create for the backup of your servers. The backup itself is stored by AWS, so normally the data volume will not contain a large amount of data.

Volume Recommendations

Volume size should be at least 10 GB, which is large enough to manage roughly 50 instances and about 3 times as many EBS volumes.

If your environment is larger than 50 instances, increase the volume at about the ratio of 1 GB per 10 backed-up instances·

Volume type should be at least GP3.

For Azure, Volume Type defaults to Premium LRS.

GP3 volume types are cheaper and deliver better performance than GP2 volume types. For details, see https://docs.aws.amazon.com/emr/latest/ManagementGuide/emr-plan-storage-compare-volume-types.html

The new volume will be automatically created in the same AZ as the N2W instance. It will be named N2W Data Volume. During the configuration process, the volume will be created and attached to the instance. The N2W database will be created on it.

Select Encrypted in the Encrypt Volume drop-down list and choose a key in the Encryption Key list. You have the option to use a custom ARN.

Port 443 is the default port for the HTTPS protocol, which is used by the N2W manager. If you wish, you can configure a different port for the web server. But, keep in mind that the specified port will need to be open in the instance’s security groups for the management console to work, and for any Thin Backup Agents that will need to access it.

The final detail you can configure is an SSL certificate and private key.

If you leave them empty, the main application will continue to use the self-signed certificate that was used so far.

If you choose to upload a new certificate, you need to upload a private key as well. The key cannot be protected by a passphrase, or the application will not work.

If a corrupted SSL certificate is installed, it will prevent the N2W server from starting.

Leaving the Anonymous Usage Reports value as Allow permits N2W to send anonymous usage data to N2W Software. This data does not contain any identifying information:

No AWS account numbers or credentials.

No AWS objects or IDs like instances or volumes.

No N2W names of objects names, such as policy and schedule.

It contains only details like:

How many policies run on an N2W server

How many instances per policy

How many volumes

What the scheduling is, etc.

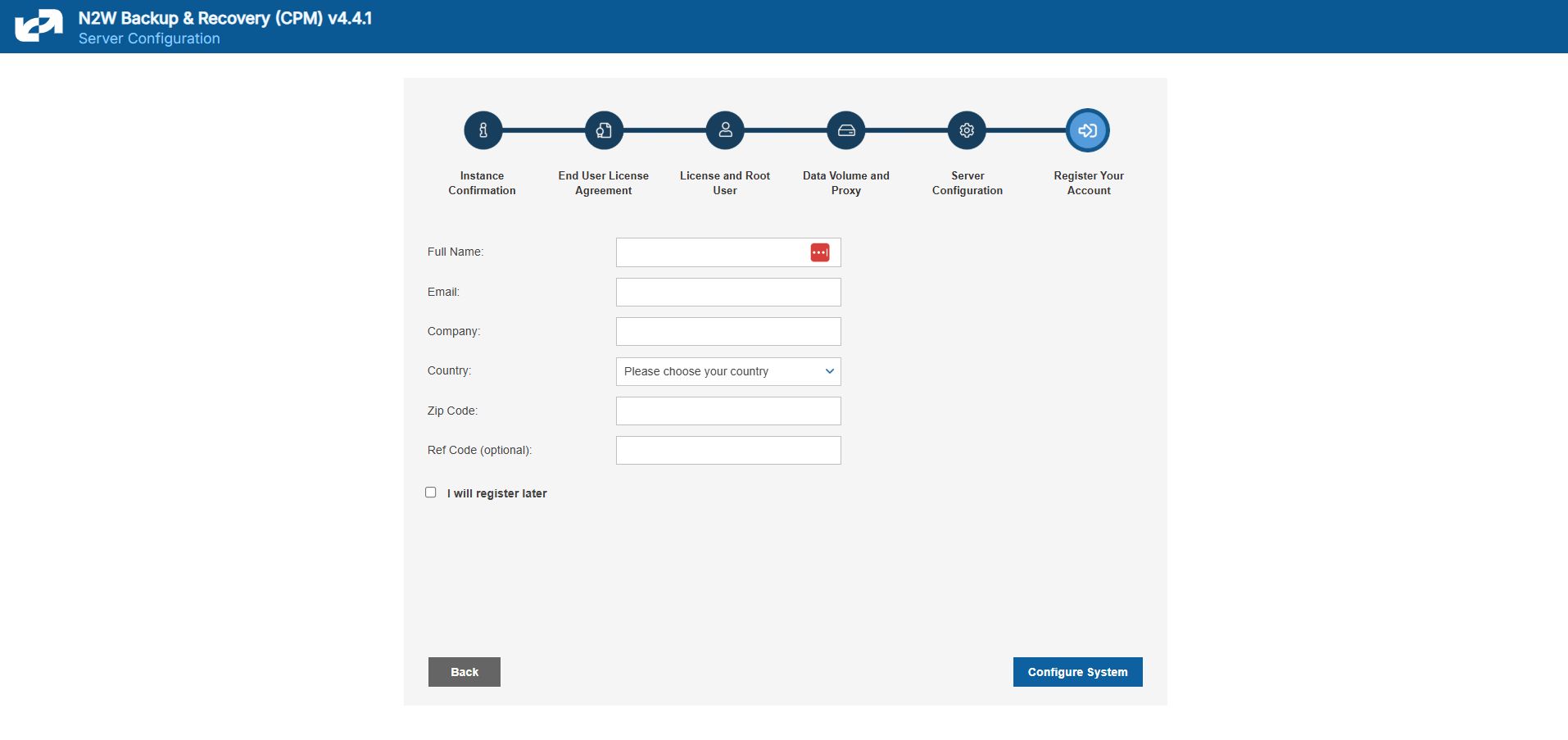

After filling in the details in the last step, you are prompted to register. This is mandatory for free trials and optional for paid products.

Select Configure System to finalize the configuration. The configuration will take between 30 seconds and 3 minutes for new volumes, and usually less for attaching existing volumes. After the configuration is complete, a ‘Configuration Successful – Starting Server …’ message appears. It will take a few seconds until you are redirected to the login screen of the N2W application.

If you are not redirected, refresh the browser manually. If you are still not redirected, reboot the N2W server via AWS Management Console, and it will come back up, configured, and running.

Most inputs you have in the configuration steps are validated when you select Next. You will get an informative message indicating what went wrong.

A less obvious problem you may encounter is if you reach the third step and get the existing volume select box with only one value in it: No Volumes found. This can arise:

If you chose to use an existing volume and there are no available EBS volumes in the N2W Server’s AZ, you will get this response. In this case, you probably did not have your existing data volume in the same AZ. To correct this:

Terminate and relaunch the N2W server instance in the correct zone and start over the configuration process, or

Take a snapshot of the data volume, and create a volume from it in the zone the server is in.

If there is a problem with the credentials you typed in, the “No Instances found” message may appear, even if you chose to create a new data volume. This usually happens if you are using invalid credentials, or if you mistyped them. To fix, go back and enter the credentials correctly.

In rare cases, you may encounter a more difficult error after you configured the server. In this case, you will usually get a clear message regarding the nature of the problem. This type of problem can occur for several reasons:

If there is a connectivity problem between the instance and the Internet (low probability).

If the AWS credentials you entered are correct, but lack the permissions to do what is needed, particularly if they were created using IAM.

If you chose an incorrect port, e.g., the SSH port which is already in use.

If you specified an invalid SSL certificate and/or private key file.

If the error occurred after completing the last configuration stage, N2W recommends that you:

Terminate the N2W server instance.

Delete the new data volume (if one was already created).

Try again with a fresh instance.

If the configuration still fails, the following message will display. If configuring a new instance does not solve the problem, contact the N2W Software Support Team. To access configuration error details, select Download Configuration Logs.

The AWS Key Management Service (KMS) allows you to securely share custom encryption keys between accounts. For details on enabling shared custom keys, see https://aws.amazon.com/blogs/security/share-custom-encryption-keys-more-securely-between-accounts-by-using-aws-key-management-service/.

The use of custom keys is required in the following cases:

Authentication of cpmuser to N2W server using a non-default certificate with a private key.

Encrypting new volumes.

Associating an account for File Level Recovery.

Authentication of IAM User.

Running scripts.

Performing Recoveries, DR, and Cross-Account activities for RDS, EC2, and EFS resources.

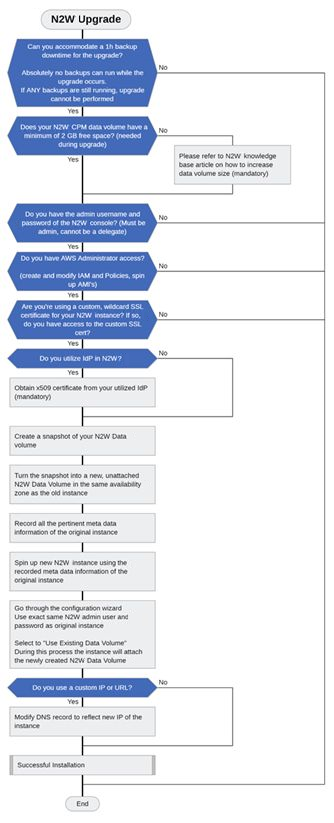

Important: Choose the right upgrade method and sequence.

There are 3 upgrade paths depending on the version from which you are upgrading:

For versions 4.2 onward, you can upgrade by installing a patch. See section .

For versions 2.6 onward, you can use Amazon Machine Images (AMI).

For versions older than 2.6, you must first upgrade to 4.2 using an Amazon Machine Image (AMI), and then upgrade from 4.2 to 4.3.0.

The following diagram shows the major steps and considerations in an N2W upgrade. The upgrade flow is the same as the installation flow with the addition of using an existing CPM data volume (section 2.2.2).

We strongly recommend that you read this entire section BEFORE starting the upgrade.

The upgrade process consists of the following phases:

Before starting the upgrade, refer to instructions specific to your current version in section 2.2.

Stop the current CPM instance.

Select the existing data volume from the snapshot to be used in the upgrade.

Configure the new version instance according to instructions in section .

Terminate the old version instance, and launch the new version as described in section .

After the upgrade, there are still a few steps to ensure a complete transition. See section .

If you have any questions or encounter issues, visit the N2W Support Center where you will find helpful resources in a variety of formats or can open a Support Ticket.

The following sections outline the steps required to upgrade to the latest N2W Backup & Recovery version.

Due to new functionality in v4.4.x, you may need to update your permission policies. If you have more than one AWS Account added to the N2W console, you will have to update the IAM Policies for each account.

For AWS: See https://n2ws.zendesk.com/hc/en-us/articles/33252616725533--4-5-0-Required-Minimum-AWS-permissions-for-N2W-operations

For Azure: See https://n2ws.zendesk.com/hc/en-us/articles/33252710830109--4-5-0-Required-Minimum-Azure-permissions-for-N2W-operations

Collecting Information Before Starting Upgrade

Following is the important information you must have ready before starting:

Verify that there are no backups, DRs, or Cleanups running or scheduled to run within the next 15-30 minutes.

If the only thing processing is an S3 archive, you are able to abort it if you want.

Have the username and password for the root/admin user ready.

If you are using a proxy in the N2W settings, write down the details.

Take a screenshot of the N2W EC2 instance network settings: IP, VPC, Subnet, Security Groups, and IAM Role and Keypair name.

Take a screenshot of the Tags if you have more than a few.

Terminate the N2W EC2 instance.

Terminating is only necessary BEFORE launching the new AMI if it is a Marketplace Subscription.

If you are using BYOL, you can keep the old server until the new upgrade is complete and tested for an easy rollback if necessary.

Take a snapshot of the N2W Data Volume. Only the Data Volume is important, as it contains all your settings, backup entries, etc.

Download the latest IAM permissions (section ) and update the IAM Policies from your role.

If you are using a custom SSL certificate, make sure you have the .CRT and .KEY files available in a place where you can easily add them during the configuration process.

The new CPM instance needs to be in the same Availability Zone as the cpmdata EBS volume.

Use the cpmdata volume created from the snapshot, and leave the original volume attached to the stopped instance.

If your data volume is very big, wait 10 minutes before starting the upgrade, as AWS is creating new volumes from snapshots. The Ready message may show before the volume is actually ready.

To upgrade/redeploy the N2W Server Instance:

About 1 minute after launching the new instance, it should in the running state. Connect to the user interface (UI) with a browser using https://[ip-of-your-new-instance].

Confirm the Instance ID of your newly launched instance.

Accept the Terms and Conditions.

Enter the username and password of the admin/root user.

The admin/root username CAN'T be changed after setting it.

5. Approve the exception to the SSL certificate.

6. Choose the time zone and select Use Existing Data Volume in step #4, “Data Volume and Proxy”.

7. Select your old data volume in the Existing CPM Data Volume list in step #5, “Server Configuration”.

8. Select Configure System in step #6, “Register Your Account”. N2W will automatically resume operations. Wait until the login mask appears.

See section 2 for complete details for the Server Configuration.

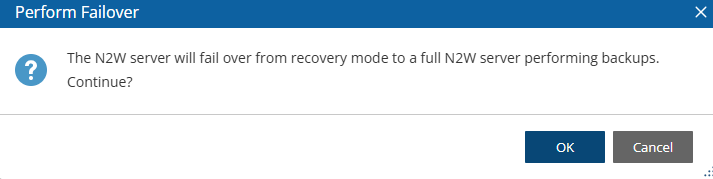

If you have a Marketplace instance, after a successful upgrade, the new CPM will automatically detect the existence of the old instance and will launch in recovery mode. You will need to terminate the old CPM and perform a failover.

Terminate the existing CPM instance.

Launch a new N2W Server instance in the same region and AZ as the old one. You can launch the instance using the Your Marketplace Software page on the AWS web site.

To determine the AZ of the new instance, launch the instance using the EC2 console rather than using the 1-click option.

Wait until the old CPM instance is in the terminated state.

5. Confirm Perform Failover prompt.

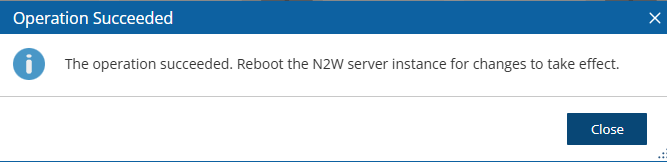

6. Wait 5 minutes for the ‘Operation Succeeded’ message.

Reboot.

The Existing data volume option is used if:

You have already run N2W and terminated the old N2W server, but now wish to continue where you stopped.

You are upgrading to new N2W releases.

You are changing some of the configuration details.

You want to configure an additional N2W server in recovery mode only. See section .

The select box for choosing the volumes will show all available EBS volumes in the same AZ as the N2W Server instance. When choosing the volumes, consider the following:

It is important to create the instance in the AZ your volume was created in the first place.

Another option is to create a snapshot from the original volume, and then create a volume from it in the AZ you require.

Although CPM data volumes typically have a special name, it is not a requirement. If you choose a volume that was not created by an N2W server for an existing data volume, the application will not work.

After upgrading:

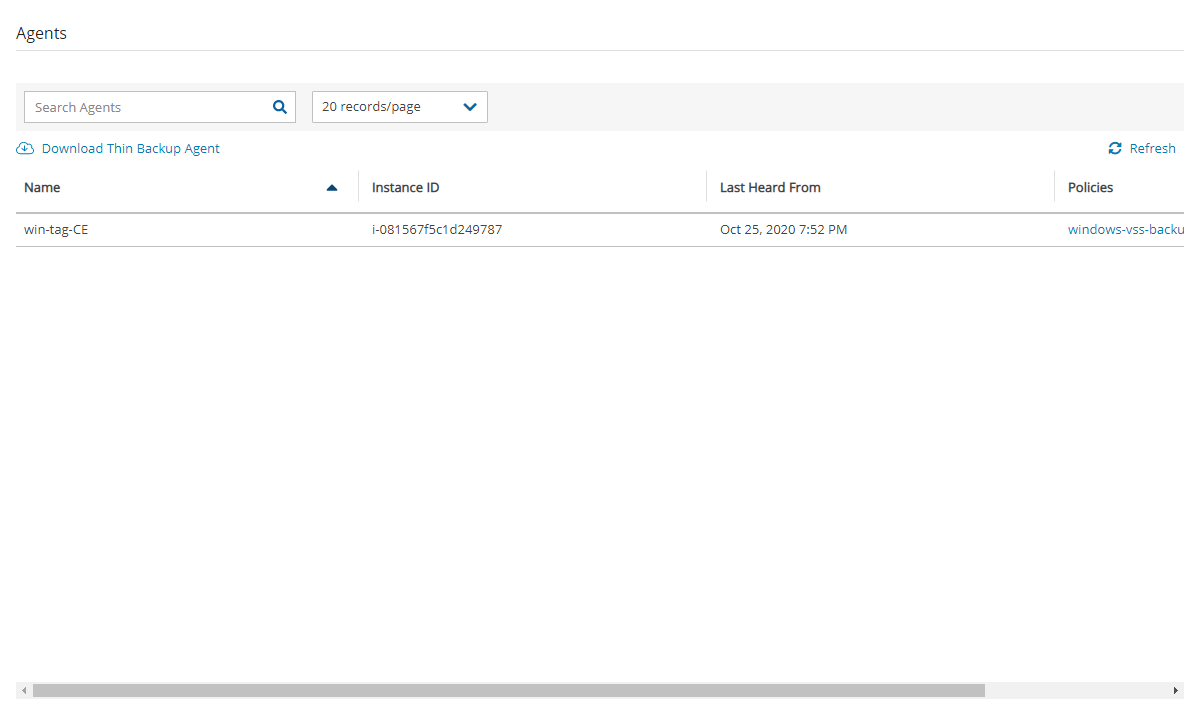

If you were using N2W Thin Backup Agents to perform app-consistent backups:

Check the Agents tab and see if “last heard from” is updated with a recent date and time.

If not, you may have to download and install the N2W Thin Backup Agent on your Windows EC2 instances.

If you were using the AWS SSM Remote Agent to perform app-consistent backups, note that the SSM Agent will not appear in the Agents tab. You will need to verify the SSM Agent separately.

If you were using backup scripts that utilize SSH, you may need to log in to the N2W Server once and run the scripts manually so that the use of the private key will be approved.

If you have more than one AWS Account added to the N2W console:

Update the IAM Policies for each Account by downloading the latest IAM permissions (section ) and updating the IAM policies for your role.

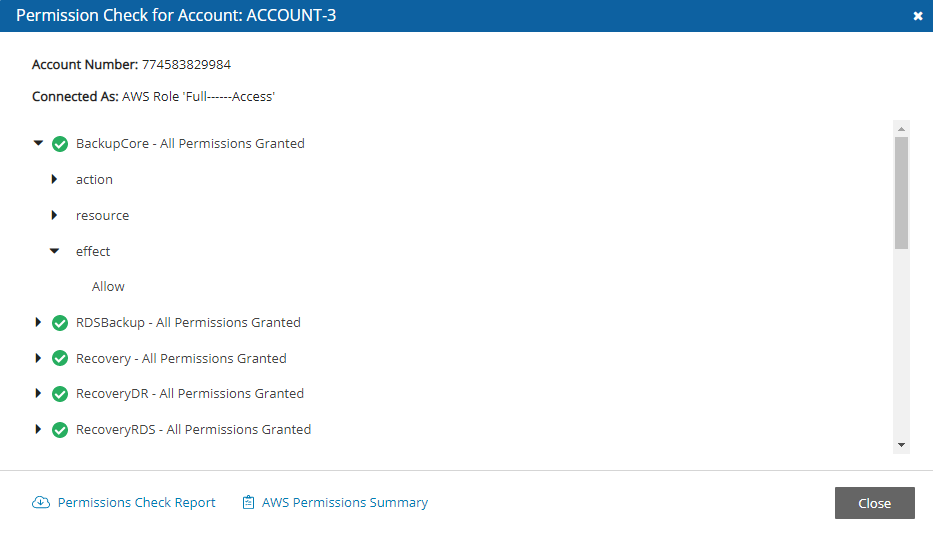

Confirm using Check AWS Permissions for each Account. See note in section about limitation.

You can configure an additional N2W server, in recovery mode only, by choosing an existing data volume:

In step 4, choose to use an existing volume and in the Force Recovery Mode, select Yes.

In step 5, in the Existing CPM Data Volume list, select the volume that holds your backup records.

The N2W server configured for recovery mode will NOT:

Perform backups.

Perform data Lifecycle Management operations.

Have Resource Control management.

Perform any scheduled operations.

Configuring N2W in silent mode is available using AWS user data, using AWS Secrets Manager, and for Azure.

Launching an EC2 instance in AWS can optionally be set with User Data. See the description of how such user data can be utilized at https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/user-data.html.

The N2W instance can also use this user data when launching.

If the string CPMCONFIG exists in the user data text, then the text following it is used for the CPM configuration.

The extraction is until the string CPMCONFIGEND or the end of the data.

The extracted text is assumed to be in .ini file format.

The extracted configuration text of the new N2W instance should start with a [SERVER] section, followed by the configuration details.

For the relevant time_zone parameter value, see .

Following is an example of the whole script:

To use AWS Secrets Manager in Silent Mode for AWS, also see 2.3.2.

Additionally, if you need the N2W server to connect to the Internet via an HTTP proxy, add a [PROXY] section:

The snapshot option does not exist in the UI. It can be used for the automation of a Disaster Recovery (DR) server recovery. Additionally, if you state a volume ID from another AZ, N2W will attempt to create a snapshot of that volume and migrate it to the AZ of the new N2W server. This option is for DR only.

You are not required to select the license terms when using the silent configuration option, since you already approved the terms when subscribing to the product on AWS Marketplace.

After executing the configuration, on the AWS Instances page, select the Tags tab. If the CPM_Silent_Configuration key value equals ‘succeeded’, then the CPM instance was successfully launched with the user data configured in silent mode.

To verify configuration user data:

In AWS, select the CPM instance.

In the right-click menu, select Instance Settings, and then select View/Change User Data.

You can keep Silent Configuration values, such as username and password, on AWS Secrets Manager. Secrets Manager can be used on any textual (not numeric or Boolean) field value in the configuration file. Secrets Manager is:

Not available for proxy settings

Available only for AWS.

The format is <silent config key>=@<Secret name>#<key in secret>@

Different secrets may be used within a configuration file, such as a user's password from another secret.

Silent configuration for Azure can only be executed programmatically using the Azure CLI, not through the Azure Portal.

The Azure Portal has a limitation whereby a User Managed Identity is not associated with a Virtual Machine until after its creation.

An existing Managed Identity with predefined permissions must be assigned to the Virtual Machine immediately upon its creation in order to perform various operations.

To deploy N2W using the Azure CLI:

In the Azure Portal, accept the license terms for N2W Backup & Recovery for Azure one time.

Select the Get Started link.

In the Configure Programmatic Deployment screen, Enable the Subscription for the image you are deploying.

Create a text file containing the following configuration parameters, including the Managed Identity Client ID, in the indented format shown:

5. Pass the file to the Azure CLI VM creation command as follows:

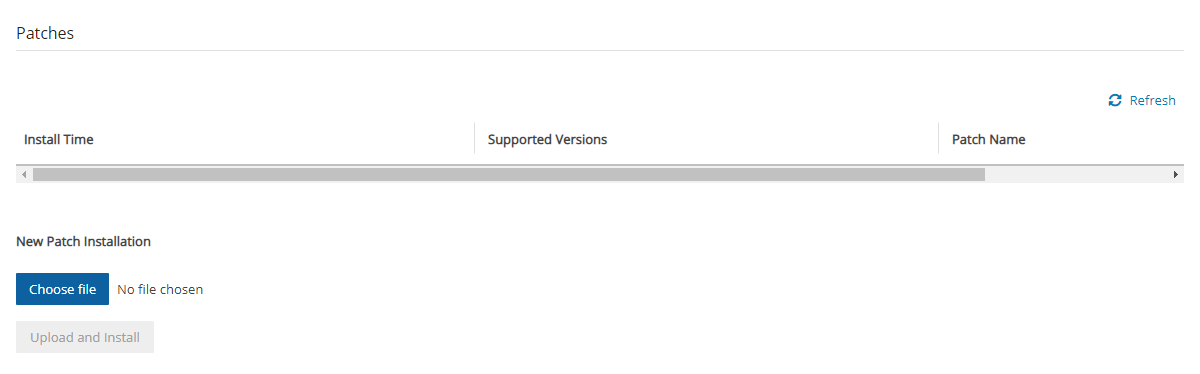

To keep your N2W running at its highest efficiency, N2W will occasionally send you notification of the existence of a patch through an Announcement or an email. Download the patch according to the notification instructions.

To install patches:

In the top right toolbar, select Server Settings and then select Patches.

Select Choose file to select the patch file.

Select Upload and Install.

[any-script-before-cpmconfig]

CPMCONFIG

[SERVER]

user=<username for the N2W user>

password=<password>

volume_option=<new or existing>

volume_size=<in GB, used only for the new volume option>

volume_id=<Volume ID for the data volume, used only in the existing volume option>

volume_type=<set your storage performance and cost.

The default is “gp3”. It can be set to “io1”, "io2", “gp2” or "gp3">

snapshot_id=<snapshot ID to create the data volume from, used only with the existing volume option, and only if volume_id is not present>

encryption_key=<encrypt user-data volume by setting the ARN of the

KMS key. used only for the new volume option>

time_zone=<set N2W server’s local time.

The default timezone is GMT. See Appendix C for available time zones.>

allow_anonymous_reports=<send anonymous usage data to N2W Software.

The default is “False”>

force_recovery_mode=<allow additional N2W server to perform recovery

operations only. The default is “False”. If it set to “True” - it

requires volume_option=existing>

activation_key=<Activation Key>

CPMCONFIGEND

[any-script-after-cpmconfig][PROXY]

proxy_server=<address of the proxy server>

proxy_port=<proxy port>

proxy_user=<user to authenticate, if needed>

proxy_password=<password to authenticate, if needed>CPMCONFIG

[SERVER]

user=@<secret_name>#<secret_name_user_key>@

password=@<secret_name>#<secret_name_pw_key>@

volume_option=existing

volume_id=@<secret_name>#<secret_name_vol_key>@

volume_type=gp2

time_zone=Europe/Berlin

CPMCONFIGENDuser=@CPMCredentials#N2W_User2@

password=@CPMAlternativeCredentials#N2W_Password@ [SERVER]

user=root

password=rootroot

disk_size=30

disk_option=new

time_zone = Asia/Jerusalem

allow_anonymous_reports=True

managed_identity_client_id=66d64e6d-e735-47e0-a3a5-f6b5fbbdbd26

path: /etc/cpm/cpm_silent_config.cfgaz vm create --resource-group my-resource-group --name my-n2w-backup-and-recovery-vm --image n2wsoftwareinc1657117813969:n2ws_backup_and_recovery:byol_edition_and_free_trial:4.1.2 --ssh-key-name my-ssh-key --admin-username cpmuser --assign-identity /subscriptions/<my-subscription-id>/resourceGroups/my-resource-group/providers/Microsoft.ManagedIdentity/userAssignedIdentities/my-n2w-vm-identity --nsg my-n2w-nsg --public-ip-sku <basic|standard> --custom-data cloud-init.txt

None of the following procedures should be attempted when Backups/DR/Cleaning/S3 Copy are running. Linux Knowledge is required.

The N2W server comes with a default self-signed HTTPS certificate that will show as ‘Not Secure’ in the browser. You can secure the certificate and reach the UI by either:

Selecting the Advanced button in the ‘Your connection is not private” message, or

Adding an exception to the browser. See the Appendix B screenshot in the N2W Quick Start Guide at

If you purchased an HTTPS certificate from a certificate authority, you can replace the default certificate with the new one as follows:

Connect to the N2W instance over SSH.

Use ‘sudo’ to reach the certificate folder, keeping the ownership and permissions of the files (‘cp’).

Go to /opt/n2wsoftware/cert

4. In the folder, replace cpm_server.crt and cpm_server.key with new files having the same names.

5. If you are using MobaXterm, you can drag/drop files to the SSH session, and then copy the files to the correct folder.

6. After replacing the files, restart Apache: sudo service apache2 restart

For full details, see:

To test the certificate before deploying to production:

The user can launch a new N2W trial instance to see if the new certificate works there.

If there are any issues, you can restore/recreate the original default certificate as follows:

Only perform these steps if you know how to use SSH and Linux commands.

Connect to the N2W instance over SSH using a tool such as PuTTY or MobaXterm.

Use ‘sudo’ to reach the certificate folder, keeping the ownership and permissions of the files: sudo su

Go to /opt/n2wsoftware/cert:

4. Move the existing .crt and .key files to a new name:

5. Stop/Start the instance.

For full details, see:

This section explains a few key concepts to help you use N2W correctly.

By default, snapshots taken using N2W are Crash-consistent. When you back up an EC2 instance at a certain time, and later want to restore this instance from backup, it will start the same as a physical machine booting after a power outage. The file system and any other applications using EBS volumes were not prepared or even aware that a backup was taking place, so they may have been in the middle of an operation or transaction.

Being in the middle of a transaction implies that this backup will not be consistent, but this is not the case. Most modern applications that deal with important business data are built for robustness. A modern database, be it MySQL, PostgreSQL, Oracle, or SQL Server, has transaction logs. Transaction logs are kept separately from the data itself, and you can always play the logs to get to a specific consistent point in time. A database can start after a crash and use transaction logs to get to the most recent consistent state. NTFS in Windows and EXT3 in Linux have implemented journaling, which is not unlike transaction logs in databases.

Learn how to set the time zone for a server.

The following example is the CPMCONFIG time_zone parameter for Israel:

To obtain the list of time zones or to set the time zone:

SSH into the CPM instance using the logon cpmuser and the instance’s private key.

During application-consistent backups, any application may be informed about the backup progress. The application can then prepare, freeze, and thaw in minimal-required time to perform operations to make sure the actual data on disk is consistent before the backup starts, making minimal changes during backup time (backup mode) and returning to full-scale operation as soon as possible.

There is also one more function that application-consistent backups perform especially for databases. Databases keep transaction logs which occasionally need to be deleted to recover storage space. This operation is called log truncation. When can transaction logs be deleted without impairing the robustness of the database? Probably after you make sure you have a successful backup of the database. In many cases, it is up to the backup software to notify the database it can truncate its transaction logs.

When taking snapshots, the point in time is the exact time that the snapshot started. The content of the snapshot reflects the exact state of the disk at that point in time, regardless of how long it took to complete the snapshot.

The type of backup to choose depends on your needs and limitations. Every approach has its pros and cons:

Pros:

Does not require writing any scripts.

Does not require installing agents in Windows Servers.

Does not affect the operation and performance of your instances and applications.

Fastest.

Cons:

Does not guarantee a consistent state of your applications.

Does not guarantee the exact point in time across multiple volumes/disks.

No way to automatically truncate database transaction logs after backup.

Pros:

Prepares the application for backup and therefore achieves a consistent state.

Can ensure one exact point in time across multiple volumes/disks.

Can truncate database transaction logs automatically.

Cons:

May require writing and maintaining backup scripts.

Requires installing an N2W Thin Backup Agent or the AWS SSM Agent for Windows Servers.

May slightly affect the performance of your application, especially for the freezing/flushing phase.

To obtain a list of all time zones, type: sudo cpm-set-timezone

To set the time zone, type: sudo cpm-set-timezone <new-time-zone>

For example, to set the time zone to 'New York', type:

CPMCONFIG

[SERVER]

user=demo

password=1

volume_option=new

time_zone=Asia/Jerusalemsudo cpm-set-timezone America/New_Yorkcd /opt/n2wsoftwar/certcd /opt/n2wsoftwar/certmv cpm_server.crt backup_cpm_server.crt

mv cpm_server.key backup_cpm_server.key

In this section, you will learn how to configure agents.

N2W allows configuring remote and local agents from the UI. See section 6.1.2.

The configuration in the text box needs to be in ‘INI’ format.

According to the section header, N2W will pass the key-value pairs to the appropriate agents.

Each agent writes the set of key-value pairs it receives for a section to its configuration file and restarts to reload the configuration.

To configure agents:

Select Server Settings > Agents Configuration.

Write the configuration in the text box with the section header followed by its key-pair, as shown in the sample rules below.

Select Publish.

The following sample rules show how to configure relevant agents:

Pass configuration to all remote agents of a given policy. The following will pass the key-value max_seconds_to_wait_for_vss=100 to all remote agents that belong to the policy by the name ‘p1’:

Pass configuration to a specific remote agent. The following will pass the key-value max_seconds_to_wait_for_vss=100 to the remote agent whose AWS instance ID is ‘agent_id ‘:

Pass configuration to all remote agents. The following will pass the key-value max_seconds_to_wait_for_policy=600 to all remote agents:

Pass configuration to a local agent. The following will pass the key-value max_seconds_to_wait_for_policy=600 to the local agent:

One or more instances of all of the above can be pasted together to the text box in the Agent Configuration screen. On Publish, N2W iterates over all sections and passes the relevant configuration to each agent.

With cross-account backup and recovery, you can easily copy backups between accounts as part of the disaster recovery process.

Available only in Standard, Advanced, and Enterprise Editions, N2W's cross-account functionality allows you to automatically copy snapshots between AWS accounts as part of the DR module. With cross-region DR, you can copy snapshots between regions as well as between accounts and any combination of both. In addition, you can recover resources (e.g., EC2 instances) to a different AWS account even if you did not copy the snapshots to that account. This cross-account functionality is important for security reasons.

The ability to copy snapshots between regions can prove crucial if your AWS credentials have been compromised and there is a risk of deletion of your production data as well as your snapshot data. N2W utilizes the snapshot share option in AWS to enable copying them across accounts. Cross-account functionality is currently supported only for EC2 instances, EBS volumes, and RDS instances, including Aurora.

Cross-account functionality is enabled for encrypted EBS volumes and instances with encrypted EBS volumes and RDS databases.

Learn how to reset a root password or multi-factor authentication.

The reset method depends on whether you have SSH access to the N2W instance and what is your version of N2W:

If you have SSH access to the N2W instance and are running version 4.2 and above, see section .

If you do not have SSH access to the instance or are running an older version, see section .

FSx types need to be encrypted with a custom key.

Supported cross-region FSx types are Lustre, Windows, and OpenZFS.

Supported cross-account FSx types are Lustre (Persistent, HDD), Windows, and OpenZFS

Backup and DR vaults need a custom key.

Users will need to share the encrypted key used for the encryption of the volumes or instances to the other account as N2W will not do it.

In addition, N2W expects to find a key in the target account with the same alias as the original key (or just uses the default key).

For information on sharing encryption keys between different accounts, see https://n2ws.zendesk.com/hc/en-us/articles/28815492111005-Use-custom-KMS-encryption-keys-for-cross-region-cross-account-DR

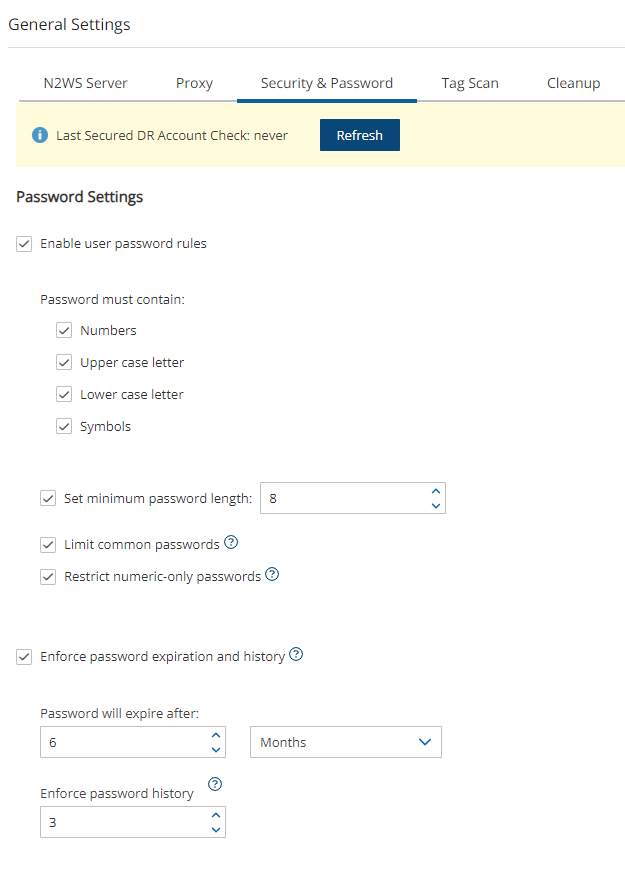

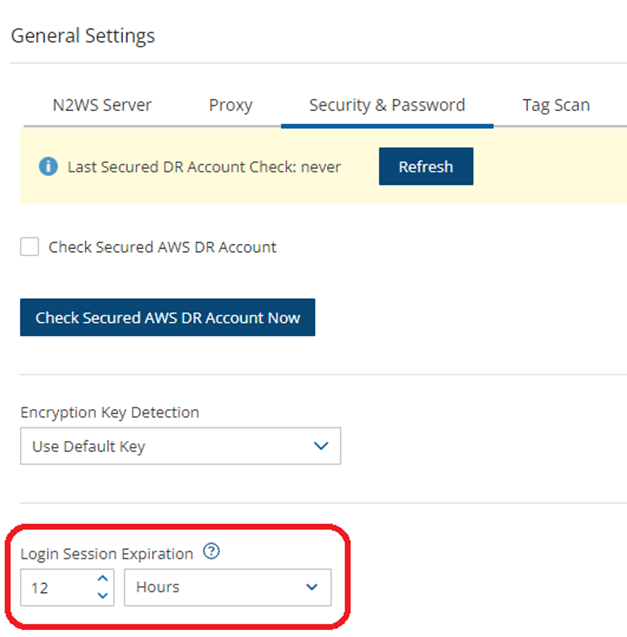

If a matching encryption key is not found with an alias or with custom tags, the behaviour of the backup depends on the setting in the Encryption Key Detection list in the Security & Password tab of the General Settings screen:

Use Default Key – If the encryption key is not matched, the default encryption key is used.

Strict – DR encryption key must match, either with an alias or a custom tag.

Use Default Key & Alert – Use the default key and send an alert.

N2W can support a DR scheme where a special AWS account is used only for snapshot data. This account’s credentials are not shared with anyone and used only to copy snapshots. The IAM credentials used in N2W can have limited permissions that do not allow snapshot deletion.

N2W will tag outdated snapshots instead of deleting them, allowing an authorized user to delete them separately using the EC2 console or a script. The tag cpm_deleted will have a value of ‘CPM deleted this snapshot (<time-of-deletion>)’. Also, you may choose to keep the snapshots only in the vault account and not in their original account. This will allow you to save storage costs and utilize the cross-recovery capability to recover resources from the vault account back to the original one.

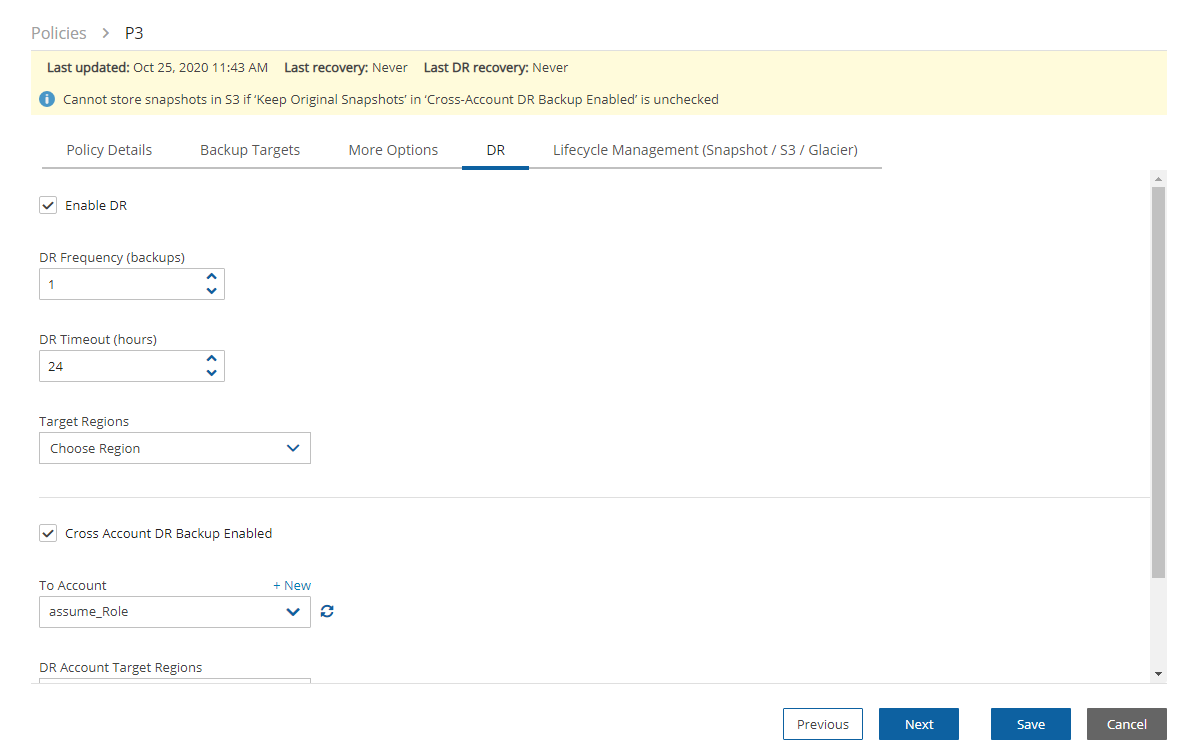

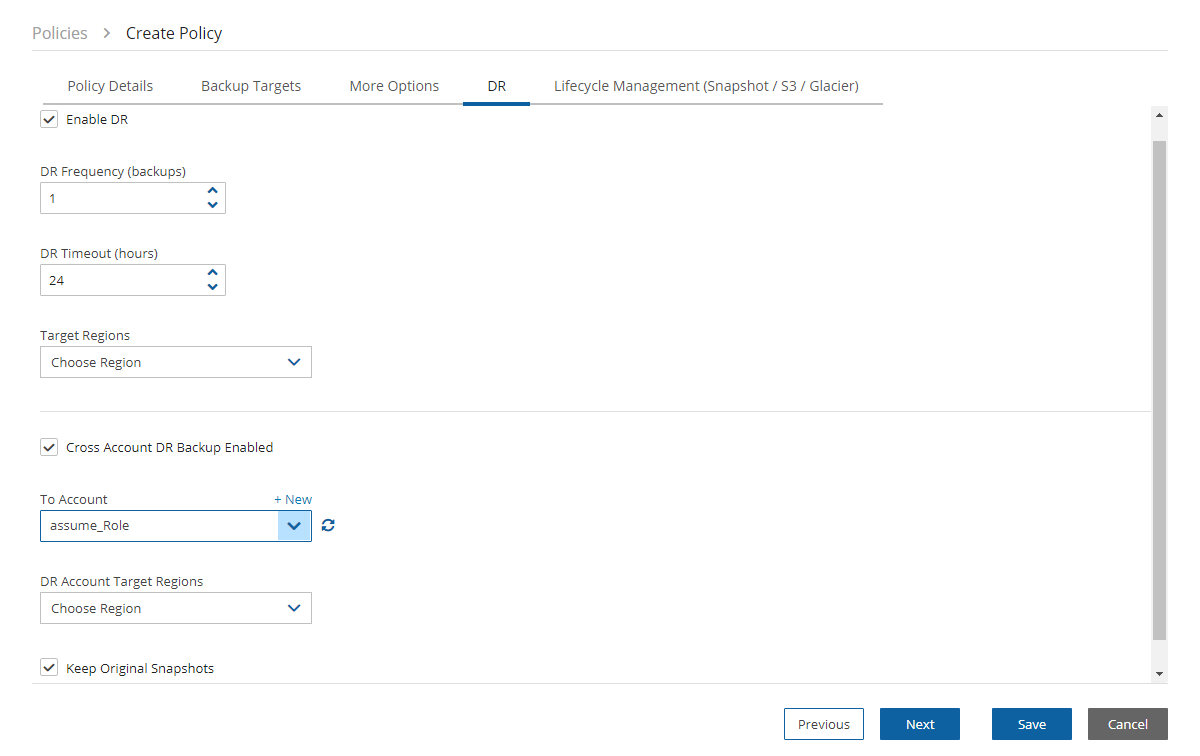

Once you have created an account with the Account Type DR, you can configure cross-account DR from the DR tab of a policy.

Cross-account fields will be available only if your N2W is licensed for cross-account functionality. See the pricing page on our website to see which N2W editions include cross-account backup and recovery.

Once you select Cross-Account DR Backup Enabled, other fields become visible:

To Account – Which account to copy the snapshots to. This account needs to have been defined as a DR Account Type in the Accounts screen.

DR Account Target Regions – Which region or regions you want to copy the snapshots of the policy to. To include all Target Regions selected for backup, select Original in the list. Select additional regions as needed.

Keep Original Snapshots – Enabled by default, the original snapshot from the source region will be kept. If disabled, once the snapshot is copied to the DR account, it will be deleted from the source region.

Keep Original Snapshots must be enabled for Copy to S3 for Cross-Account DR Backup.

N2W performs clean-up on backup policies and deletes backups and snapshots that are out of the retention window, according to the policy’s definition. By default, N2W will clean up snapshots copied to other accounts as well. However, if you do not wish for N2W to clean up, because you want to provide IAM credentials that are limited and cannot delete data, you have that option. If you defined the DR account with Allow Deleting Snapshots set as False, N2W will not try to delete snapshots in the DR account. It will rather flag a snapshot for subsequent deletion by adding a tag to the snapshot called cpm_deleted. The tag value will contain the time when the snapshot was flagged for deletion by N2W.

When using this option, occasionally make sure that these snapshots are actually deleted. You can either run a script on a schedule, with proper permissions or make it delete all snapshots with the tag cpm_deleted. Or, using the EC2 console, filter snapshots by the tag name and delete them.

If you configure the backup policy to copy snapshots across accounts as well as across regions, be aware of how the increased number of copies might affect your AWS costs.

Cross-account with cross-region DR with AMIs is supported.

AWS limits RDS snapshot cross-account copy to the DR account in the original region. To overcome this limitation, N2W performs two snapshot copies during the DR process:

Copy from 'Backup account - origin region' to ‘DR account - origin region'.

Copy from 'DR account - origin region' to ‘DR account - DR region'.

To ensure incremental snapshot copies across regions, enable Keep Original Snapshots on the Policy DR tab. If ‘Keep Original Snapshots’ is not enabled, then the cross-region copies will be full copies of the database.

In most usage scenarios, it is more cost efficient to enable Keep Original Snapshots. Incremental copies will use less space in the aggregate, reduce cross-region data transfer costs, and reduce Recovery Time Objective.

If you have cross-account functionality enabled in your N2W license, and even if you configured N2Wto copy snapshots between accounts, you can recover across accounts. This is already mentioned in Recovery section 10. You need to choose which account to recover the resource (EC2 instance, EBS volume, or RDS database) to.

Only account type DR may be the target of a cross-account recovery.

When copying snapshots between accounts and not keeping the original snapshots, you will also have the option to restore the instance/volume to the original account. N2W will utilize the AWS share snapshot option to enable recovering resources across accounts.

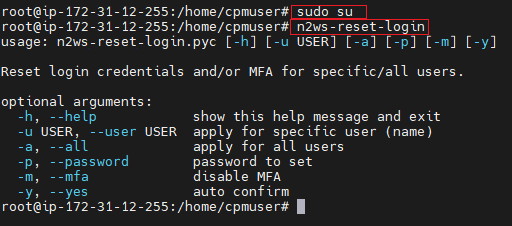

N2W Login Reset Utility n2ws-reset-login:

To reset login credentials and/or MFA for specific or all users:

Connect to N2W instance via SSH with user 'cpmuser' and your SSH key.

Switch to root: ‘sudo su’.

To see options for using the utility to reset the password or MFA, type ‘n2ws-reset-login’.

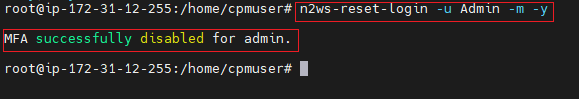

4. Disable the root user MFA without changing the password:

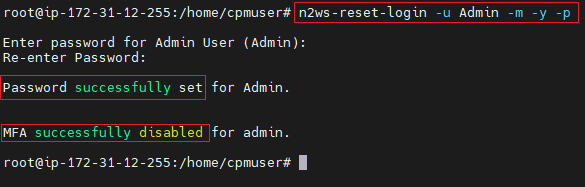

5. Disable the root user MFA and reset the password:

6. Enter password and confirmation entry.

7. Reset MFA if relevant.

If you don’t have SSH access, perhaps because the key is lost, or if you just need to recover your password and disable the MFA, redeploy the instance as follows:

If you know the root/admin username:

Make sure there are no backups or DR in progress.

Follow the upgrade procedure in the N2W User Guide at https://docs.n2ws.com/user-guide/#1-4-upgrading-n-2-ws.

When configuring the new instance, use the old username of the root/admin (important!) and the new password.

After the new CPM is launched, apply any necessary patches to make sure it’s up-to-date with the latest fixes and features: https://support.n2ws.com/portal/en/kb/articles/release-notes-for-the-latest-v4-1-x-cpm-release

If you don’t know the root/admin username:

Make sure there are no backups or DR in progress.

Follow the upgrade procedure in the N2W User Guide at https://docs.n2ws.com/user-guide/#1-4-upgrading-n-2-ws.

During the “upgrade” process, type the username you think it is and the new password.

If you typed the wrong root username, N2W will assume you forgot it and will create a file on the server, '/tmp/username_reminder', containing the old username.

To view this file, connect to the N2W server using SSH as ‘cpmuser’.

Now you will be able to use the older username with the new password.

After the new CPM is launched, apply any necessary patches to make sure it’s up-to-date with the latest fixes and features:

For further information, see https://support.n2ws.com/portal/en/kb/articles/how-to-reset-the-password-for-the-root-admin-cpm-user

Wasabi Storage is an independent third-party provider of storage account services. Wasabi storage is compatible with Amazon S3 object storage and is available for use through N2W. Storing snapshots with Wasabi instead of S3 allows customers to save on their long-term storage costs.

Because Wasabi is an external cloud storage provider, customers should be aware that Data Transfer charges to the Internet will be applied by their cloud provider for data sent to Wasabi.

A Wasabi repository is based on a bucket in the Wasabi system. Wasabi repositories can be used to store snapshots for both AWS and Azure policies. You can cross-cloud backups by going from N2W on AWS to Wasabi or from N2W on Azure to Wasabi.

Make sure that your license supports Wasabi.

Enable Wasabi Cloud as one of your UI defaults in N2W Server Settings > General Settings > User UI Default.

Compliance-only Immutable backups are supported for Wasabi repositories.

Authentication to Wasabi using user-specified credentials is required first for N2W to access a Wasabi bucket.

Authentication of Wasabi accounts is by Access Key ID and Secret Access Key only.

Create a Wasabi account in N2W with the required credentials.

Create a Wasabi repository in any bucket accessible to the specified account. See section .

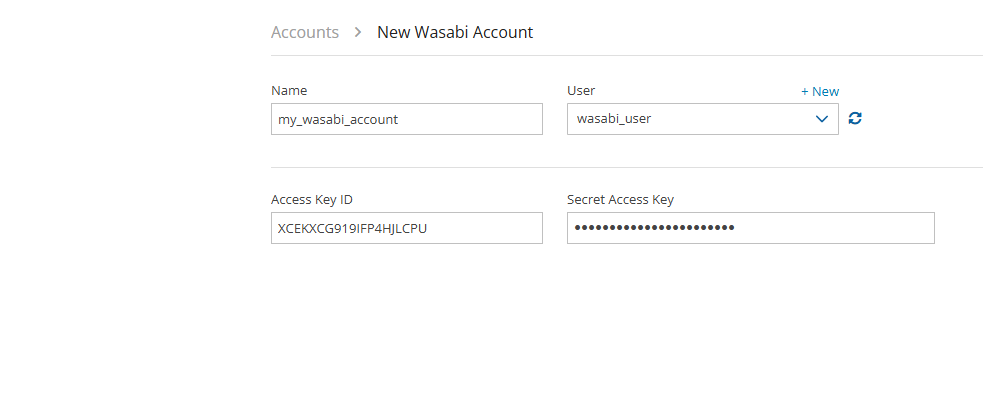

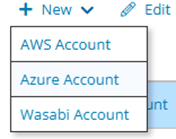

To create a Wasabi account:

Log on to N2W using the root username and password used during the N2W configuration.

Select the Accounts tab.

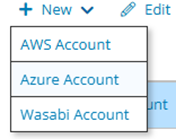

In the New menu, select Wasabi Account..

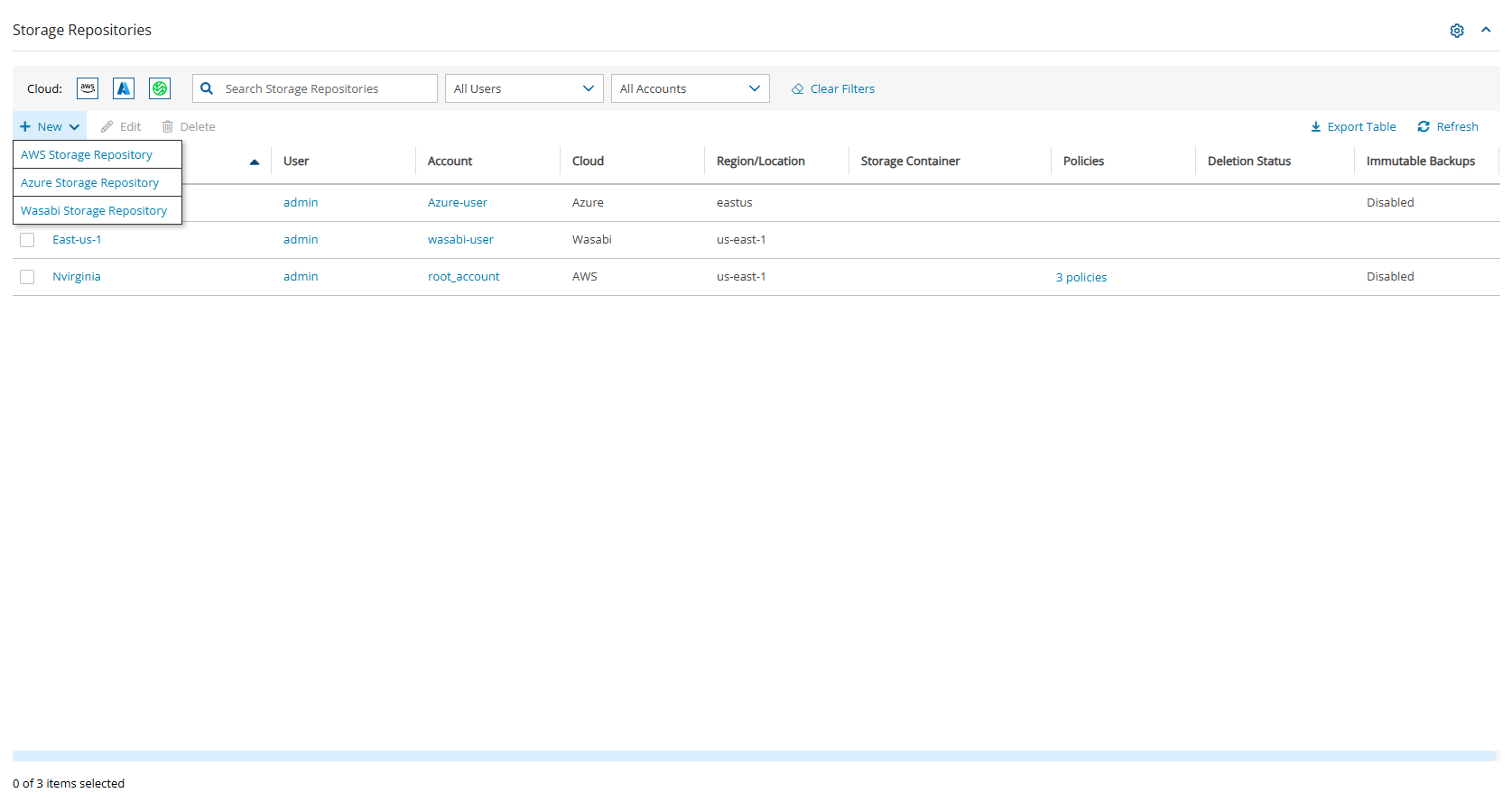

Wasabi repositories are where backups of SQL servers are stored. Wasabi repositories can also be used to store disk snapshots via a Lifecycle policy or serve as cross-cloud storage for AWS volume snapshots via a Lifecycle policy. For further details, see section

A single Wasabi container can have multiple repositories.

Wasabi buckets may not be versioned.

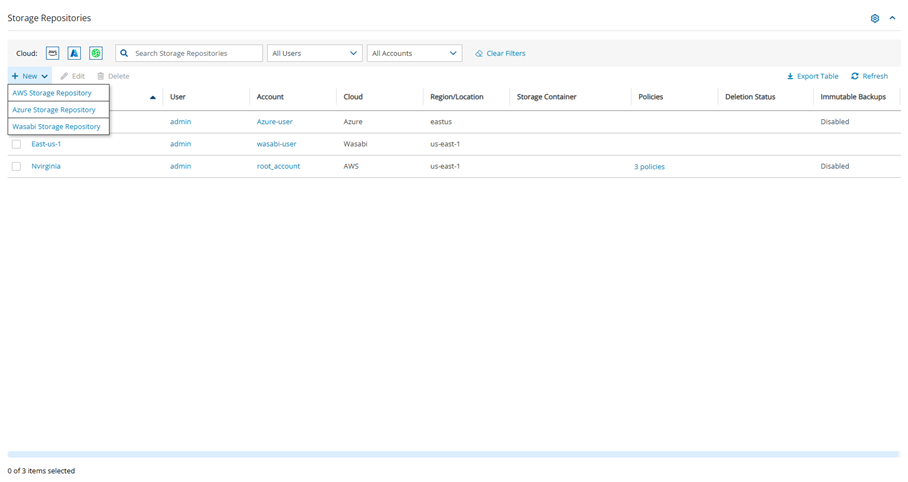

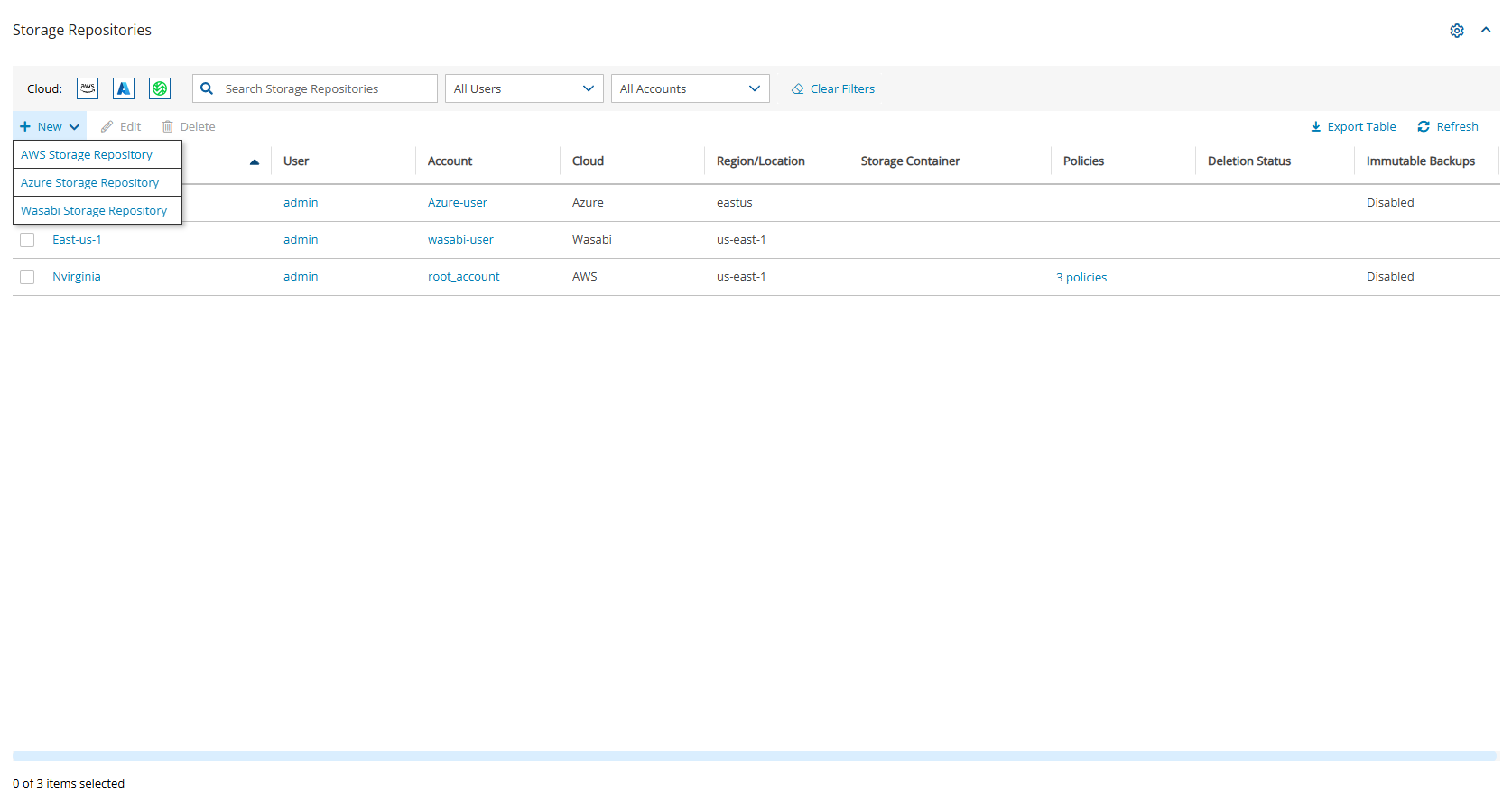

In N2W, select the Storage Repositories tab.

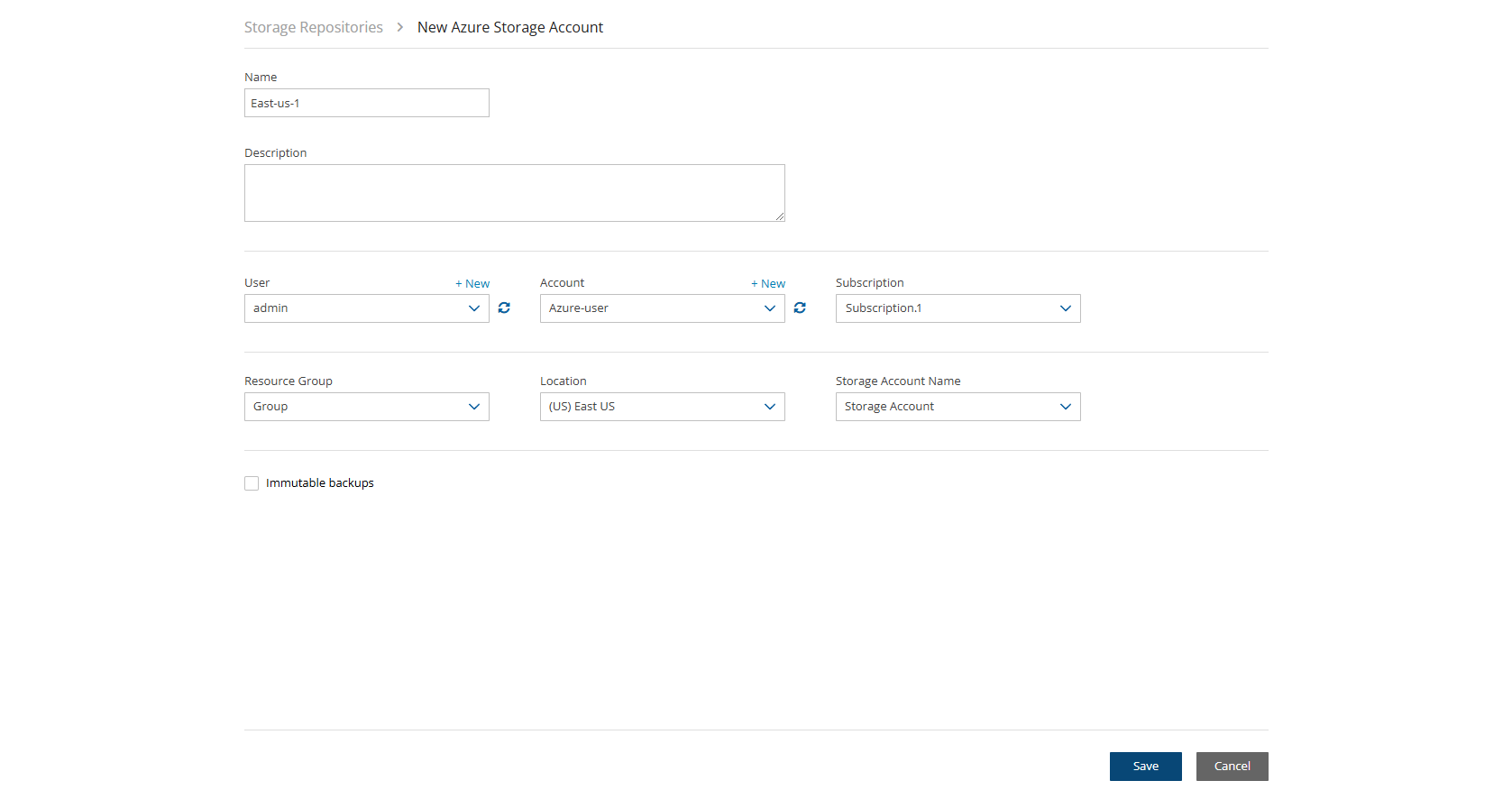

In the New menu, select Wasabi Storage Repository.

In the Storage Repository screen, complete the following fields, and then select Save.

Do not rename this bucket after selection for the Repository as the copy will fail.

f. Immutable backups (Using Compliance Lock) - Select to enable immutable backups. It will not be possible to delete snapshots before their retention expiration dates in an enabled repository.

You can delete all snapshots copied to a specific Wasabi repository.

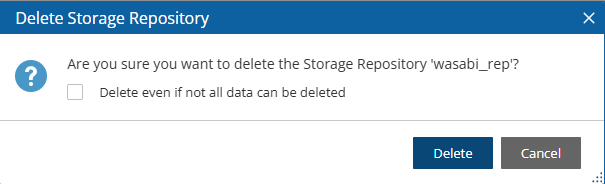

Deleting a repository is not possible when the repository is used by a policy. You must change the policy’s repository to a different one before you can delete the target repository.

To delete a Wasabi repository:

Select the Storage Repositories tab.

Select the repository to delete.

Select Delete, and confirm.

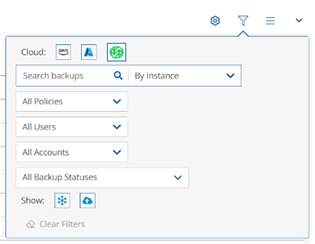

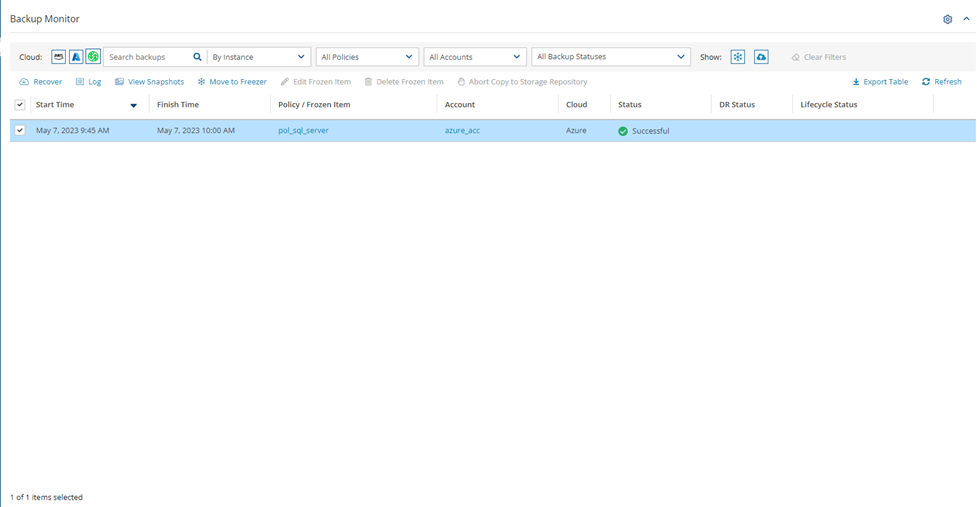

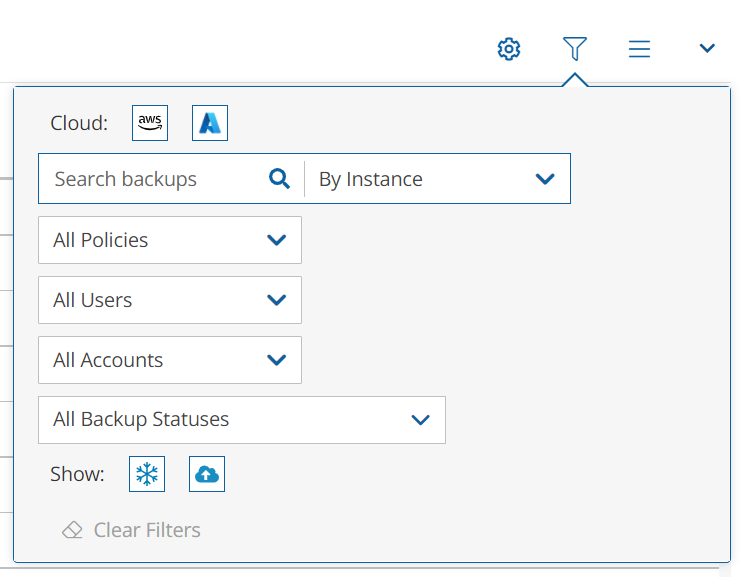

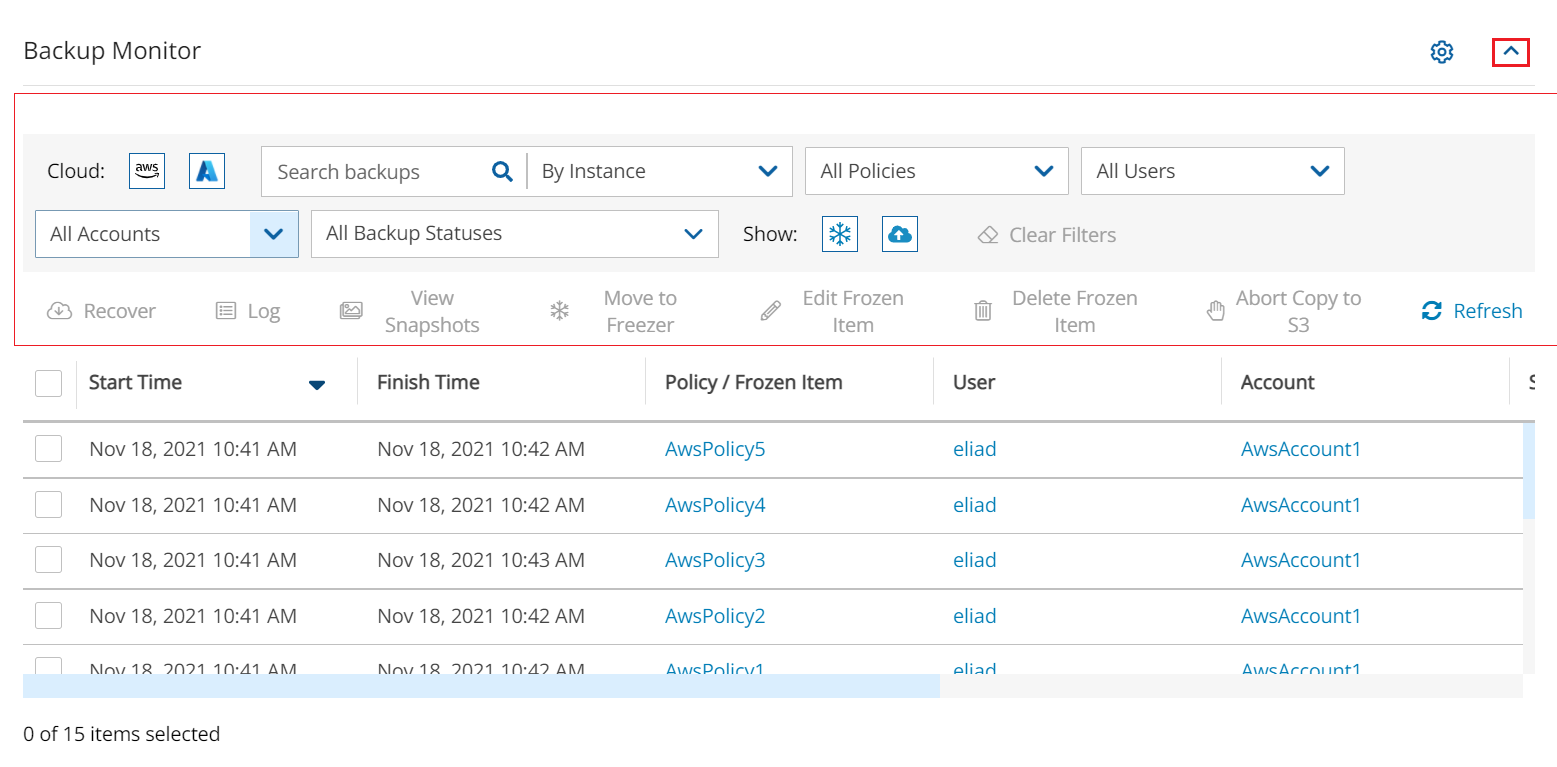

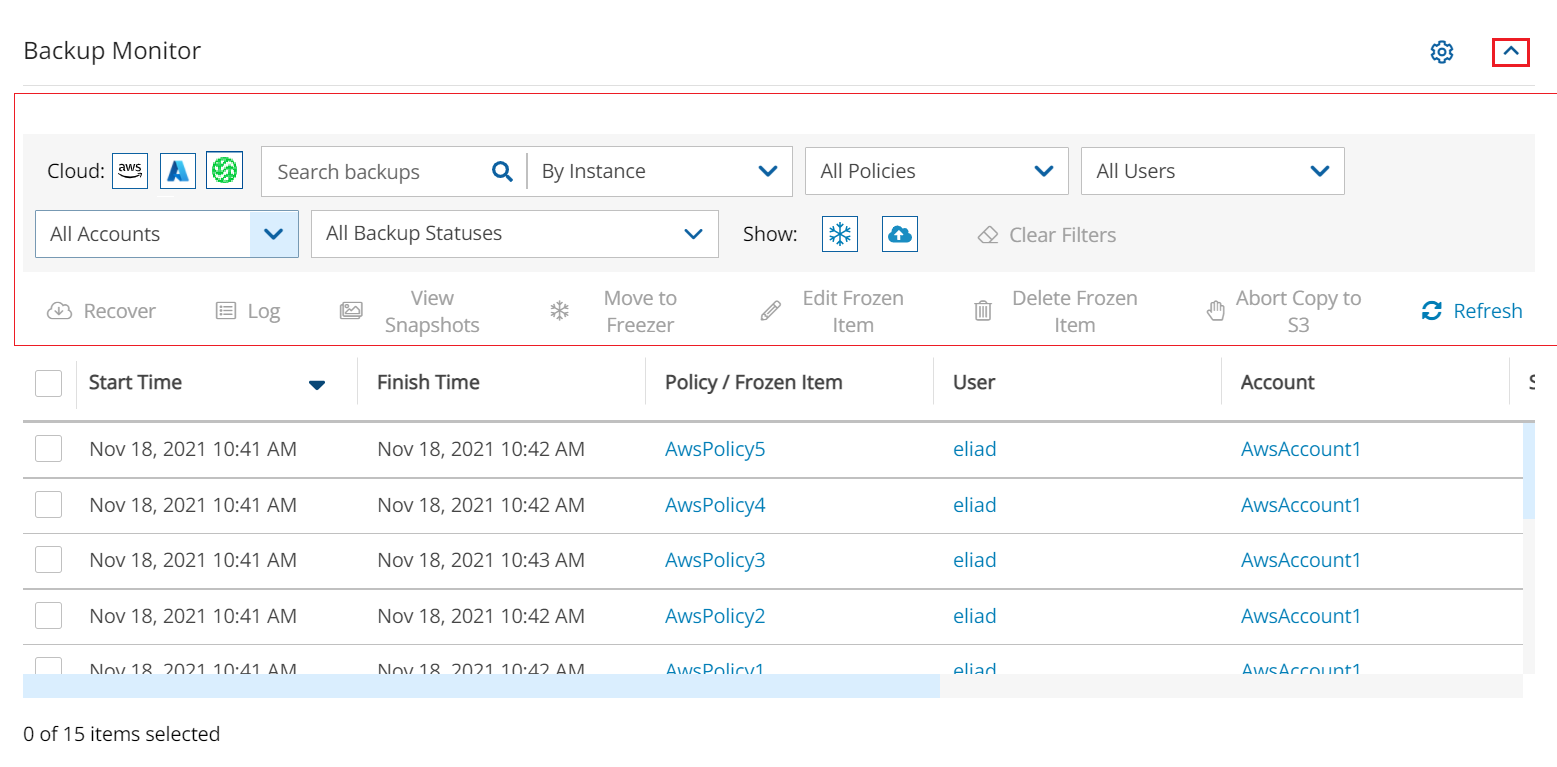

In the Backup Monitor, select the Cloud button for Wasabi .

To minimize the top bars, select the up arrow in the top right corner. To open the filters dialog box, select the filter icon ( ).

Filter as necessary by type of target, policies, users, accounts, backup status, and freezer status.

4. Select a backup, and then select Recover. The Recover screen opens. For backups with multiple resource types as targets, the Recover screen will have a separate tab for each type.

5. Follow the recovery instructions for the resource type as described in section .

You can model and manage your infrastructure resources with AWS CloudFormation.

The process to configure N2W to work with CloudFormation is a single stream that starts with subscribing to N2W on the Amazon Marketplace and ends with configuring the N2W server.

N2W provides several editions, all of which support CloudFormation.

An IAM role will automatically be created with minimal permissions and assigned to the N2W instance.

Configure CPM with CloudFormation will fail where the requested Instance type is not supported in the requested Availability Zone. Retry your request, but do not specify an Availability Zone or choose us-east-1a, us-east-1b, us-east-1c, us-east-1d, or us-east-1f.

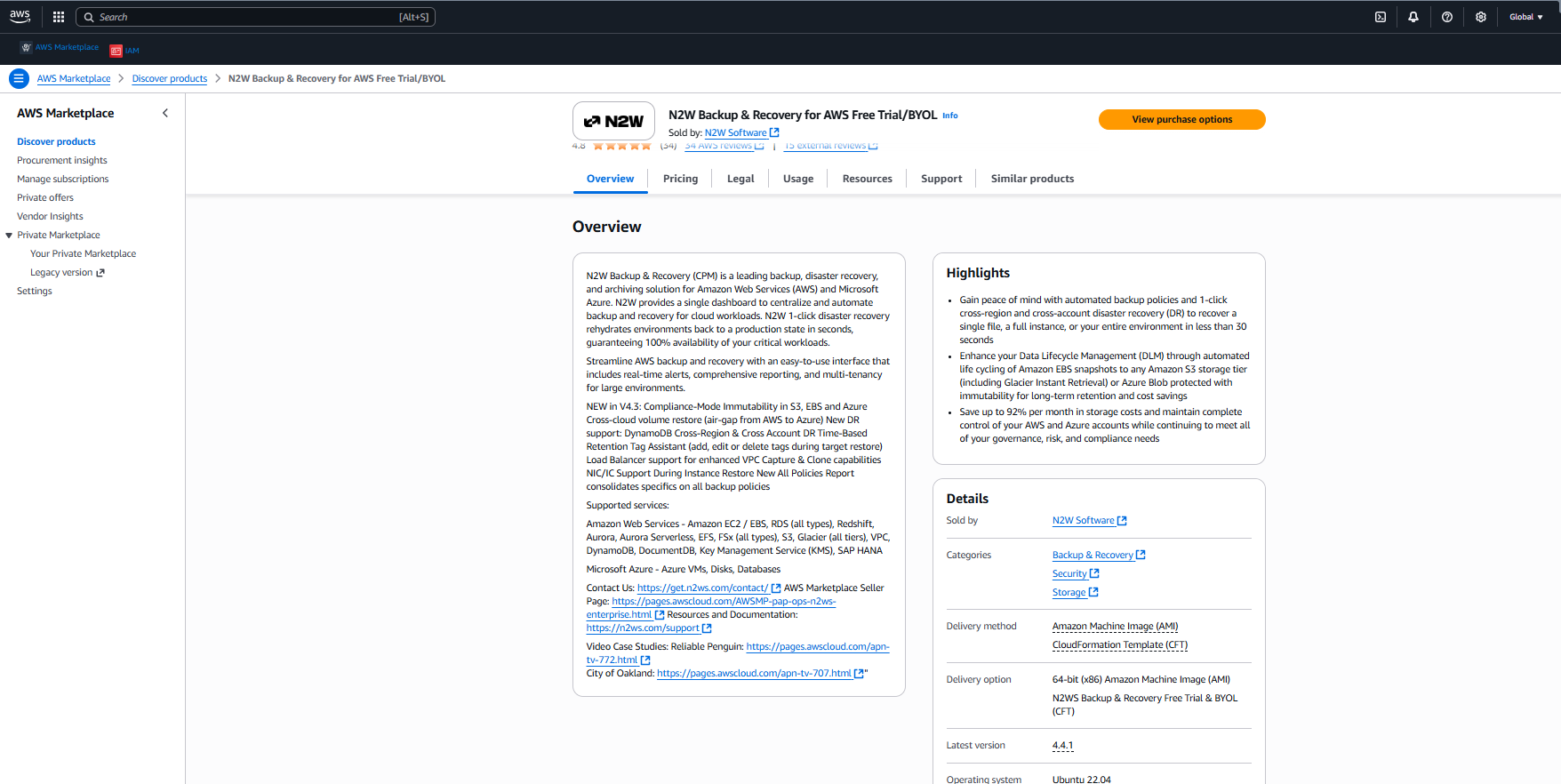

Go to

Search for N2W Software.

Select the AWS Free Trial & BYOL CPM edition, and then select View purchase options.

4. After reviewing the Overview, select View purchase options again.

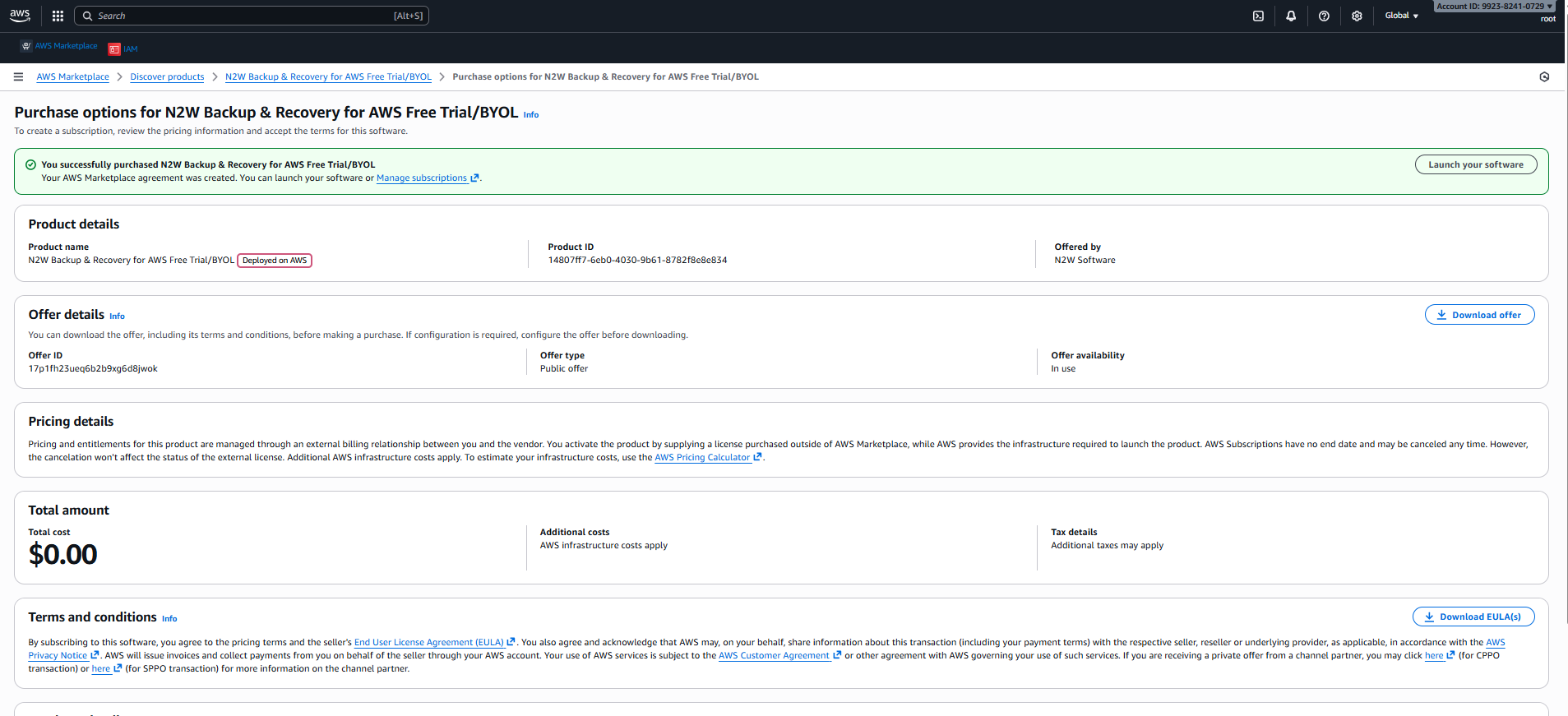

5. After purchasing N2W Backup & Recovery for AWS Free Trial/BYOL, select Launch your software.

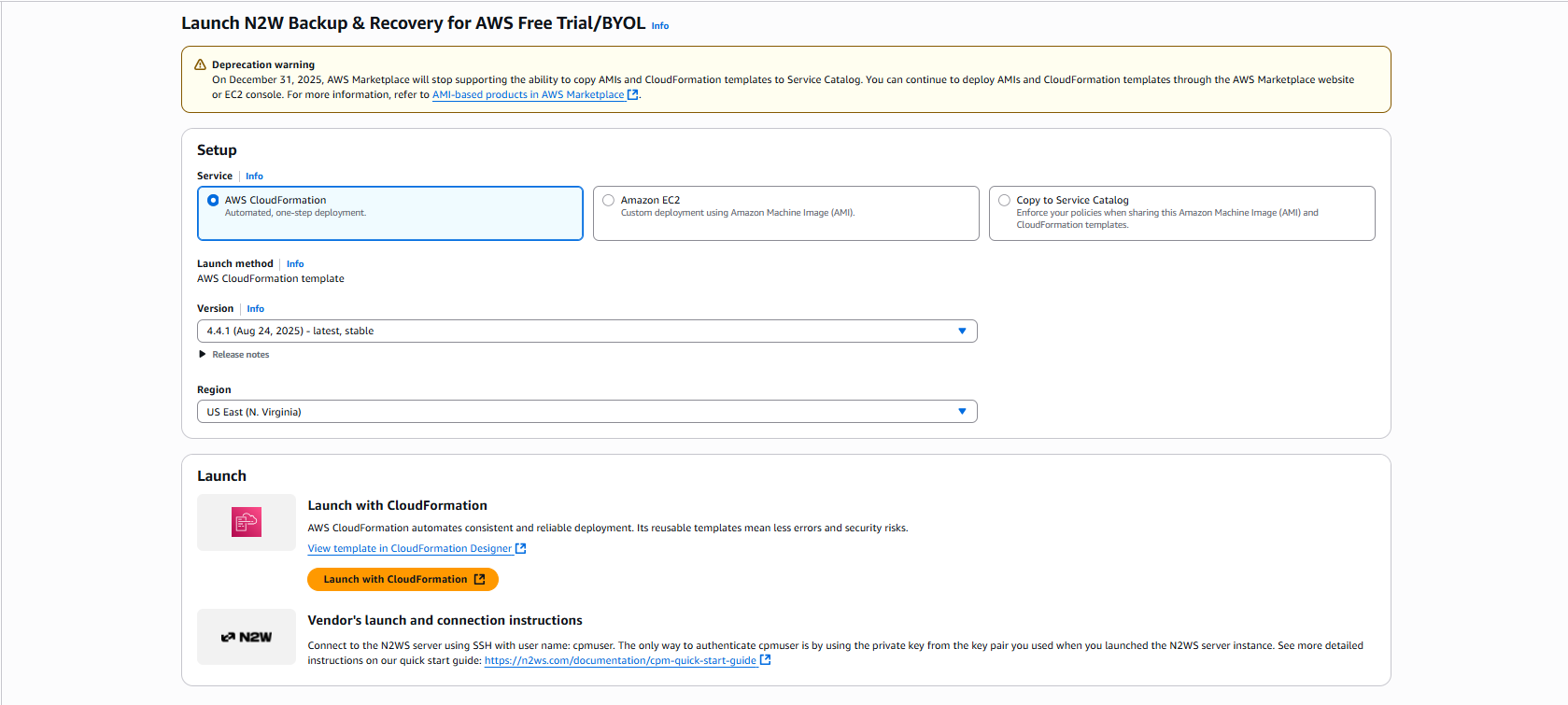

6. In the Setup section, select AWS CloudFormation for the Service. Select the relevant Version and Region, and then select Launch with CloudFormation.

7. In the Create Stack page:

a. In the Prerequisite - Prepare template section, select Choose an existing template.

b. In the Specify template section, select Amazon S3 URL. In the Amazon S3 URL window, the address of the template will appear.

c. Select View in Infrastructure Composer.

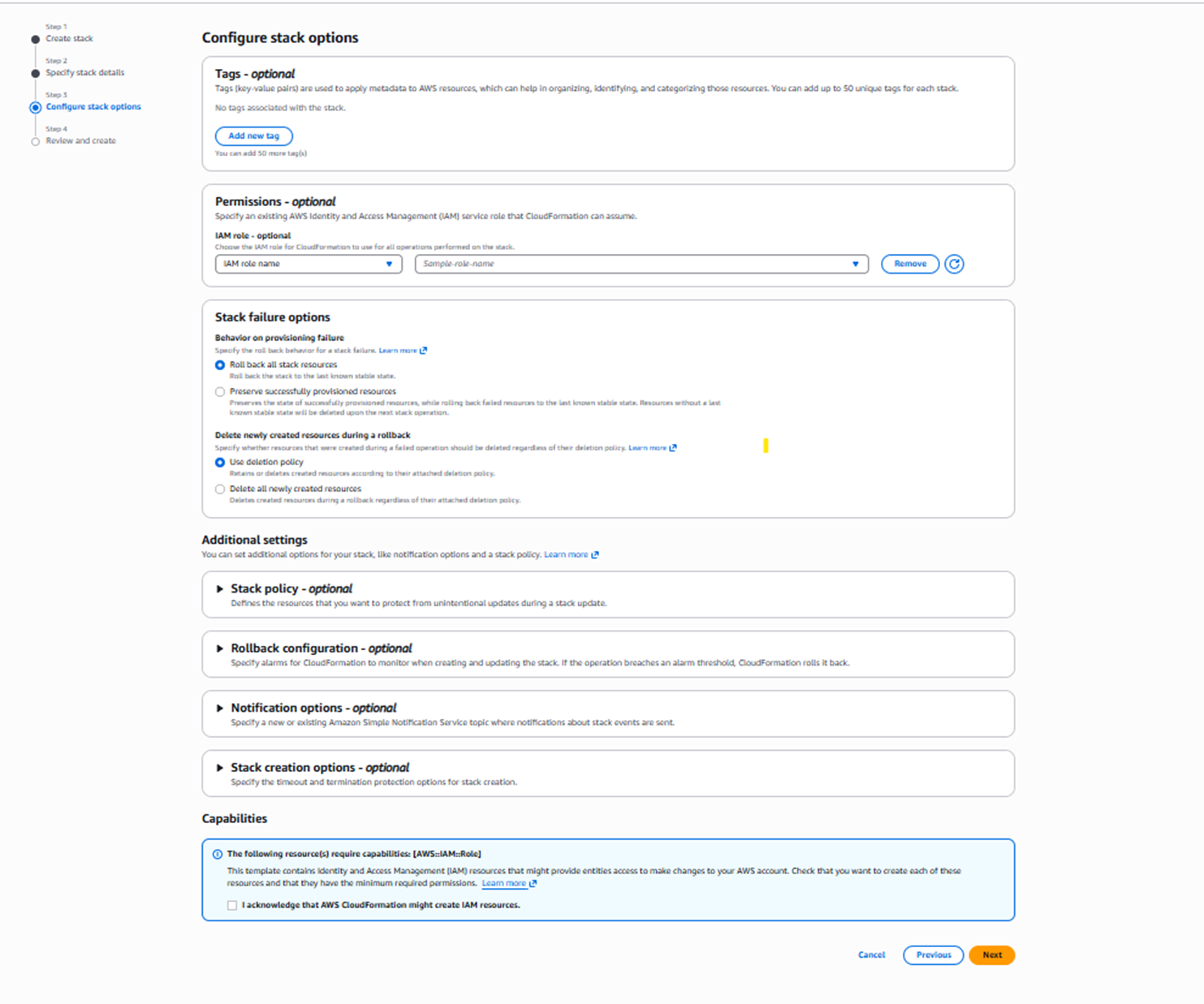

8. Select Next. The Configure stack options page opens.

a. If needed, select Add new tag.

b. In the Capabilities section, select I acknowledge that AWS CloudFormation might create IAM resources.

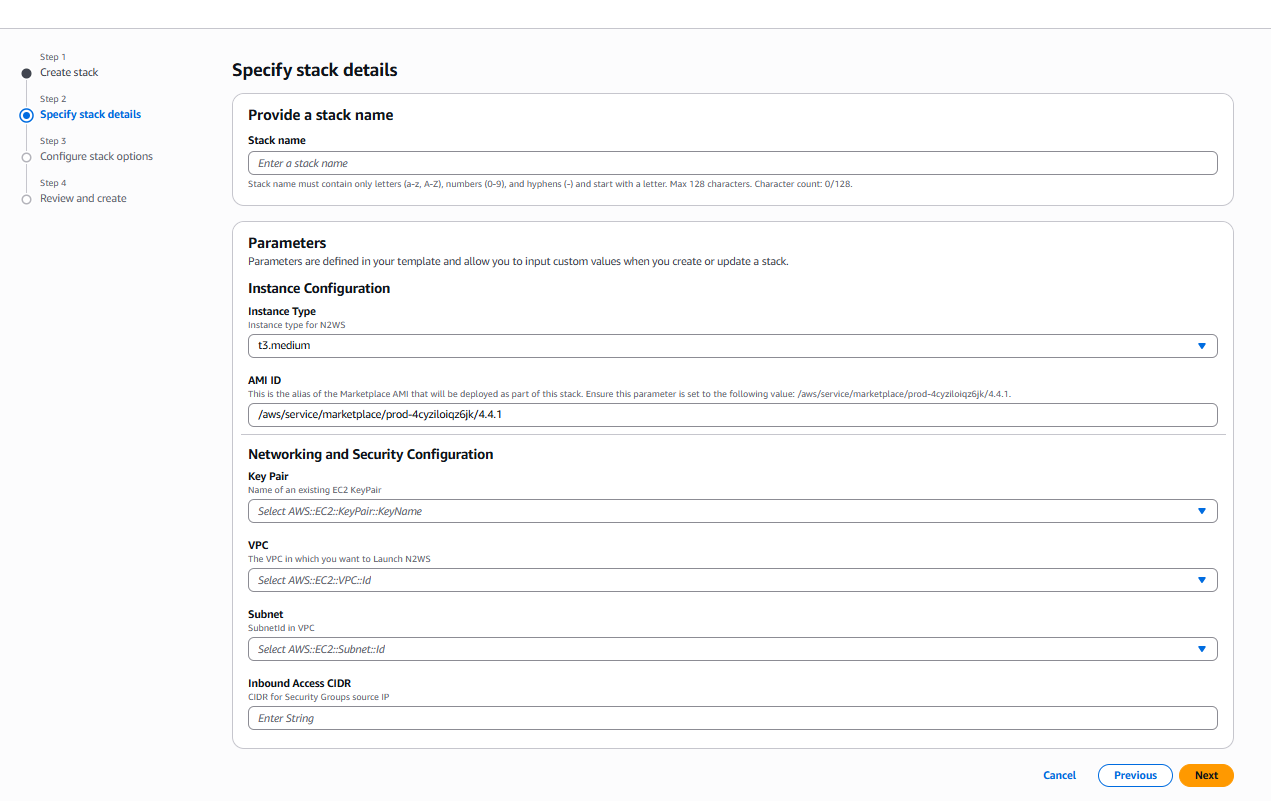

9. Select Next. The Specify stack details page opens. Provide a stack name and parameters.

For Inbound Access CIDR, security groups act as a firewall for associated instances, controlling both inbound and outbound traffic at the instance level. Configuring Inbound Access CIDR allows you to add rules to a security group that enable you to connect to your Linux instance from your IP address using SSH:

If your IPv4 address is 203.0.113.25, specify 203.0.113.25/32 to list this single IPv4 address in CIDR notation.

If your company allocates addresses within a range, specify the entire range, such as 203.0.113.0/24

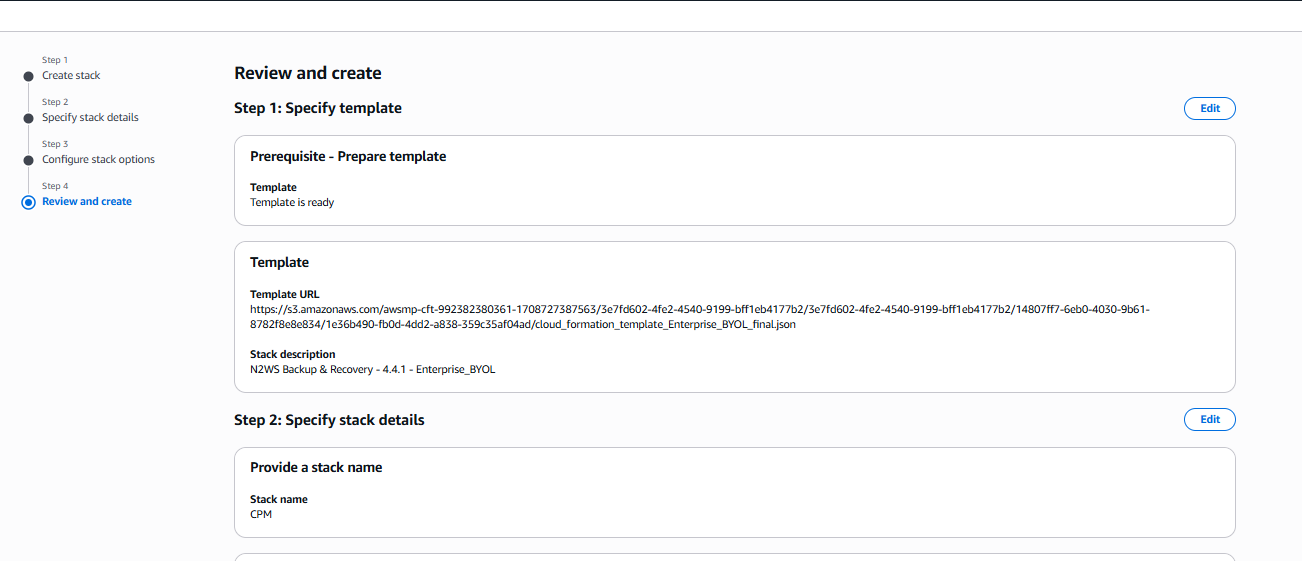

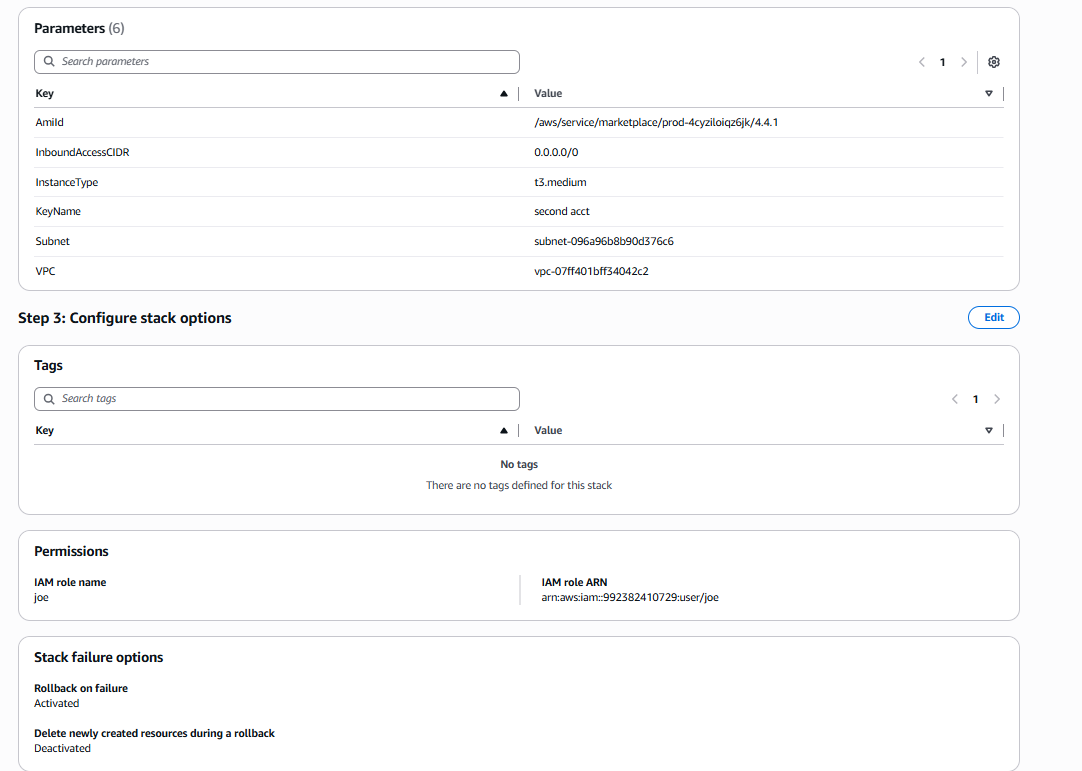

Select Next. The Review and create page opens. Review and edit as necessary.

Step 1, Specify template, and Step 2, Specify stack details:

b. Step 2, Parameters, and Step 3 Configure stack options, such as Tags and Permissions:

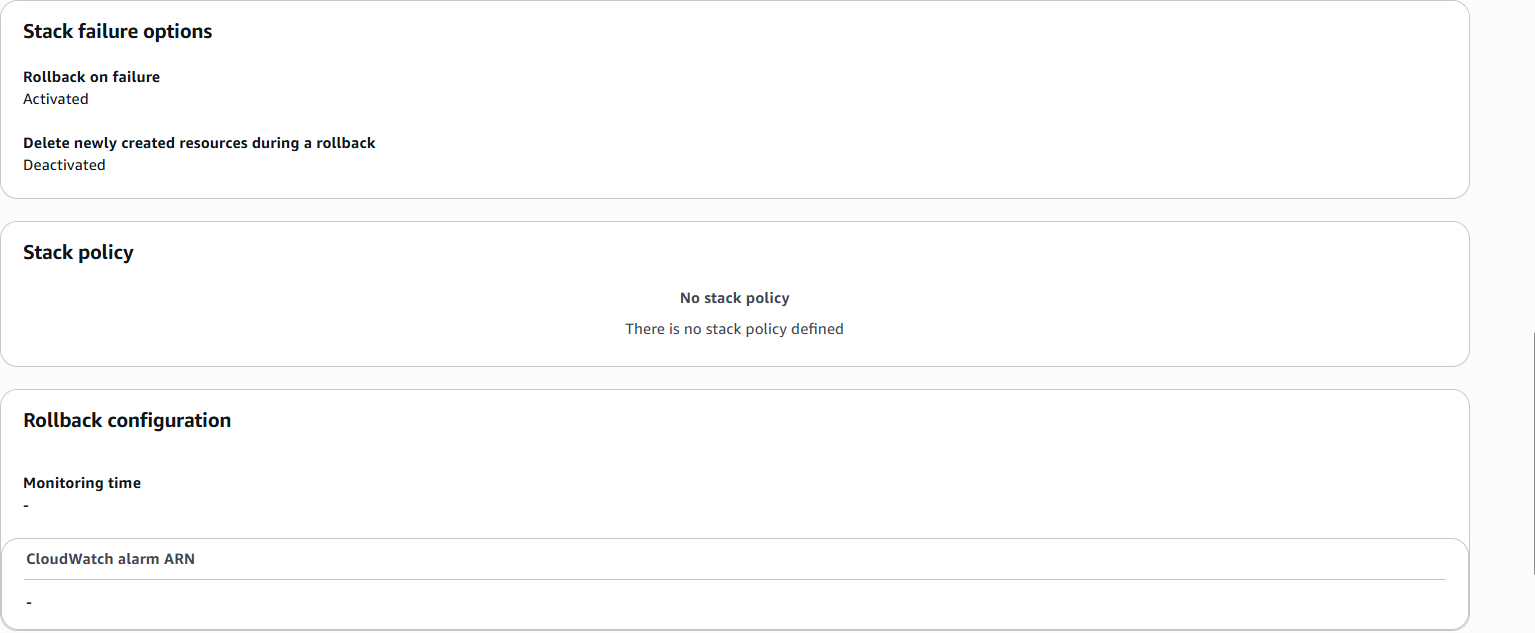

c. Step 3, Stack failure options, Stack policy, and Rollback configuration:

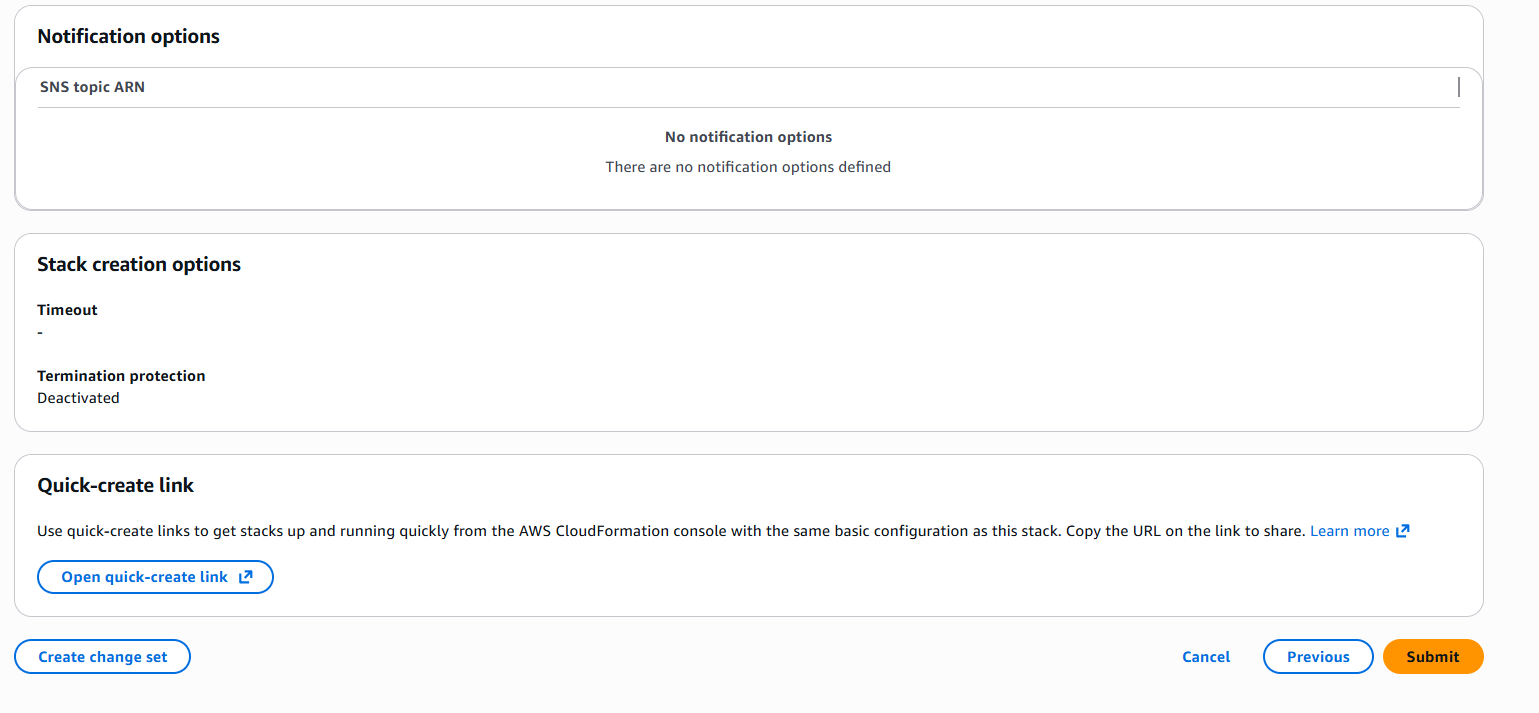

d. Step 3, Notification options, Stack create options, and Quick-create link:

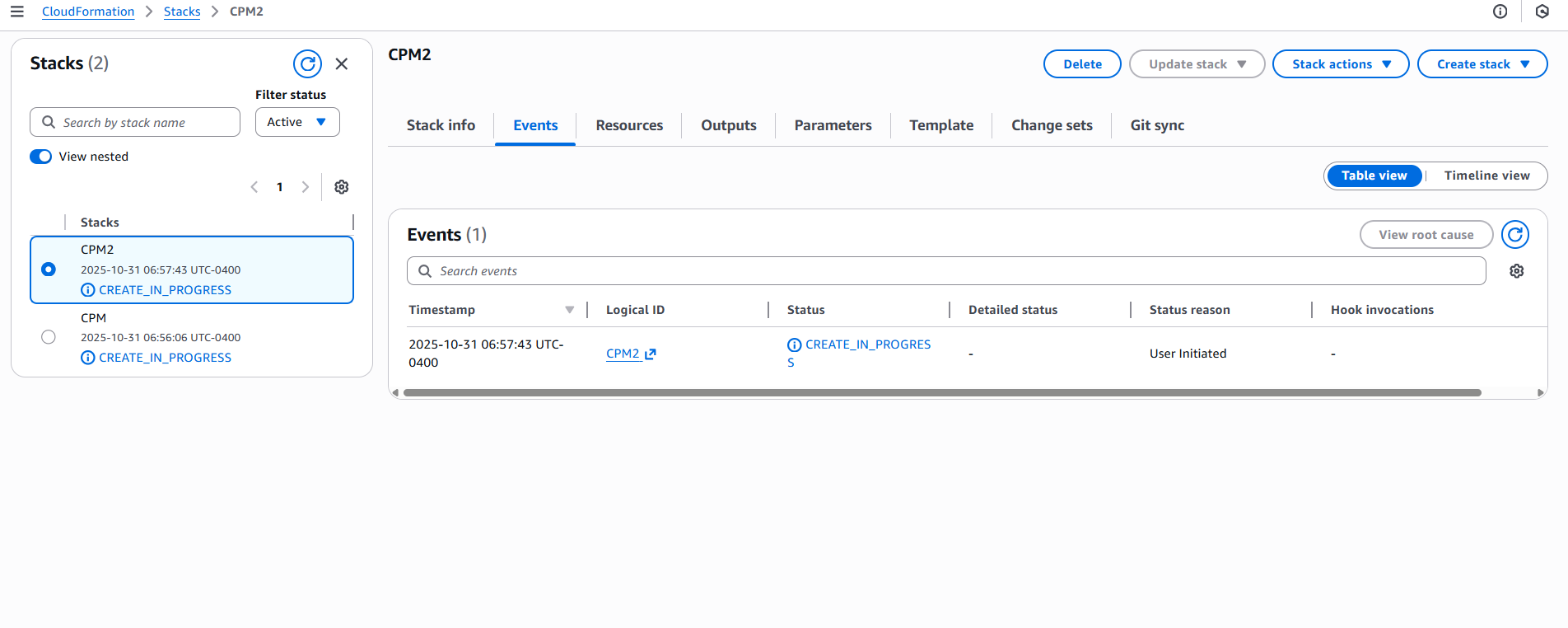

After all sections are reviewed, select Submit. The last configuration page opens, where you can observe the progress of the stack creation.

12. Continue configuring N2W as in section .

This section describes how to monitor and manage N2W costs.

N2W Software customers have a single point of control and management over the procedure of backing up their cloud-based services and data stores. Monitoring the costs will help customers define backup plans that fit their budget and thereby avoid unexpected costs. N2W provides the following services for monitoring costs:

Cost Explorer – Cost of storage that was created by N2W to hold short-term backup snapshots of the customer’s cloud-based assets.

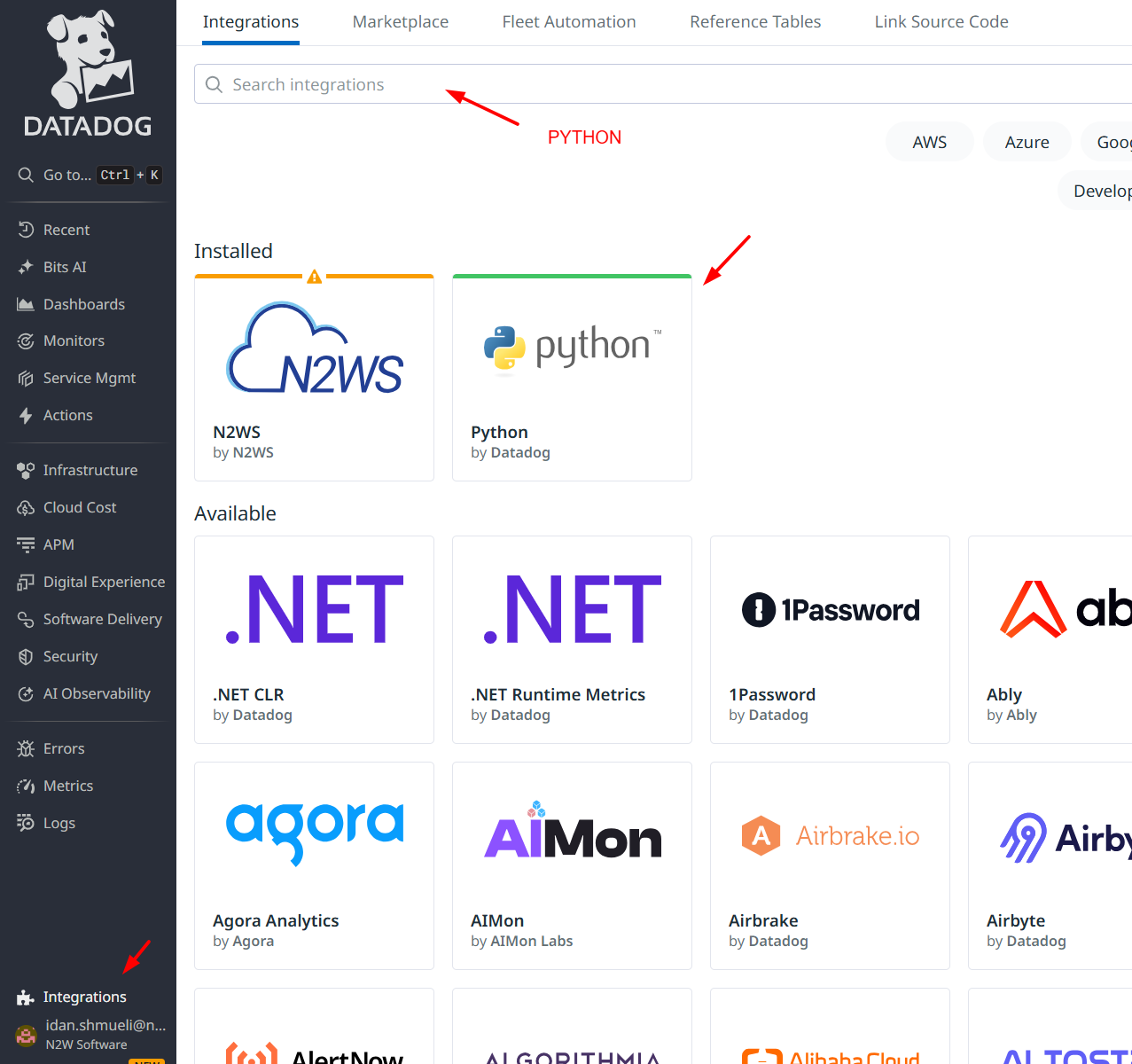

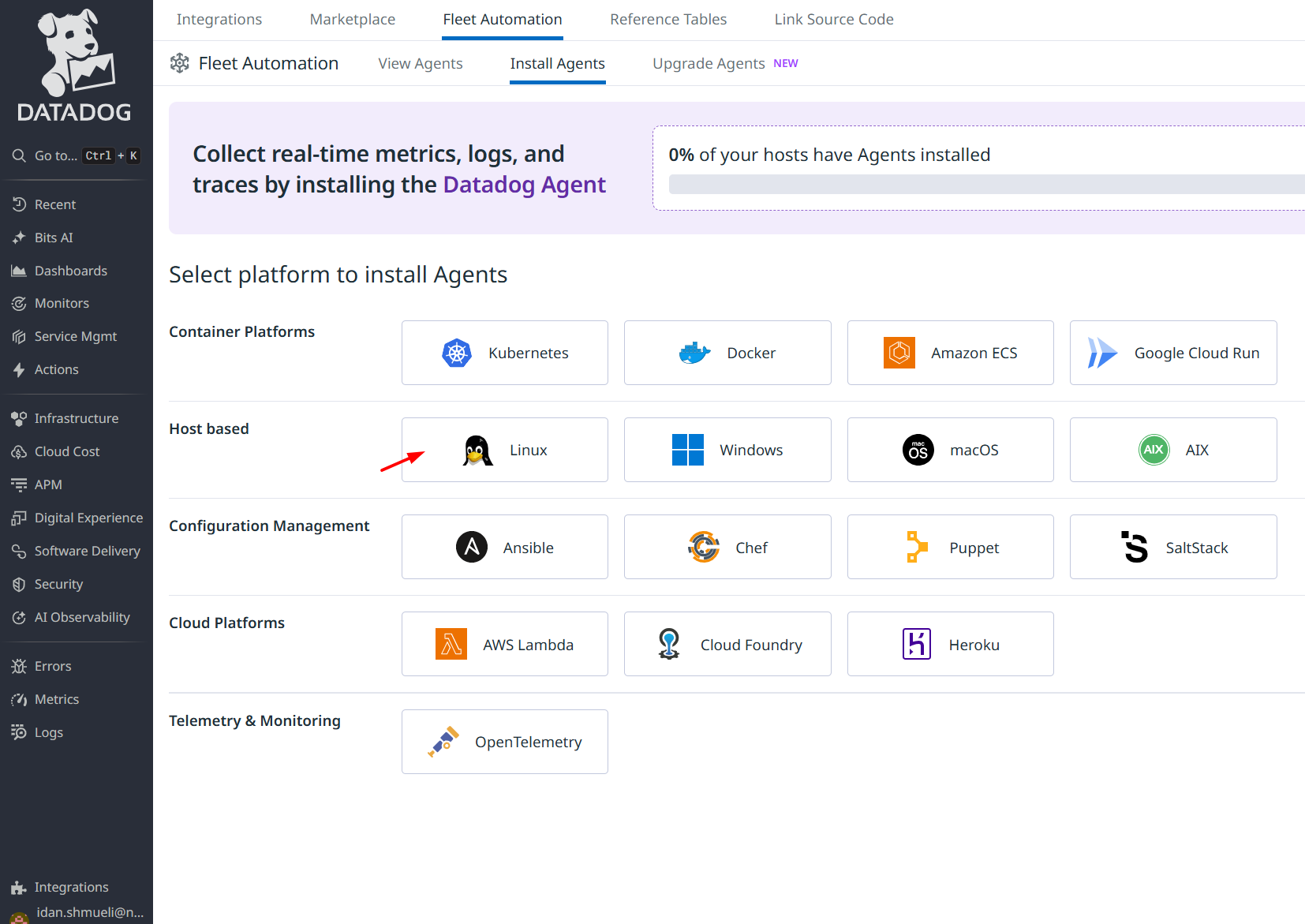

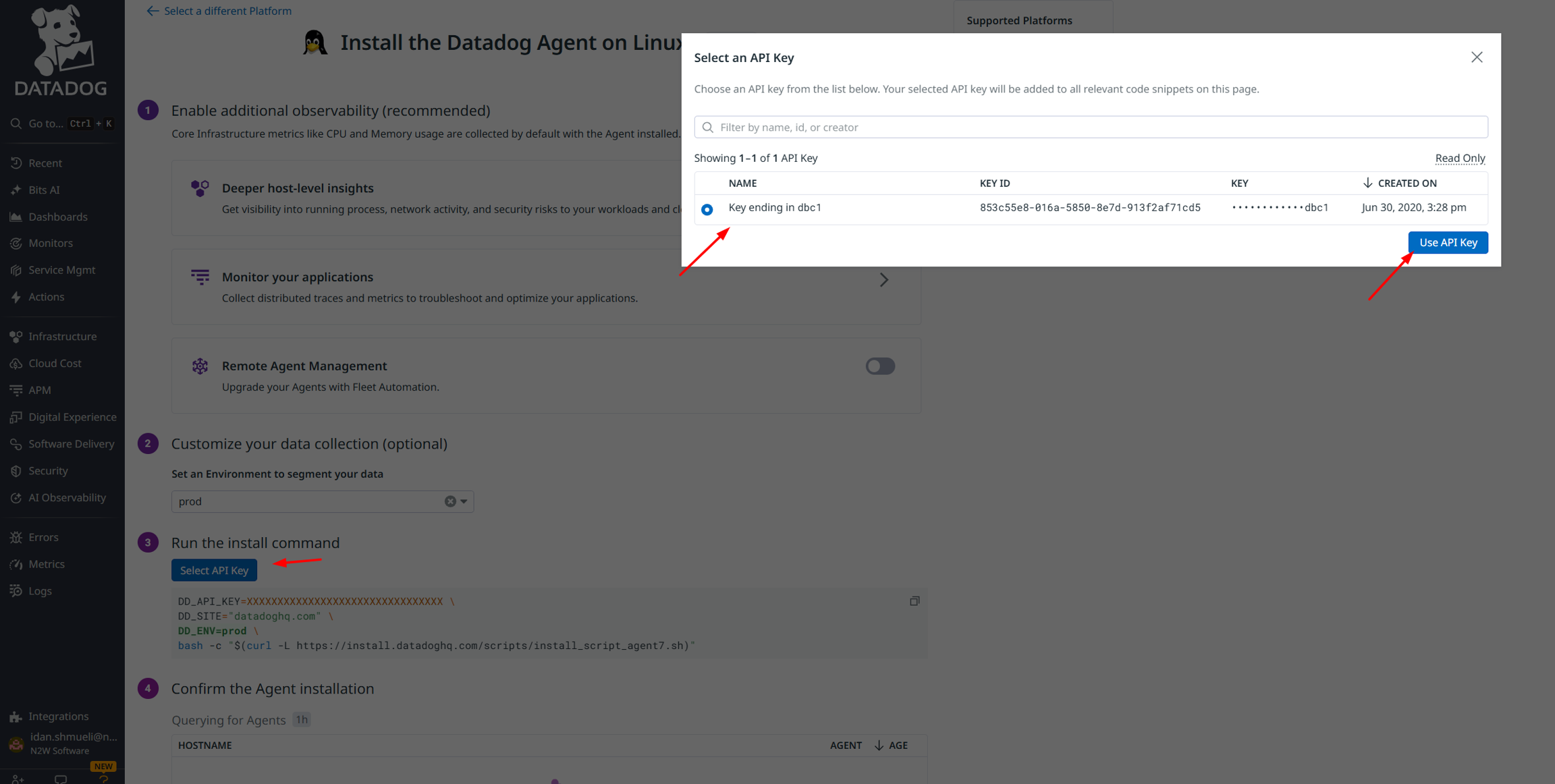

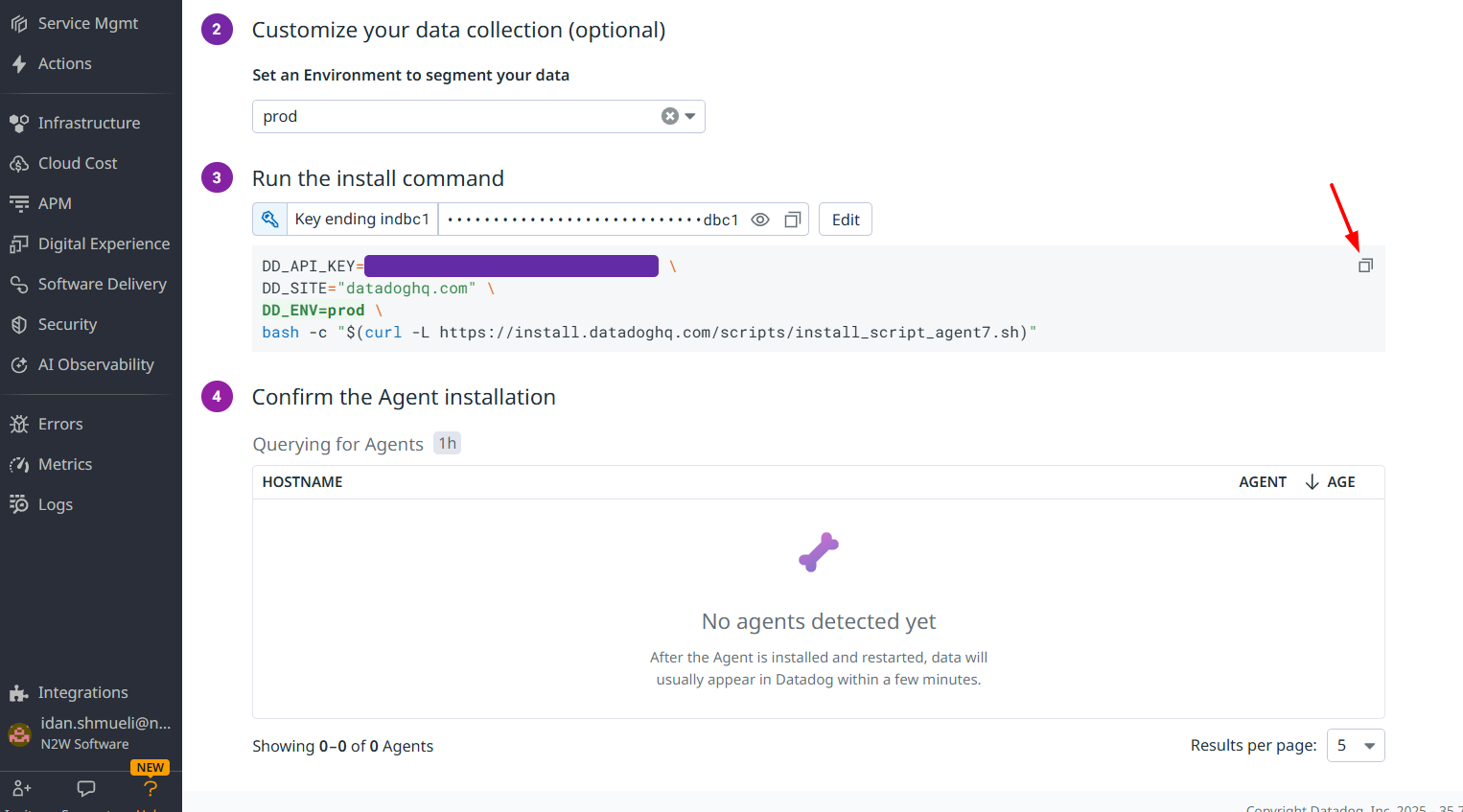

In this section, you will learn how to integrate Datadog with N2W.

N2W Backup & Recovery Instance is now supporting the monitoring of backups, DR, copy to S3, alerts, and more by Datadog. Datadog is a monitoring service for cloud-scale applications, providing monitoring of servers, databases, tools, and services, through a SaaS-based data analytics platform. Datadog will allow CPM users to monitor and analyze the N2W Backup & Recovery Dashboard metrics.

Use the following procedure to activate Datadog. Ready-made dashboards for monitoring are included.

usage: n2ws-reset-login.pyc [-h] [-u USER] [-a] [-p] [-m] [-y]

Reset login credentials and/or MFA for specific/all users.

optional arguments:

-h, --help show this help message and exit

-u USER, --user USER apply for specific user (name)

-a, --all apply for all users

-p, --password password to set

-m, --mfa disable MFA

-y, --yes auto confirmn2ws-reset-login -u <root_user_name> -m -yn2ws-reset-login -u <root_user_name> -m -y -p[policy__p1]

max_seconds_to_wait_for_vss=100[agent__agent_id]

max_seconds_to_wait_for_vss=100[all_remote_agents]

max_seconds_to_wait_for_policy=600[local_agent]

max_seconds_to_wait_for_policy=600If you specify 0.0.0.0/0, it will enable all IPv4 addresses to access your instance using SSH.

For further details, refer to “Adding a Rule for Inbound SSH Traffic to a Linux Instance” at https://docs.aws.amazon.com/AWSEC2/latest/UserGuide/authorizing-access-to-an-instance.html

Wasabi Repository buckets may not be versioned.

Don't rename a Wasabi bucket after selection as a repository as the copy will fail.

Name – Name for this N2W account.

User – The N2W user that this account belongs to. See section 18.

Access Key ID - ID provided by N2W for authentication.

Secret Access Key - Key provided by N2W for authentication.

Name - Type a unique name for the new repository, which will also be used as a folder name in the Wasabi Repository container. Only alphanumeric characters and the underscore are allowed.

Description - Optional brief description of the contents of the repository.

User – Select the user in the list.

Account - Select the account that has access to the repository.

Wasabi S3 Bucket Name – Select the name of the S3 bucket from the list.

When creating a policy with the S3 option enabled, N2W will manage the lifecycle according to the policy settings. When configuring S3, consider the differences in the storage types:

Snapshot – The fastest option. It enables free recovery within seconds, but it has the highest storage cost. Snapshot is useful for backups that you need to recover quickly and keep for a short time.

S3 Storage – Provides much cheaper storage costs, but recovery is slower and costs a little more. S3 Storage is useful for backups that do not need quick recovery time and that you want to keep for a long time.

Glacier – Provides the cheapest storage costs, but recovery is the most expensive and the slowest. Glacier is useful for backups that you need to keep for several decades for compliance purposes and which you don’t expect to have to recover urgently.

N2W does not support File Level Recovery from Glacier.

When configuring Copy to S3, consider the following when deciding where to put your S3 buckets:

Having the bucket in a different region then the target snapshot will incur AWS cross-region charges and will also result in slower copy speed due to the greater physical distance.

Putting the bucket in the same region as your protected resources will:

Help avoid cross-region data charges by AWS.

Help make the copy faster.

One bucket for all regions makes for easier management, while one bucket per region makes for optimal cost.

Since each AWS S3 bucket has some performance limits, configuring an AWS S3 Repository per policy will result in faster and more stable copies to S3. Or, if several policies are all writing to the same bucket, you can stagger the copies to avoid putting too much load on the same bucket at the same time.

Using VPC endpoint enables instances to use their private IP to communicate with resources in other services, such as S3, within the AWS network without incurring network transfer fees.

You can set up a VPC S3/EBS endpoint in the VPC where the S3 worker is launched to make sure that the communication from the worker will be over private IP. Using an VPC S3 Endpoint can help avoid NAT costs (if used in the VPC) as there is no data processing or hourly charges for using Gateway Type VPC endpoints. Additionally, the copy process will be more secure as the communication will be over private IP, and the copy speed over the S3 Endpoint should be faster than over the Internet.

If the bucket is in another region or in another account, the transport charges will be incurred anyway.

In addition, you can set up VPC peering between the worker VPC and the N2W server VPC so that communication between the worker and the server will also be routed over private IP.

When creating the S3 bucket in AWS, you have 2 encryption options:

SSE-S3 server-side encryption with Amazon S3-managed keys. This option is free, but it is less secure than SSE-KMS.

SSE-KMS server-side encryption with AWS KMS. This option has a cost per request, but it is more secure than SS3-S3.

When setting up the bucket, consider whether the free option (SSE-S3) is sufficient for your use case, or if you need greater security with SSE-KMS, which is more expensive.

1. In AWS, create a subnet within the VPC of the region.

After successful creation, the successful creation message appears.

The subnet is automatically associated with the default route table.

2. Create a new route table.

3. Change the subnet association by associating the previously created subnet with this route table. 4. Create a VPC endpoint for S3 in the region and associate it with the previously created route table.

5. Choose a region.

6. Then choose the previously defined route table. The permissions to access the bucket will be defined by the IAM policies attached to the roles of N2W.

7. Grant Full Access.

The route table of the subnet now looks like the following:

8. If N2W is in a different account/region/VPC, add an Internet Gateway to the route table so the ‘worker’ can communicate with N2W.

1. Add the following rule:

The route table will look like:

2. In this configuration, the connection to S3 will be routed to the VPC endpoint. See the example below:

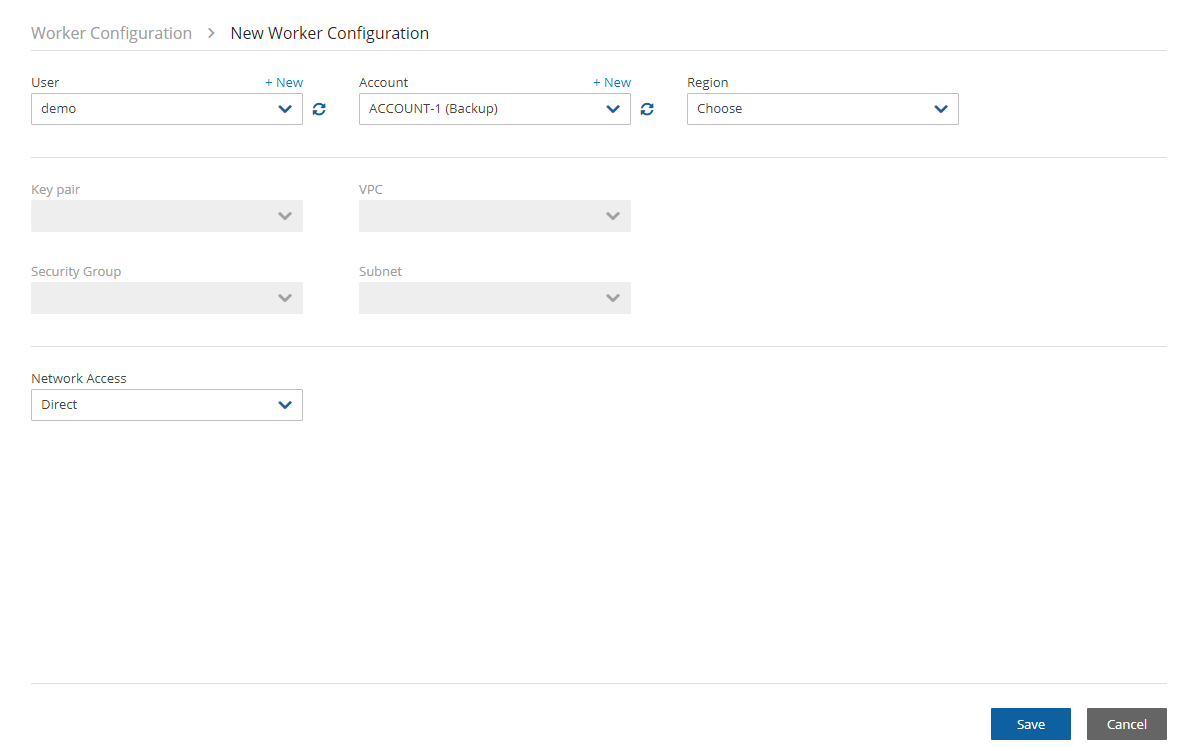

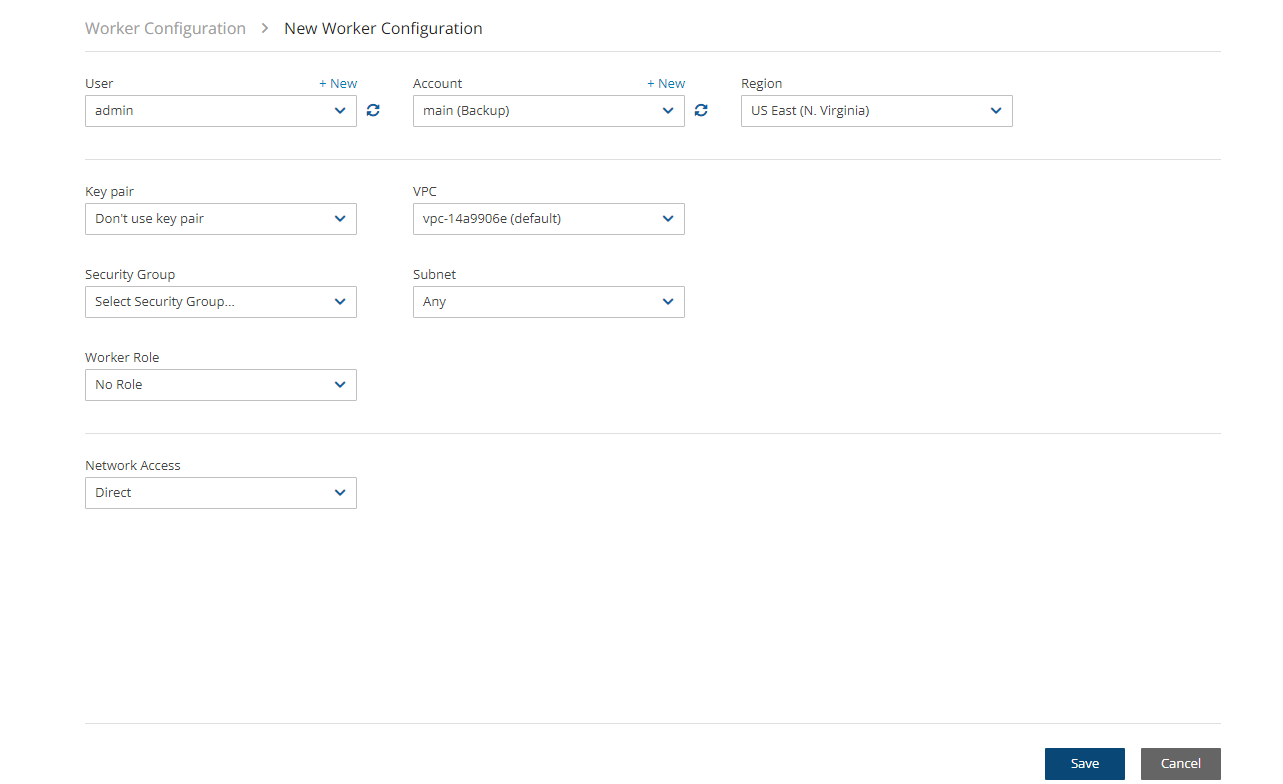

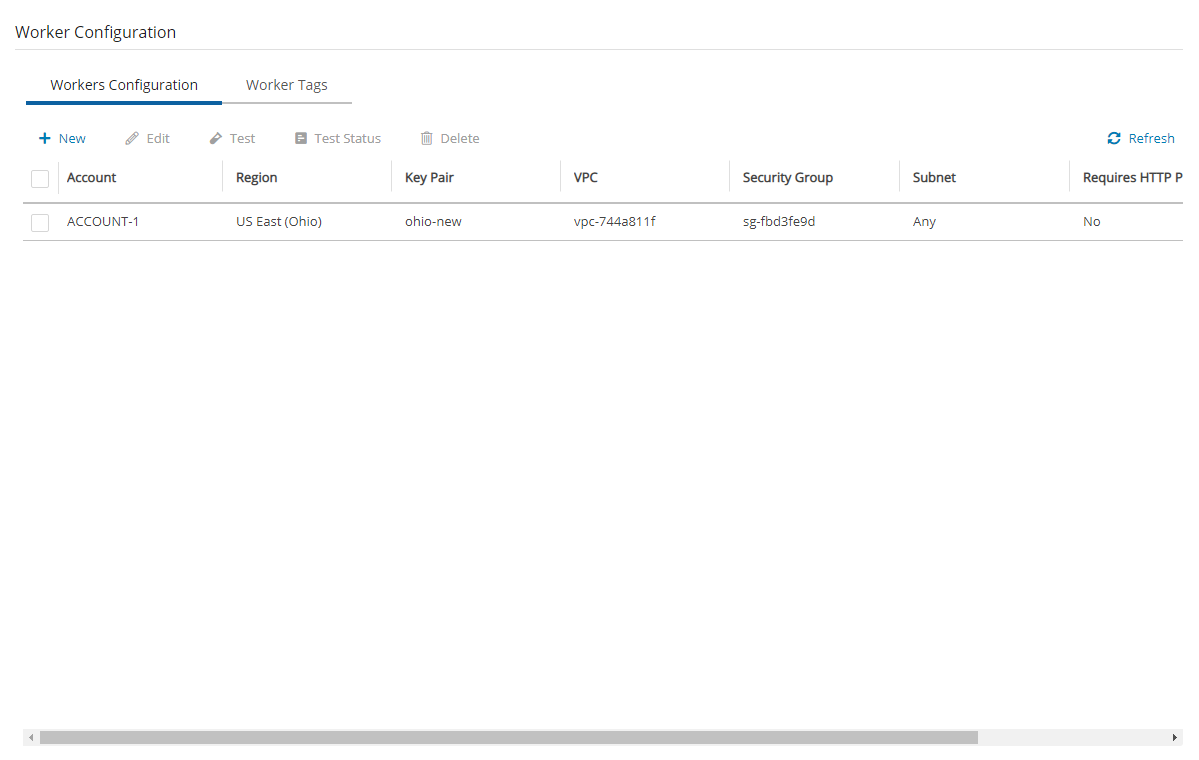

9. In N2W, select the Worker Configuration tab.

1. Select New. 2. Configure the worker to use this subnet in the specific region and the VPC where it is defined.

For additional information about setting up VPC Gateway Endpoints, see https://docs.aws.amazon.com/vpc/latest/userguide/vpce-gateway.html

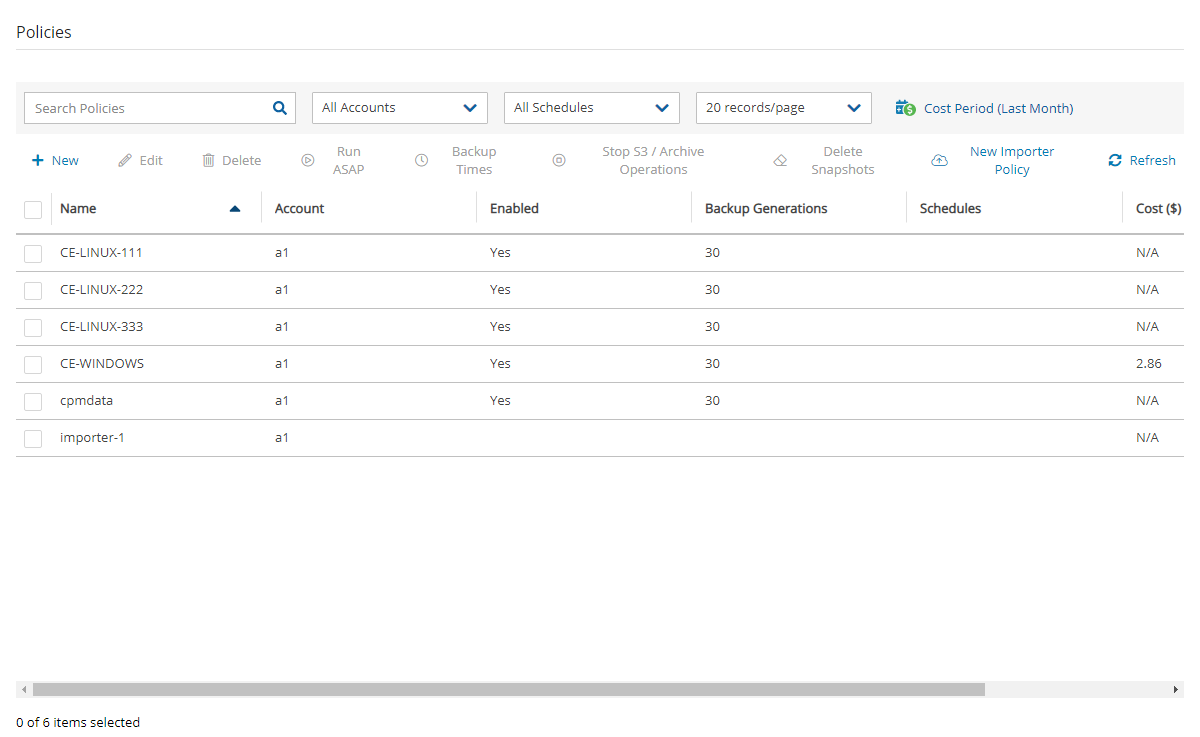

Allows customers to issue monthly bills per policy backups.

Calculations are made for the last month by default and can be set to prior periods.

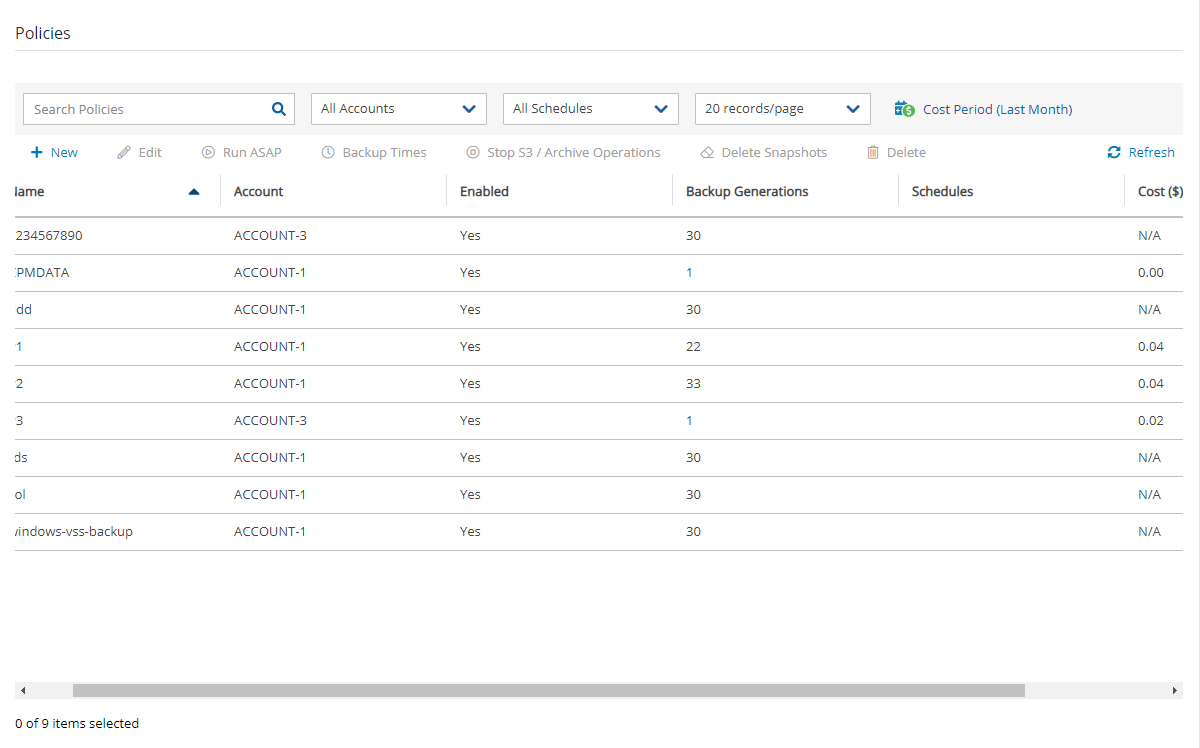

Breakdown of costs is found in the Costs ($) column of the Policies tab.

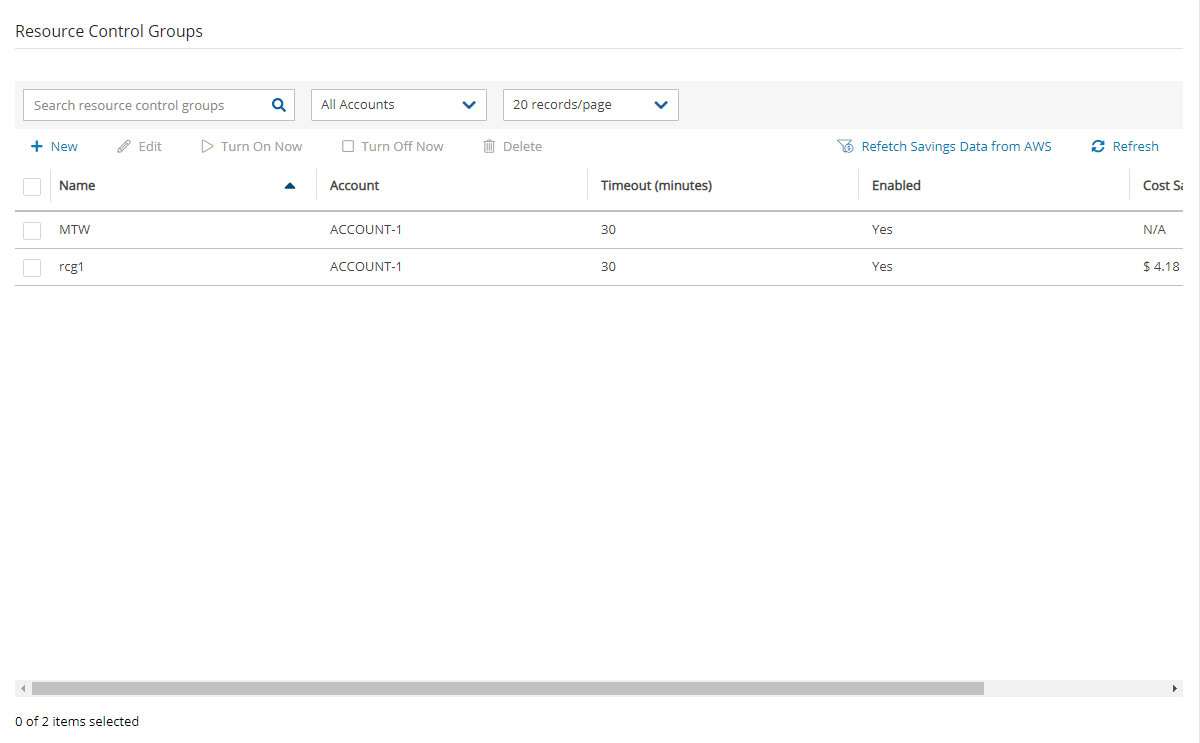

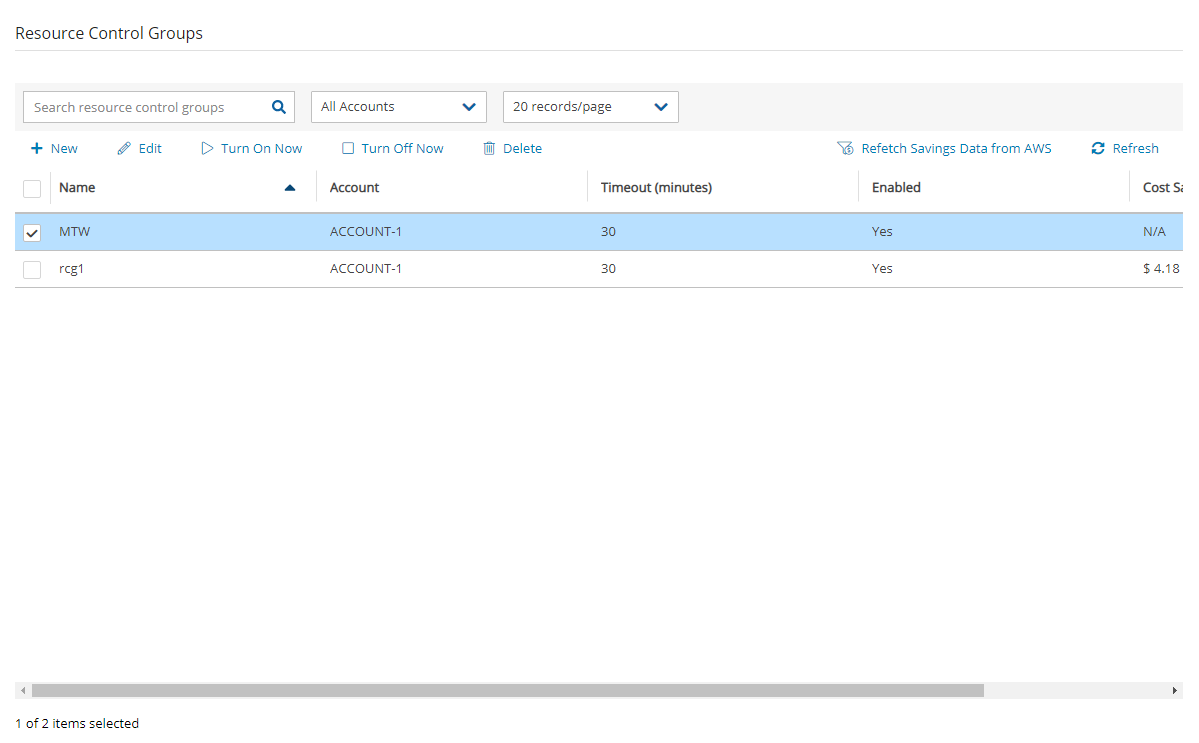

Cost Savings – Amount of money that users can save by enabling Resource Control management.

Calculations are made for the next month.

Breakdown of savings is found in the Cost Savings column of the Resource Control Groups tab.

Cost Explorer support is currently limited to the AWS resource EBS.

N2W uses the AWS REST API for retrieving costs for the specified policy. The Cost Explorer API allows us to programmatically query your data usage and compute the cost and usage data. It can take up to 48 hours for the cost increase to take effect.

The costs will include both short-term and long-term backups (cross-region DR), but not snapshots that were copied onto cheaper media such as S3 and Glacier.

In the Dashboard screen, you can find both Cost Explorer and Cost Savings information in their respective tiles:

Cost Explorer is not available currently in AWS GovCloud (US).

Following are the steps necessary for using Cost Explorer:

In AWS, activate cost allocation tags. See section 25.1.1.

In N2W:

For CPM, select Enable Cost Explorer in the Cost Explorer tab of General Settings.

For each designated user, enable Allow Cost Explorer. See section .

To disable Cost Explorer, it is sufficient to clear Enable Cost Explorer in the Cost Explorer tab.

To allow CPM Cost Explorer calculations in AWS, users must add cost allocation tags once.

To activate user cost allocation tags:

Log in to the AWS Management Console at https://console.aws.amazon.com/billing/home#/.

Open the Billing and Cost Management console.

In the navigation pane, choose Cost Allocation Tags.

Choose the following tags to activate, and then select Activate:

cpm_server_id

cpm_policy_name

It can take up to 24 hours for tags to activate.

For complete details, see http://docs.aws.amazon.com/awsaccountbilling/latest/aboutv2/activating-tags.html

In the Policies tab, you can monitor the backup costs of each policy for the last month or a different time period. The breakdown of costs per policy in dollars for the desired period appears in the Cost ($) column.

Cost Explorer inclusions and exclusions:

The cost figure includes all policies that were ever backed up by N2W and does not filter out deleted policies. The costs for policies deleted during a cost period are still included in the cost figure for that period.

N2W Cost Explorer does not include the cost of cross-account DR snapshots.

If the Allow Cost Explorer option is not enabled for the logged-in user, or if the backup was generated within the last 24 hours, the Cost ($) column will show ‘N/A’. To enable Cost Explorer for a user, see section 18.3.

You can monitor costs for a different time period by setting the Cost Period for all policies. The maximum period is one year. The current period is shown next to Cost Period in the Policies tab below the filters.

To specify the period for cost calculations:

Select the Policies tab and then select Cost Period.

Select Period.

Choose the From and To dates from the calendars, selecting Apply after each date.

In the Resource Control Groups tab, you can monitor the expected Cost Savings for each group, based on the schedules you have set to Turn Off an instance or an RDS database.

When the Operation Mode of a Resource Control Group is Turn Off Only, N2W will show ‘No-Data’ in the Cost Savings column.

Cost Savings currently is not supported in GovCloud regions.

To update the screen with the current AWS savings, select Refetch Savings Data from AWS, and then select View updated data in the data refetched message.

Setup Datadog Account.

Visit Datadog at https://www.datadoghq.com/pricing/ and set up an account that fits your scale.

Install Python Integration.

a. Login to Datadog and go to Integrations.

b. Search for ‘Python’ and install it:

c. Search for 'N2WS' and install it. See step 6.

3. Enable Datadog support on N2W Instance.

a. Connect to your N2W Backup & Recovery Instance with SSH Client.

b. Type sudo su.

c. Add the following lines to /cpmdata/conf/cpmserver.cfg:

If cpmserver.cfg doesn't exist, create, and add the above lines.

d. Run service apache2 restart.